2025.1.26 Machine Learning Notes: C-RNN-GAN Literature Review

2025.1.26 Weekly Report

- Literature Review

- Paper Information

- Abstract

- Innovations

- Network Architecture

- Experiments

- Conclusion

- Limitations and Future Work

- Summary

Literature Review

Paper Information

- Title: C-RNN-GAN: Continuous recurrent neural networks with adversarial training

- Venue: NIPS 2016 (Constructive Machine Learning Workshop)

- Author: Olof Mogren

- Year: 2016

- Link: https://arxiv.org/pdf/1611.09904

Abstract

Generative adversarial networks (GANs) are designed to generate data, while recurrent neural networks (RNNs) are commonly used to generate sequences. RNNs have already been applied to music generation, but mostly with symbolic representations. In this paper, the author investigates whether adversarial training can be used to generate sequences of continuous data, and evaluates the approach on classical music in MIDI format. The proposed C-RNN-GAN (continuous recurrent neural network with adversarial training) is a neural network architecture that uses adversarial training to model the joint probability of a whole sequence and to generate sequences of data. The model is trained on sequences of classical music in MIDI format and evaluated with metrics such as scale consistency and tone span. The results indicate that adversarial training is a viable way to train such networks, and the model offers a new approach to generating continuous sequential data.

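Innovations

The main contribution is the C-RNN-GAN model, which applies adversarial training to continuous sequence data. Each tone event in the music signal is modeled with four real-valued scalars, and the whole model is trained end to end with backpropagation.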

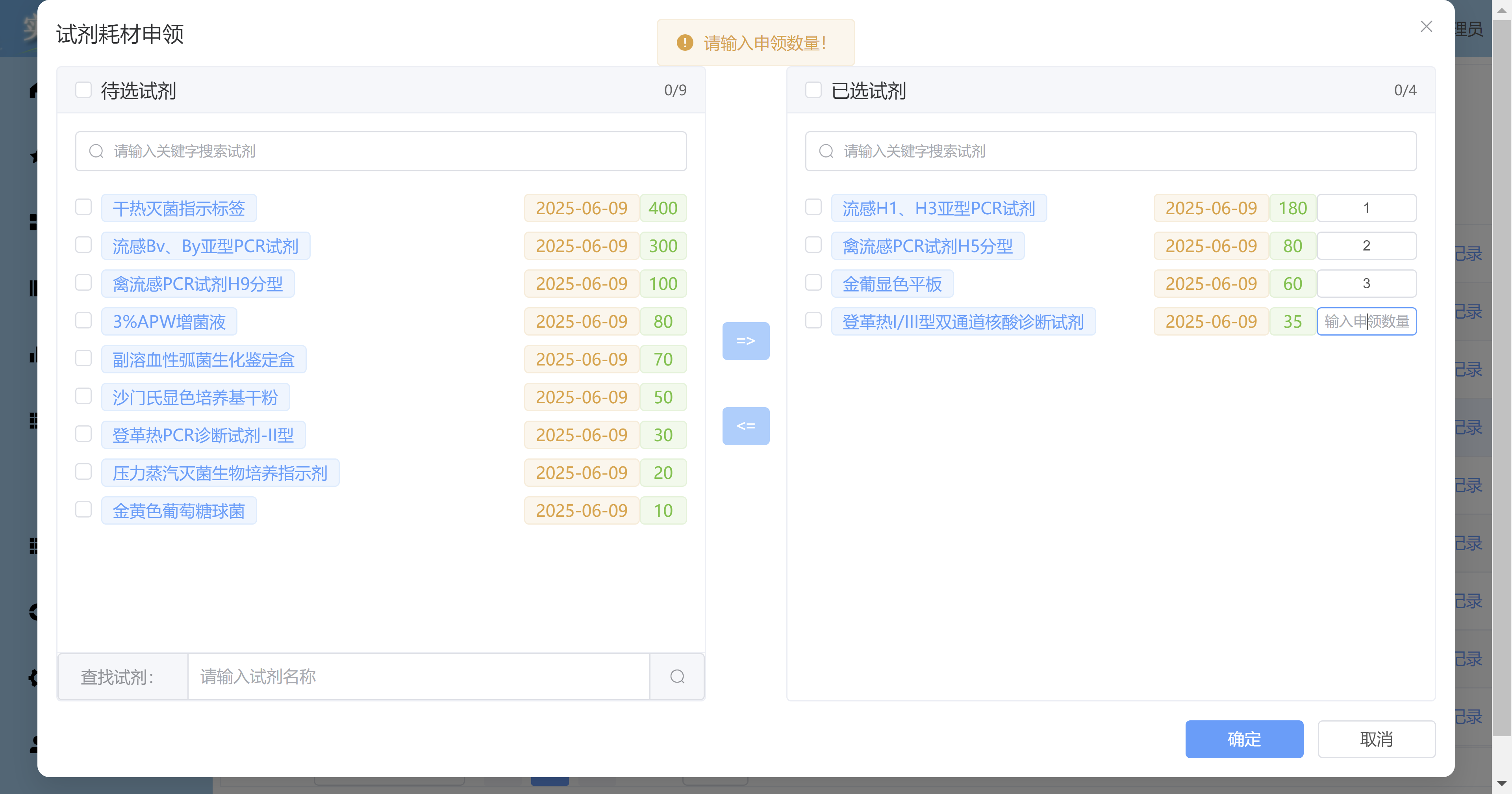

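Network Architecture

The proposed C-RNN-GAN model consists of two main parts: a generator and a discriminator.

As the figure shows:

The generator (G) produces a music sequence from random input (noise). It contains LSTM layers and fully connected layers. Its input is random noise (for example, a random vector); its output is the generated music sequence.

The discriminator (D) distinguishes generated music sequences from real ones. D is built from a Bi-LSTM (bidirectional long short-term memory network). Its input is a real or generated music sequence; its output is a probability that the input sequence is real music.

During training, G and D compete with each other and are trained alternately: the generator tries to fool the discriminator, while the discriminator tries to tell real music from generated music accurately.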
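To make the generator's data flow concrete, here is a minimal pure-Python sketch of the unrolling described above: at every step the cell receives a fresh uniform noise vector concatenated with the previous step's output. The names `unroll_generator` and `toy_step` are hypothetical, and `toy_step` is only a stand-in for the real LSTM and output layer, so this illustrates the wiring rather than the paper's network.

```python
import random


def unroll_generator(step_fn, seq_len, num_features, seed=0):
    """Unroll a generator: each step sees new noise plus the previous output."""
    rng = random.Random(seed)
    # There is no previous output at step 0, so a random one is "invented".
    prev_output = [rng.random() for _ in range(num_features)]
    outputs = []
    for _ in range(seq_len):
        noise = [rng.random() for _ in range(num_features)]
        cell_input = noise + prev_output  # concatenation of the two vectors
        prev_output = step_fn(cell_input)
        outputs.append(prev_output)
    return outputs


def toy_step(cell_input):
    """Hypothetical stand-in for LSTM + output layer: average noise and feedback."""
    half = len(cell_input) // 2
    return [0.5 * (n + p) for n, p in zip(cell_input[:half], cell_input[half:])]


song = unroll_generator(toy_step, seq_len=5, num_features=4)
```

Each element of `song` plays the role of one generated tone event with four real-valued features.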

The loss functions of G and D are:

$$L_{G}=\frac{1}{m} \sum_{i=1}^{m} \log \left(1-D\left(G\left(\boldsymbol{z}^{(i)}\right)\right)\right)$$

$$L_{D}=\frac{1}{m} \sum_{i=1}^{m}\left[-\log D\left(\boldsymbol{x}^{(i)}\right)-\log \left(1-D\left(G\left(\boldsymbol{z}^{(i)}\right)\right)\right)\right]$$

Here, $\boldsymbol{z}^{(i)}$ is a sequence of uniform random vectors in $[0,1]^{k}$, $\boldsymbol{x}^{(i)}$ is a sequence from the training data, and $k$ is the dimensionality of the data in the random sequence. The input to each cell in G is a random vector concatenated with the output of the previous cell.
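As a small numeric illustration of the two loss formulas, the sketch below evaluates them on plain lists of discriminator outputs. The function names and the sample probabilities are made up for illustration. Note that the code listing later in these notes computes the generator loss as the mean of `-log D(G(z))` instead, which is a common non-saturating variant.

```python
import math


def generator_loss(d_on_fake):
    """L_G = mean over the batch of log(1 - D(G(z)))."""
    return sum(math.log(1.0 - p) for p in d_on_fake) / len(d_on_fake)


def discriminator_loss(d_on_real, d_on_fake):
    """L_D = mean over the batch of -log D(x) - log(1 - D(G(z)))."""
    m = len(d_on_real)
    total = sum(-math.log(r) - math.log(1.0 - f)
                for r, f in zip(d_on_real, d_on_fake))
    return total / m


# Illustrative discriminator outputs (probabilities that the input is real).
d_real = [0.9, 0.8]   # D on real sequences
d_fake = [0.1, 0.3]   # D on generated sequences

loss_d = discriminator_loss(d_real, d_fake)
loss_g = generator_loss(d_fake)
```

A discriminator that separates real from generated data well drives `loss_d` toward 0, while a generator that fools it drives `loss_g` toward large negative values.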

This is essentially the same as the GAN we read about earlier, so it is not repeated here.

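Experiments

The training data consists of classical music files in MIDI format collected from the web, including well-known classical works. Each MIDI event is loaded and saved together with its duration, tone, intensity (velocity), and the time elapsed since the start of the previous tone. Tone data is represented internally as the corresponding sound frequency. All data is normalized to a tick resolution of 384 per quarter note. The dataset contains 3697 MIDI files from 160 different classical composers. Finally, the author evaluates the generated music with several metrics.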
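The four-feature event representation can be sketched as follows. The notes do not give the pitch-to-frequency formula, so the standard equal-temperament conversion with A4 = 440 Hz (MIDI note 69) is an assumption here, and the `ToneEvent` type and its field names are hypothetical.

```python
from collections import namedtuple

# Hypothetical container for the four real-valued features of one tone event.
ToneEvent = namedtuple(
    "ToneEvent", ["length_ticks", "frequency_hz", "velocity", "ticks_since_prev_start"]
)


def midi_pitch_to_frequency(pitch, a4_hz=440.0):
    """Convert a MIDI note number to Hz, assuming 12-tone equal temperament."""
    return a4_hz * 2.0 ** ((pitch - 69) / 12.0)


def make_event(pitch, length_ticks, velocity, ticks_since_prev_start):
    """Build one event, storing the tone as a frequency rather than a note number."""
    return ToneEvent(length_ticks, midi_pitch_to_frequency(pitch),
                     velocity, ticks_since_prev_start)


# A quarter-note middle C at 384 ticks per quarter note, starting with the previous tone.
event = make_event(pitch=60, length_ticks=384, velocity=80, ticks_since_prev_start=0)
```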

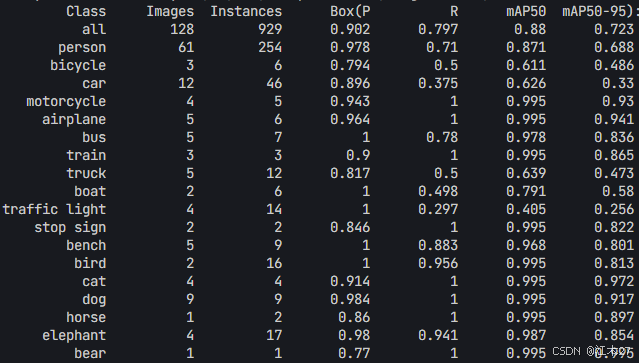

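Evaluation metrics used in the experiments:

Polyphony: measures how often two tones are played simultaneously.

Scale consistency: computed as the fraction of tones that belong to a standard scale, reporting the value for the best-matching scale.

Repetitions: measures how much repetition a sample contains, considering only the tones and their order, not their timing.

Tone span: the number of half-tone steps between the lowest and highest tones in a sample.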
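The four metrics above could be computed roughly as in this sketch. Several details are assumptions rather than the paper's exact definitions: tones are given as MIDI note numbers, "standard scale" is taken to mean the twelve major scales, polyphony is normalized as the fraction of tones sharing a start tick with another tone, and repetitions are counted over 3-tone subsequences.

```python
from collections import Counter

MAJOR_STEPS = (0, 2, 4, 5, 7, 9, 11)  # semitone offsets of a major scale


def tone_span(pitches):
    """Half-tone steps between the lowest and highest tone."""
    return max(pitches) - min(pitches)


def polyphony(start_ticks):
    """Fraction of tones whose start tick coincides with another tone's start."""
    counts = Counter(start_ticks)
    return sum(c for c in counts.values() if c > 1) / len(start_ticks)


def scale_consistency(pitches):
    """Best fraction of tones that fit any one of the 12 major scales."""
    best = 0.0
    for root in range(12):
        scale = {(root + step) % 12 for step in MAJOR_STEPS}
        in_scale = sum((p % 12) in scale for p in pitches)
        best = max(best, in_scale / len(pitches))
    return best


def three_tone_repetitions(pitches):
    """Number of 3-tone subsequences that repeat an earlier one (timing ignored)."""
    grams = Counter(tuple(pitches[i:i + 3]) for i in range(len(pitches) - 2))
    return sum(c - 1 for c in grams.values())


melody = [60, 62, 64, 60, 62, 64, 67]
starts = [0, 0, 96, 192, 192, 192]
```

For `melody`, every tone lies in C major and the subsequence 60, 62, 64 occurs twice, so the sample is fully scale-consistent with one 3-tone repetition.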

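Model parameters:

The LSTM networks in both the generator (G) and the discriminator (D) have depth 2, and each LSTM cell has 350 hidden units.

D is bidirectional, while G is unidirectional. The output of each LSTM cell in D is fed into a fully connected layer whose weights are shared across time steps, and the per-cell sigmoid outputs are then averaged.

Training uses backpropagation through time (BPTT) and mini-batch stochastic gradient descent. The learning rate is set to 0.1, and L2 regularization is applied to the weights of both G and D. The model is pretrained for 6 epochs with a squared-error loss for predicting the next event in the training sequence. The input to each LSTM cell in G is a random vector v concatenated with the output of the previous time step. v is uniformly distributed in $[0,1]^{k}$, and k is chosen as the number of features in each tone. During pretraining, the author uses a schedule for sequence lengths: training starts with short sequences sampled randomly from the training data and gradually moves to longer and longer sequences.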
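The discriminator's output stage described above can be sketched in plain Python: one shared weight vector and bias are applied to the LSTM output at every time step, each result passes through a sigmoid, and the per-step decisions are averaged. The function name and the toy inputs are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def discriminator_decision(step_outputs, weights, bias):
    """Average of per-time-step sigmoid decisions using shared weights."""
    decisions = []
    for hidden in step_outputs:  # one hidden vector per time step
        logit = sum(w * h for w, h in zip(weights, hidden)) + bias
        decisions.append(sigmoid(logit))
    return sum(decisions) / len(decisions)


# Toy example: three time steps with two hidden units each.
toy_outputs = [[1.0, 0.0], [0.5, 0.5], [-1.0, 0.0]]
probability_real = discriminator_decision(toy_outputs, weights=[1.0, -1.0], bias=0.0)
```

Averaging over time steps means every position in the sequence contributes to the final real-versus-generated probability, rather than only the last LSTM output.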
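The sequence-length schedule can be sketched as below. The rule `min((epoch + 1) * 4, ceiling)` and the defaults (6 pretraining epochs, a ceiling of 100 events) mirror the flags and the `main` function in the code listing later in these notes; the helper name `songlength_for_epoch` is hypothetical, and keying the schedule on the current epoch is a simplification.

```python
def songlength_for_epoch(epoch, pretraining_epochs=6, ceiling=100):
    """Number of events per training sequence at a given epoch."""
    if epoch < pretraining_epochs:
        # Short sequences first, growing by 4 events per pretraining epoch.
        return int(min((epoch + 1) * 4, ceiling))
    # After pretraining, train on full-length sequences.
    return ceiling


schedule = [songlength_for_epoch(epoch) for epoch in range(8)]
```

With the defaults, the sequence length grows from 4 to 24 events over the six pretraining epochs and then jumps to the full 100 events.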

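Results:

With C-RNN-GAN, the complexity of the generated music increases as training progresses. The number of unique tones shows a weak upward trend, and scale consistency stabilizes after 10 to 15 epochs.

3-tone repetitions trend upward during the first 25 epochs and then stay at a fairly low level, which correlates with the number of tones used.

The baseline (a recurrent network similar to the generator) does not reach the same level of variation as C-RNN-GAN. Its number of unique tones is consistently much lower. Its scale consistency is similar to that of C-RNN-GAN, but its tone span follows the number of unique tones more closely than in C-RNN-GAN, which suggests that the baseline uses tones with less variability.

C-RNN-GAN-3 (the 3 means three tone outputs per LSTM cell) obtains a higher polyphony score than both C-RNN-GAN and the baseline.

After passing through a state with many zero-valued outputs around epochs 50 to 55, it reaches higher values for tone span, number of unique tones, intensity span, and 3-tone repetitions.

Real music has an intensity span similar to that of the generated music. Its scale consistency is slightly higher but varies more, and its polyphony score is similar to that of C-RNN-GAN-3. Its 3-tone repetitions are much higher, but they are hard to compare because the songs differ in length (the value was normalized by dividing by the ratio between the length of the real music and the length of the generated music).

The results show that adversarial training helps the model learn music with more variation, a wider tone span, and a larger intensity span. Letting each LSTM cell output more than one tone helps to generate music with a higher polyphony score. Although the generated music is polyphonic, C-RNN-GAN scores low on the polyphony metric used in the evaluation, whereas the model that lets each LSTM cell output up to three tones at once (C-RNN-GAN-3) scores better. Timing differs considerably between samples, but is roughly the same within a single piece.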

Code: https://github.com/olofmogren/c-rnn-gan

"""

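Model parameters:

learning_rate - the initial value of the learning rate

max_grad_norm - the maximum permissible norm of the gradient

num_layers - the number of LSTM layers

songlength - the number of unrolled steps of the LSTM

hidden_size - the number of LSTM units

epochs_before_decay - the number of epochs trained with the initial learning rate

max_epoch - the total number of training epochs

keep_prob - the probability of keeping weights in the dropout layer

lr_decay - the learning rate decay for each epoch after "epochs_before_decay"

batch_size - the batch size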

"""

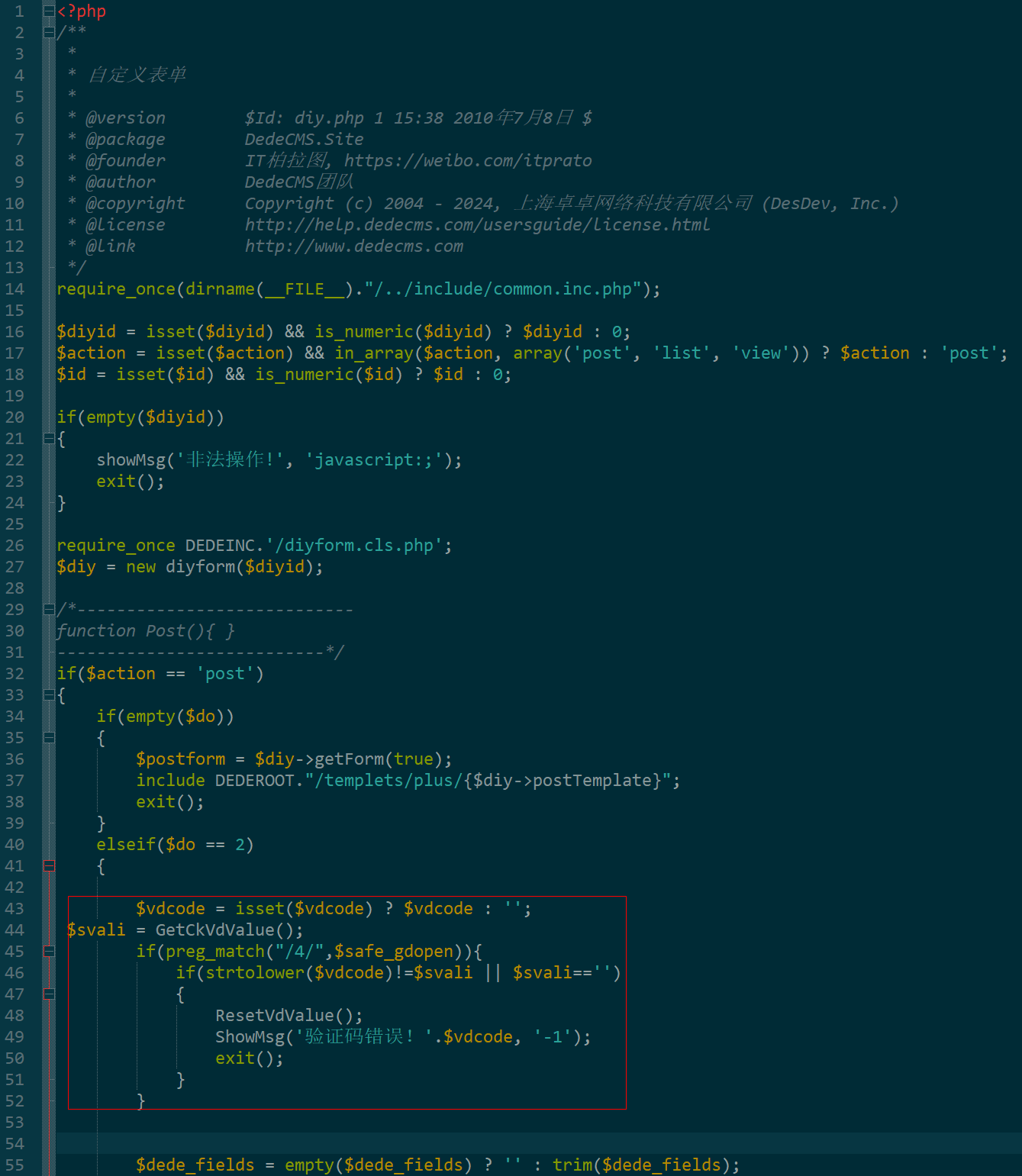

from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import time, datetime, os, sys
import pickle as pkl
from subprocess import call, Popen

import numpy as np
import tensorflow as tf
from tensorflow.python.client import timeline

import music_data_utils
from midi_statistics import get_all_stats

flags = tf.flags
logging = tf.logging

flags.DEFINE_string("datadir", None, "Directory to save and load MIDI music files.")
flags.DEFINE_string("traindir", None, "Directory to save checkpoints and gnuplot files.")
flags.DEFINE_integer("epochs_per_checkpoint", 2, "Number of training epochs between checkpoints.")
flags.DEFINE_boolean("log_device_placement", False, "Output info on device placement.")
flags.DEFINE_string("call_after", None, "Command to call after exit.")
flags.DEFINE_integer("exit_after", 1440, "Exit after running for this many minutes.")
flags.DEFINE_integer("select_validation_percentage", None, "Random percentage of the data to select as validation set.")
flags.DEFINE_integer("select_test_percentage", None, "Random percentage of the data to select as test set.")
flags.DEFINE_boolean("sample", False, "Sample output from the model. Assumes training is already done. Saves sample output to file.")
flags.DEFINE_integer("works_per_composer", None, "Limit the number of works loaded per composer.")
flags.DEFINE_boolean("disable_feed_previous", False, "In the generator, feed the output of the previous cell to the input of the next.")
flags.DEFINE_float("init_scale", 0.05, "Initial scale of the weights.")
flags.DEFINE_float("learning_rate", 0.1, "Learning rate.")
flags.DEFINE_float("d_lr_factor", 0.5, "Learning rate decay factor.")
flags.DEFINE_float("max_grad_norm", 5.0, "Maximum permissible norm of the gradient.")
flags.DEFINE_float("keep_prob", 0.5, "Keep probability. 1 means no dropout.")
flags.DEFINE_float("lr_decay", 1.0, "Learning rate decay for each epoch after 'epochs_before_decay'.")
flags.DEFINE_integer("num_layers_g", 2, "Number of stacked recurrent cells in the generator G.")
flags.DEFINE_integer("num_layers_d", 2, "Number of stacked recurrent cells in the discriminator D.")
flags.DEFINE_integer("songlength", 100, "Limit song inputs to this number of events.")
flags.DEFINE_integer("meta_layer_size", 200, "Hidden layer size of the meta information module.")
flags.DEFINE_integer("hidden_size_g", 100, "Hidden size of the recurrent part of the generator G.")
flags.DEFINE_integer("hidden_size_d", 100, "Hidden size of the recurrent part of the discriminator D. Default: same as G.")
flags.DEFINE_integer("epochs_before_decay", 60, "Number of epochs before starting to decay.")
flags.DEFINE_integer("max_epoch", 500, "Total number of epochs before stopping training.")
flags.DEFINE_integer("batch_size", 20, "Batch size.")
flags.DEFINE_integer("biscale_slow_layer_ticks", 8, "Biscale slow layer ticks.")
flags.DEFINE_boolean("multiscale", False, "Multiscale RNN.")
flags.DEFINE_integer("pretraining_epochs", 6, "Number of epochs of language-model-style pretraining.")
flags.DEFINE_boolean("pretraining_d", False, "Train D during pretraining.")
flags.DEFINE_boolean("initialize_d", False, "Initialize the variables of D, whether or not trained versions exist in the checkpoint.")
flags.DEFINE_boolean("ignore_saved_args", False, "Tell the program to ignore saved arguments and use the command-line arguments instead.")
flags.DEFINE_boolean("pace_events", False, "When parsing input data, insert a dummy event at each quarter note position that has no tone.")
flags.DEFINE_boolean("minibatch_d", False, "Add kernel features for minibatch diversity.")
flags.DEFINE_boolean("unidirectional_d", False, "Use a unidirectional RNN instead of a bidirectional RNN for D.")
flags.DEFINE_boolean("profiling", False, "Profiling. Writes a timeline.json file in the plots directory.")
flags.DEFINE_boolean("float16", False, "Use the float16 data type; otherwise, use float32.")
flags.DEFINE_boolean("adam", False, "Use the Adam optimizer.")
flags.DEFINE_boolean("feature_matching", False, "Feature matching objective for the generator G.")
flags.DEFINE_boolean("disable_l2_regularizer", False, "L2 regularization on weights.")
flags.DEFINE_float("reg_scale", 1.0, "L2 regularization scale.")
flags.DEFINE_boolean("synthetic_chords", False, "Train on synthetically generated chords (three tones per event).")
flags.DEFINE_integer("tones_per_cell", 1, "Maximum number of tones output per RNN cell.")
flags.DEFINE_string("composer", None, "Specify one composer, and train the model only on that composer's works.")
flags.DEFINE_boolean("generate_meta", False, "Generate the composer and genre as part of the output.")
flags.DEFINE_float("random_input_scale", 1.0, "Scale of the random inputs (1 means the same size as the generated features).")

flags.DEFINE_boolean("end_classification", False, "Classify only at the ends in D. Otherwise, classify at every time step and take the average.")

FLAGS = flags.FLAGS

model_layout_flags = ['num_layers_g', 'num_layers_d', 'meta_layer_size', 'hidden_size_g', 'hidden_size_d', 'biscale_slow_layer_ticks', 'multiscale', 'multiscale', 'disable_feed_previous', 'pace_events', 'minibatch_d', 'unidirectional_d', 'feature_matching', 'composer']

def make_rnn_cell(rnn_layer_sizes,
                  dropout_keep_prob=1.0,
                  attn_length=0,
                  base_cell=tf.contrib.rnn.BasicLSTMCell,
                  state_is_tuple=True,
                  reuse=False):
    """
    Creates an RNN cell from the given hyperparameters.

    Args:
      rnn_layer_sizes: A list of integers, the size of each RNN layer.
      dropout_keep_prob: A float, the probability of keeping the output of any given sub-cell.
      attn_length: The size of the attention vector.
      base_cell: The base tf.contrib.rnn.RNNCell to use for sub-cells.
      state_is_tuple: A boolean specifying whether to use a tuple of the hidden matrix
          and the cell matrix as the state, instead of a concatenated matrix.

    Returns:
      A tf.contrib.rnn.MultiRNNCell based on the given hyperparameters.
    """
    cells = []
    for num_units in rnn_layer_sizes:
        cell = base_cell(num_units, state_is_tuple=state_is_tuple, reuse=reuse)
        cell = tf.contrib.rnn.DropoutWrapper(cell, output_keep_prob=dropout_keep_prob)
        cells.append(cell)
    cell = tf.contrib.rnn.MultiRNNCell(cells, state_is_tuple=state_is_tuple)
    if attn_length:
        cell = tf.contrib.rnn.AttentionCellWrapper(cell, attn_length, state_is_tuple=state_is_tuple, reuse=reuse)
    return cell

def restore_flags(save_if_none_found=True):
    if FLAGS.traindir:
        saved_args_dir = os.path.join(FLAGS.traindir, 'saved_args')
        if save_if_none_found:
            try: os.makedirs(saved_args_dir)
            except: pass
        for arg in FLAGS.__flags:
            if arg not in model_layout_flags:
                continue
            if FLAGS.ignore_saved_args and os.path.exists(os.path.join(saved_args_dir, arg+'.pkl')):
                print('{:%Y-%m-%d %H:%M:%S}: saved_args: Found {} setting from saved state, but using CLI args ({}) and saving (--ignore_saved_args).'.format(datetime.datetime.today(), arg, getattr(FLAGS, arg)))
            elif os.path.exists(os.path.join(saved_args_dir, arg+'.pkl')):
                with open(os.path.join(saved_args_dir, arg+'.pkl'), 'rb') as f:
                    setattr(FLAGS, arg, pkl.load(f))
                    print('{:%Y-%m-%d %H:%M:%S}: saved_args: {} from saved state ({}), ignoring CLI args.'.format(datetime.datetime.today(), arg, getattr(FLAGS, arg)))
            elif save_if_none_found:
                print('{:%Y-%m-%d %H:%M:%S}: saved_args: Found no {} setting from saved state, using CLI args ({}) and saving.'.format(datetime.datetime.today(), arg, getattr(FLAGS, arg)))
                with open(os.path.join(saved_args_dir, arg+'.pkl'), 'wb') as f:
                    print(getattr(FLAGS, arg),arg)
                    pkl.dump(getattr(FLAGS, arg), f)
            else:
                print('{:%Y-%m-%d %H:%M:%S}: saved_args: Found no {} setting from saved state, using CLI args ({}) but not saving.'.format(datetime.datetime.today(), arg, getattr(FLAGS, arg)))

# Define the data type

def data_type():
    return tf.float16 if FLAGS.float16 else tf.float32
    #return tf.float16

def my_reduce_mean(what_to_take_mean_over):
    return tf.reshape(what_to_take_mean_over, shape=[-1])[0]
    denom = 1.0
    #print(what_to_take_mean_over.get_shape())
    for d in what_to_take_mean_over.get_shape():
        #print(d)
        if type(d) == tf.Dimension:
            denom = denom*d.value
        else:
            denom = denom*d
    return tf.reduce_sum(what_to_take_mean_over)/denom

def linear(inp, output_dim, scope=None, stddev=1.0, reuse_scope=False):
    norm = tf.random_normal_initializer(stddev=stddev, dtype=data_type())
    const = tf.constant_initializer(0.0, dtype=data_type())
    with tf.variable_scope(scope or 'linear') as scope:
        scope.set_regularizer(tf.contrib.layers.l2_regularizer(scale=FLAGS.reg_scale))
        if reuse_scope:
            scope.reuse_variables()
        #print('inp.get_shape(): {}'.format(inp.get_shape()))
        w = tf.get_variable('w', [inp.get_shape()[1], output_dim], initializer=norm, dtype=data_type())
        b = tf.get_variable('b', [output_dim], initializer=const, dtype=data_type())
    return tf.matmul(inp, w) + b

def minibatch(inp, num_kernels=25, kernel_dim=10, scope=None, msg='', reuse_scope=False):
    with tf.variable_scope(scope or 'minibatch_d') as scope:
        scope.set_regularizer(tf.contrib.layers.l2_regularizer(scale=FLAGS.reg_scale))
        if reuse_scope:
            scope.reuse_variables()
        inp = tf.Print(inp, [inp],
                       '{} inp = '.format(msg), summarize=20, first_n=20)
        x = tf.sigmoid(linear(inp, num_kernels * kernel_dim, scope))
        activation = tf.reshape(x, (-1, num_kernels, kernel_dim))
        activation = tf.Print(activation, [activation],
                              '{} activation = '.format(msg), summarize=20, first_n=20)
        diffs = tf.expand_dims(activation, 3) - \
                tf.expand_dims(tf.transpose(activation, [1, 2, 0]), 0)
        diffs = tf.Print(diffs, [diffs],
                         '{} diffs = '.format(msg), summarize=20, first_n=20)
        abs_diffs = tf.reduce_sum(tf.abs(diffs), 2)
        abs_diffs = tf.Print(abs_diffs, [abs_diffs],
                             '{} abs_diffs = '.format(msg), summarize=20, first_n=20)
        minibatch_features = tf.reduce_sum(tf.exp(-abs_diffs), 2)
        minibatch_features = tf.Print(minibatch_features, [tf.reduce_min(minibatch_features), tf.reduce_max(minibatch_features)],
                                      '{} minibatch_features (min,max) = '.format(msg), summarize=20, first_n=20)
    return tf.concat( [inp, minibatch_features],1)

class RNNGAN(object):
    """Defines the RNN-GAN model."""

    def __init__(self, is_training, num_song_features=None, num_meta_features=None):
        batch_size = FLAGS.batch_size
        self.batch_size = batch_size
        songlength = FLAGS.songlength
        self.songlength = songlength
        #self.global_step= tf.Variable(0, trainable=False)
        print('songlength: {}'.format(self.songlength))
        self._input_songdata = tf.placeholder(shape=[batch_size, songlength, num_song_features], dtype=data_type())
        self._input_metadata = tf.placeholder(shape=[batch_size, num_meta_features], dtype=data_type())
        #_split = tf.split(self._input_songdata,songlength,1)[0]
        print("self._input_songdata",self._input_songdata, 'songlength',songlength)
        #print(tf.squeeze(_split,[1]))
        songdata_inputs = [tf.squeeze(input_, [1])
                           for input_ in tf.split(self._input_songdata,songlength,1)]

        with tf.variable_scope('G') as scope:
            scope.set_regularizer(tf.contrib.layers.l2_regularizer(scale=FLAGS.reg_scale))
            #lstm_cell = tf.nn.rnn_cell.BasicLSTMCell(FLAGS.hidden_size_g, forget_bias=1.0, state_is_tuple=True)
            if is_training and FLAGS.keep_prob < 1:
                #lstm_cell = tf.nn.rnn_cell.DropoutWrapper(
                #    lstm_cell, output_keep_prob=FLAGS.keep_prob)
                cell = make_rnn_cell([FLAGS.hidden_size_g]*FLAGS.num_layers_g,dropout_keep_prob=FLAGS.keep_prob)
            else:
                cell = make_rnn_cell([FLAGS.hidden_size_g]*FLAGS.num_layers_g)
            #cell = tf.nn.rnn_cell.MultiRNNCell([lstm_cell for _ in range( FLAGS.num_layers_g)], state_is_tuple=True)
            self._initial_state = cell.zero_state(batch_size, data_type())

            # TODO: (possibly temporarily) disabling meta info
            if FLAGS.generate_meta:
                metainputs = tf.random_uniform(shape=[batch_size, int(FLAGS.random_input_scale*num_meta_features)], minval=0.0, maxval=1.0)
                meta_g = tf.nn.relu(linear(metainputs, FLAGS.meta_layer_size, scope='meta_layer', reuse_scope=False))
                meta_softmax_w = tf.get_variable("meta_softmax_w", [FLAGS.meta_layer_size, num_meta_features])
                meta_softmax_b = tf.get_variable("meta_softmax_b", [num_meta_features])
                meta_logits = tf.nn.xw_plus_b(meta_g, meta_softmax_w, meta_softmax_b)
                meta_probs = tf.nn.softmax(meta_logits)

            random_rnninputs = tf.random_uniform(shape=[batch_size, songlength, int(FLAGS.random_input_scale*num_song_features)], minval=0.0, maxval=1.0, dtype=data_type())
            random_rnninputs = [tf.squeeze(input_, [1]) for input_ in tf.split( random_rnninputs,songlength,1)]

            # REAL GENERATOR:
            state = self._initial_state
            # as we feed the output as the input to the next, we 'invent' the initial 'output'.
            generated_point = tf.random_uniform(shape=[batch_size, num_song_features], minval=0.0, maxval=1.0, dtype=data_type())
            outputs = []
            self._generated_features = []
            for i,input_ in enumerate(random_rnninputs):
                if i > 0: scope.reuse_variables()
                concat_values = [input_]
                if not FLAGS.disable_feed_previous:
                    concat_values.append(generated_point)
                if FLAGS.generate_meta:
                    concat_values.append(meta_probs)
                if len(concat_values):
                    input_ = tf.concat(axis=1, values=concat_values)
                input_ = tf.nn.relu(linear(input_, FLAGS.hidden_size_g,
                                           scope='input_layer', reuse_scope=(i!=0)))
                output, state = cell(input_, state)
                outputs.append(output)
                #generated_point = tf.nn.relu(linear(output, num_song_features, scope='output_layer', reuse_scope=(i!=0)))
                generated_point = linear(output, num_song_features, scope='output_layer', reuse_scope=(i!=0))
                self._generated_features.append(generated_point)

            # PRETRAINING GENERATOR, will feed inputs, not generated outputs:
            scope.reuse_variables()
            # as we feed the output as the input to the next, we 'invent' the initial 'output'.
            prev_target = tf.random_uniform(shape=[batch_size, num_song_features], minval=0.0, maxval=1.0, dtype=data_type())
            outputs = []
            self._generated_features_pretraining = []
            for i,input_ in enumerate(random_rnninputs):
                concat_values = [input_]
                if not FLAGS.disable_feed_previous:
                    concat_values.append(prev_target)
                if FLAGS.generate_meta:
                    concat_values.append(self._input_metadata)
                if len(concat_values):
                    input_ = tf.concat(axis=1, values=concat_values)
                input_ = tf.nn.relu(linear(input_, FLAGS.hidden_size_g, scope='input_layer', reuse_scope=(i!=0)))
                output, state = cell(input_, state)
                outputs.append(output)
                #generated_point = tf.nn.relu(linear(output, num_song_features, scope='output_layer', reuse_scope=(i!=0)))
                generated_point = linear(output, num_song_features, scope='output_layer', reuse_scope=(i!=0))
                self._generated_features_pretraining.append(generated_point)
                prev_target = songdata_inputs[i]

            #outputs, state = tf.nn.rnn(cell, transformed, initial_state=self._initial_state)
            #self._generated_features = [tf.nn.relu(linear(output, num_song_features, scope='output_layer', reuse_scope=(i!=0))) for i,output in enumerate(outputs)]
            self._final_state = state

        # These are used both for pretraining and for D/G training further down.
        self._lr = tf.Variable(FLAGS.learning_rate, trainable=False, dtype=data_type())
        self.g_params = [v for v in tf.trainable_variables() if v.name.startswith('model/G/')]
        if FLAGS.adam:
            g_optimizer = tf.train.AdamOptimizer(self._lr)
        else:
            g_optimizer = tf.train.GradientDescentOptimizer(self._lr)

        reg_losses = tf.get_collection(tf.GraphKeys.REGULARIZATION_LOSSES)
        reg_constant = 0.1 # Choose an appropriate one.
        reg_loss = reg_constant * sum(reg_losses)
        reg_loss = tf.Print(reg_loss, reg_losses,
                            'reg_losses = ', summarize=20, first_n=20)

        # Pretraining
        print(tf.transpose(tf.stack(self._generated_features_pretraining), perm=[1, 0, 2]).get_shape())
        print(self._input_songdata.get_shape())
        self.rnn_pretraining_loss = tf.reduce_mean(tf.squared_difference(x=tf.transpose(tf.stack(self._generated_features_pretraining), perm=[1, 0, 2]), y=self._input_songdata))
        if not FLAGS.disable_l2_regularizer:
            self.rnn_pretraining_loss = self.rnn_pretraining_loss+reg_loss

        pretraining_grads, _ = tf.clip_by_global_norm(tf.gradients(self.rnn_pretraining_loss, self.g_params), FLAGS.max_grad_norm)
        self.opt_pretraining = g_optimizer.apply_gradients(zip(pretraining_grads, self.g_params))

        # The discriminator tries to tell the difference between samples from the
        # true data distribution (self.x) and the generated samples (self.z).
        #
        # Here we create two copies of the discriminator network (that share parameters),
        # as you cannot use the same network with different inputs in TensorFlow.
        with tf.variable_scope('D') as scope:
            scope.set_regularizer(tf.contrib.layers.l2_regularizer(scale=FLAGS.reg_scale))
            # Make list of tensors. One per step in recurrence.
            # Each tensor is batchsize*numfeatures.
            # TODO: (possibly temporarily) disabling meta info
            print('self._input_songdata shape {}'.format(self._input_songdata.get_shape()))
            print('generated data shape {}'.format(self._generated_features[0].get_shape()))
            # TODO: (possibly temporarily) disabling meta info
            if FLAGS.generate_meta:
                songdata_inputs = [tf.concat([self._input_metadata, songdata_input],1) for songdata_input in songdata_inputs]
            #print(songdata_inputs[0])
            #print(songdata_inputs[0])
            #print('metadata inputs shape {}'(self._input_metadata.get_shape()))
            #print('generated metadata shape {}'.format(meta_probs.get_shape()))
            self.real_d,self.real_d_features = self.discriminator(songdata_inputs, is_training, msg='real')
            scope.reuse_variables()
            # TODO: (possibly temporarily) disabling meta info
            if FLAGS.generate_meta:
                generated_data = [tf.concat([meta_probs, songdata_input],1) for songdata_input in self._generated_features]
            else:
                generated_data = self._generated_features
            if songdata_inputs[0].get_shape() != generated_data[0].get_shape():
                print('songdata_inputs shape {} != generated data shape {}'.format(songdata_inputs[0].get_shape(), generated_data[0].get_shape()))
            self.generated_d,self.generated_d_features = self.discriminator(generated_data, is_training, msg='generated')

        # Define the loss for discriminator and generator networks (see the original
        # paper for details), and create optimizers for both
        self.d_loss = tf.reduce_mean(-tf.log(tf.clip_by_value(self.real_d, 1e-1000000, 1.0)) \
                                     -tf.log(1 - tf.clip_by_value(self.generated_d, 0.0, 1.0-1e-1000000)))
        self.g_loss_feature_matching = tf.reduce_sum(tf.squared_difference(self.real_d_features, self.generated_d_features))
        self.g_loss = tf.reduce_mean(-tf.log(tf.clip_by_value(self.generated_d, 1e-1000000, 1.0)))

        if not FLAGS.disable_l2_regularizer:
            self.d_loss = self.d_loss+reg_loss
            self.g_loss_feature_matching = self.g_loss_feature_matching+reg_loss
            self.g_loss = self.g_loss+reg_loss
        self.d_params = [v for v in tf.trainable_variables() if v.name.startswith('model/D/')]

        if not is_training:
            return

        d_optimizer = tf.train.GradientDescentOptimizer(self._lr*FLAGS.d_lr_factor)
        d_grads, _ = tf.clip_by_global_norm(tf.gradients(self.d_loss, self.d_params),
                                            FLAGS.max_grad_norm)
        self.opt_d = d_optimizer.apply_gradients(zip(d_grads, self.d_params))
        if FLAGS.feature_matching:
            g_grads, _ = tf.clip_by_global_norm(tf.gradients(self.g_loss_feature_matching,
                                                             self.g_params),
                                                FLAGS.max_grad_norm)
        else:
            g_grads, _ = tf.clip_by_global_norm(tf.gradients(self.g_loss, self.g_params),
                                                FLAGS.max_grad_norm)
        self.opt_g = g_optimizer.apply_gradients(zip(g_grads, self.g_params))

        self._new_lr = tf.placeholder(shape=[], name="new_learning_rate", dtype=data_type())
        self._lr_update = tf.assign(self._lr, self._new_lr)

    def discriminator(self, inputs, is_training, msg=''):
        # RNN discriminator:
        #for i in xrange(len(inputs)):
        #  print('shape inputs[{}] {}'.format(i, inputs[i].get_shape()))
        #inputs[0] = tf.Print(inputs[0], [inputs[0]],
        #        '{} inputs[0] = '.format(msg), summarize=20, first_n=20)
        if is_training and FLAGS.keep_prob < 1:
            inputs = [tf.nn.dropout(input_, FLAGS.keep_prob) for input_ in inputs]

        #lstm_cell = tf.nn.rnn_cell.BasicLSTMCell(FLAGS.hidden_size_d, forget_bias=1.0, state_is_tuple=True)
        if is_training and FLAGS.keep_prob < 1:
            #lstm_cell = tf.nn.rnn_cell.DropoutWrapper(
            #lstm_cell, output_keep_prob=FLAGS.keep_prob)
            cell_fw = make_rnn_cell([FLAGS.hidden_size_d]* FLAGS.num_layers_d,dropout_keep_prob=FLAGS.keep_prob)
            cell_bw = make_rnn_cell([FLAGS.hidden_size_d]* FLAGS.num_layers_d,dropout_keep_prob=FLAGS.keep_prob)
        else:
            cell_fw = make_rnn_cell([FLAGS.hidden_size_d]* FLAGS.num_layers_d)
            cell_bw = make_rnn_cell([FLAGS.hidden_size_d]* FLAGS.num_layers_d)
        #cell_fw = tf.nn.rnn_cell.MultiRNNCell([lstm_cell for _ in range( FLAGS.num_layers_d)], state_is_tuple=True)
        self._initial_state_fw = cell_fw.zero_state(self.batch_size, data_type())
        if not FLAGS.unidirectional_d:
            #lstm_cell = tf.nn.rnn_cell.BasicLSTMCell(FLAGS.hidden_size_g, forget_bias=1.0, state_is_tuple=True)
            #if is_training and FLAGS.keep_prob < 1:
            #  lstm_cell = tf.nn.rnn_cell.DropoutWrapper(
            #      lstm_cell, output_keep_prob=FLAGS.keep_prob)
            #cell_bw = tf.nn.rnn_cell.MultiRNNCell([lstm_cell for _ in range( FLAGS.num_layers_d)], state_is_tuple=True)
            self._initial_state_bw = cell_bw.zero_state(self.batch_size, data_type())
            print("cell_fw",cell_fw.output_size)
            #print("cell_bw",cell_bw.output_size)
            #print("inputs",inputs)
            #print("initial_state_fw",self._initial_state_fw)
            #print("initial_state_bw",self._initial_state_bw)
            outputs, state_fw, state_bw = tf.contrib.rnn.static_bidirectional_rnn(cell_fw, cell_bw, inputs, initial_state_fw=self._initial_state_fw, initial_state_bw=self._initial_state_bw)
            #outputs[0] = tf.Print(outputs[0], [outputs[0]],
            #        '{} outputs[0] = '.format(msg), summarize=20, first_n=20)
            #state = tf.concat(state_fw, state_bw)
            #endoutput = tf.concat(concat_dim=1, values=[outputs[0],outputs[-1]])
        else:
            outputs, state = tf.nn.rnn(cell_fw, inputs, initial_state=self._initial_state_fw)
            #state = self._initial_state
            #outputs, state = cell_fw(tf.convert_to_tensor (inputs),state)
            #endoutput = outputs[-1]

        if FLAGS.minibatch_d:
            outputs = [minibatch(tf.reshape(outp, shape=[FLAGS.batch_size, -1]), msg=msg, reuse_scope=(i!=0)) for i,outp in enumerate(outputs)]
        # decision = tf.sigmoid(linear(outputs[-1], 1, 'decision'))
        if FLAGS.end_classification:
            decisions = [tf.sigmoid(linear(output, 1, 'decision', reuse_scope=(i!=0))) for i,output in enumerate([outputs[0], outputs[-1]])]
            decisions = tf.stack(decisions)
            decisions = tf.transpose(decisions, perm=[1,0,2])
            print('shape, decisions: {}'.format(decisions.get_shape()))
        else:
            decisions = [tf.sigmoid(linear(output, 1, 'decision', reuse_scope=(i!=0))) for i,output in enumerate(outputs)]
            decisions = tf.stack(decisions)
            decisions = tf.transpose(decisions, perm=[1,0,2])
            print('shape, decisions: {}'.format(decisions.get_shape()))
        decision = tf.reduce_mean(decisions, reduction_indices=[1,2])
        decision = tf.Print(decision, [decision],
                            '{} decision = '.format(msg), summarize=20, first_n=20)
        return (decision,tf.transpose(tf.stack(outputs), perm=[1,0,2]))

    def assign_lr(self, session, lr_value):
        session.run(self._lr_update, feed_dict={self._new_lr: lr_value})

    @property
    def generated_features(self):
        return self._generated_features

    @property
    def input_songdata(self):
        return self._input_songdata

    @property
    def input_metadata(self):
        return self._input_metadata

    @property
    def targets(self):
        return self._targets

    @property
    def initial_state(self):
        return self._initial_state

    @property
    def cost(self):
        return self._cost

    @property
    def final_state(self):
        return self._final_state

    @property
    def lr(self):
        return self._lr

    @property
    def train_op(self):
        return self._train_op

def run_epoch(session, model, loader, datasetlabel, eval_op_g, eval_op_d, pretraining=False, verbose=False, run_metadata=None, pretraining_d=False):
    """Runs the model on the given data."""
    #epoch_size = ((len(data) // model.batch_size) - 1) // model.songlength
    epoch_start_time = time.time()
    g_loss, d_loss = 10.0, 10.0
    g_losses, d_losses = 0.0, 0.0
    iters = 0
    #state = session.run(model.initial_state)
    time_before_graph = None
    time_after_graph = None
    times_in_graph = []
    times_in_python = []
    #times_in_batchreading = []
    loader.rewind(part=datasetlabel)
    [batch_meta, batch_song] = loader.get_batch(model.batch_size, model.songlength, part=datasetlabel)
    run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
    while batch_meta is not None and batch_song is not None:
        op_g = eval_op_g
        op_d = eval_op_d
        if datasetlabel == 'train' and not pretraining: # and not FLAGS.feature_matching:
            if d_loss == 0.0 and g_loss == 0.0:
                print('Both G and D train loss are zero. Exiting.')
                break
                #saver.save(session, checkpoint_path, global_step=m.global_step)
                #break
            elif d_loss == 0.0:
                #print('D train loss is zero. Freezing optimization. G loss: {:.3f}'.format(g_loss))
                op_g = tf.no_op()
            elif g_loss == 0.0:
                #print('G train loss is zero. Freezing optimization. D loss: {:.3f}'.format(d_loss))
                op_d = tf.no_op()
            elif g_loss < 2.0 or d_loss < 2.0:
                if g_loss*.7 > d_loss:
                    #print('G train loss is {:.3f}, D train loss is {:.3f}. Freezing optimization of D'.format(g_loss, d_loss))
                    op_g = tf.no_op()
                    #elif d_loss*.7 > g_loss:
                    #print('G train loss is {:.3f}, D train loss is {:.3f}. Freezing optimization of G'.format(g_loss, d_loss))
                    op_d = tf.no_op()
        #fetches = [model.cost, model.final_state, eval_op]
        if pretraining:
            if pretraining_d:
                fetches = [model.rnn_pretraining_loss, model.d_loss, op_g, op_d]
            else:
                fetches = [model.rnn_pretraining_loss, tf.no_op(), op_g, op_d]
        else:
            fetches = [model.g_loss, model.d_loss, op_g, op_d]
        feed_dict = {}
        feed_dict[model.input_songdata.name] = batch_song
        feed_dict[model.input_metadata.name] = batch_meta
        #print(batch_song)
        #print(batch_song.shape)
        #for i, (c, h) in enumerate(model.initial_state):
        #  feed_dict[c] = state[i].c
        #  feed_dict[h] = state[i].h
        #cost, state, _ = session.run(fetches, feed_dict)
        time_before_graph = time.time()
        if iters > 0:
            times_in_python.append(time_before_graph-time_after_graph)
        if run_metadata:
            g_loss, d_loss, _, _ = session.run(fetches, feed_dict, options=run_options, run_metadata=run_metadata)
        else:
            g_loss, d_loss, _, _ = session.run(fetches, feed_dict)
        time_after_graph = time.time()
        if iters > 0:
            times_in_graph.append(time_after_graph-time_before_graph)
        g_losses += g_loss
        if not pretraining:
            d_losses += d_loss
        iters += 1

        if verbose and iters % 10 == 9:
            songs_per_sec = float(iters * model.batch_size)/float(time.time() - epoch_start_time)
            avg_time_in_graph = float(sum(times_in_graph))/float(len(times_in_graph))
            avg_time_in_python = float(sum(times_in_python))/float(len(times_in_python))
            #avg_time_batchreading = float(sum(times_in_batchreading))/float(len(times_in_batchreading))
            if pretraining:
                print("{}: {} (pretraining) batch loss: G: {:.3f}, avg loss: G: {:.3f}, speed: {:.1f} songs/s, avg in graph: {:.1f}, avg in python: {:.1f}.".format(datasetlabel, iters, g_loss, float(g_losses)/float(iters), songs_per_sec, avg_time_in_graph, avg_time_in_python))
            else:
                print("{}: {} batch loss: G: {:.3f}, D: {:.3f}, avg loss: G: {:.3f}, D: {:.3f} speed: {:.1f} songs/s, avg in graph: {:.1f}, avg in python: {:.1f}.".format(datasetlabel, iters, g_loss, d_loss, float(g_losses)/float(iters), float(d_losses)/float(iters),songs_per_sec, avg_time_in_graph, avg_time_in_python))
        #batchtime = time.time()
        [batch_meta, batch_song] = loader.get_batch(model.batch_size, model.songlength, part=datasetlabel)
        #times_in_batchreading.append(time.time()-batchtime)

    if iters == 0:
        return (None,None)

    g_mean_loss = g_losses/iters
    if pretraining and not pretraining_d:
        d_mean_loss = None
    else:
        d_mean_loss = d_losses/iters
    return (g_mean_loss, d_mean_loss)

def sample(session, model, batch=False):
    """Samples from the generative model."""
    #state = session.run(model.initial_state)
    fetches = [model.generated_features]
    feed_dict = {}
    generated_features, = session.run(fetches, feed_dict)
    #print( generated_features)
    print( generated_features[0].shape)
    # The following worked when batch_size=1.
    # generated_features = [np.squeeze(x, axis=0) for x in generated_features]
    # If batch_size != 1, we just pick the first sample. Wastefull, yes.
    returnable = []
    if batch:
        for batchno in range(generated_features[0].shape[0]):
            returnable.append([x[batchno,:] for x in generated_features])
    else:
        returnable = [x[0,:] for x in generated_features]
    return returnable

def main(_):
    if not FLAGS.datadir:
        raise ValueError("Must set --datadir to midi music dir.")
    if not FLAGS.traindir:
        raise ValueError("Must set --traindir to dir where I can save model and plots.")

    restore_flags()

    summaries_dir = None
    plots_dir = None
    generated_data_dir = None
    summaries_dir = os.path.join(FLAGS.traindir, 'summaries')
    plots_dir = os.path.join(FLAGS.traindir, 'plots')
    generated_data_dir = os.path.join(FLAGS.traindir, 'generated_data')
    try: os.makedirs(FLAGS.traindir)
    except: pass
    try: os.makedirs(summaries_dir)
    except: pass
    try: os.makedirs(plots_dir)
    except: pass
    try: os.makedirs(generated_data_dir)
    except: pass
    directorynames = FLAGS.traindir.split('/')
    experiment_label = ''
    while not experiment_label:
        experiment_label = directorynames.pop()

    global_step = -1
    if os.path.exists(os.path.join(FLAGS.traindir, 'global_step.pkl')):
        with open(os.path.join(FLAGS.traindir, 'global_step.pkl'), 'r') as f:
            global_step = pkl.load(f)
    global_step += 1

    songfeatures_filename = os.path.join(FLAGS.traindir, 'num_song_features.pkl')
    metafeatures_filename = os.path.join(FLAGS.traindir, 'num_meta_features.pkl')
    synthetic=None
    if FLAGS.synthetic_chords:
        synthetic = 'chords'
        print('Training on synthetic chords!')
    if FLAGS.composer is not None:
        print('Single composer: {}'.format(FLAGS.composer))
    loader = music_data_utils.MusicDataLoader(FLAGS.datadir, FLAGS.select_validation_percentage, FLAGS.select_test_percentage, FLAGS.works_per_composer, FLAGS.pace_events, synthetic=synthetic, tones_per_cell=FLAGS.tones_per_cell, single_composer=FLAGS.composer)
    if FLAGS.synthetic_chords:
        # This is just a print out, to check the generated data.
        batch = loader.get_batch(batchsize=1, songlength=400)
        loader.get_midi_pattern([batch[1][0][i] for i in xrange(batch[1].shape[1])])

    num_song_features = loader.get_num_song_features()
    print('num_song_features:{}'.format(num_song_features))
    num_meta_features = loader.get_num_meta_features()
    print('num_meta_features:{}'.format(num_meta_features))

    train_start_time = time.time()
    checkpoint_path = os.path.join(FLAGS.traindir, "model.ckpt")

    songlength_ceiling = FLAGS.songlength

    if global_step < FLAGS.pretraining_epochs:
        FLAGS.songlength = int(min(((global_step+10)/10)*10,songlength_ceiling))
        FLAGS.songlength = int(min((global_step+1)*4,songlength_ceiling))

    with tf.Graph().as_default(), tf.Session(config=tf.ConfigProto(log_device_placement=FLAGS.log_device_placement)) as session:
        with tf.variable_scope("model", reuse=None) as scope:
            scope.set_regularizer(tf.contrib.layers.l2_regularizer(scale=FLAGS.reg_scale))
            m = RNNGAN(is_training=True, num_song_features=num_song_features, num_meta_features=num_meta_features)

        if FLAGS.initialize_d:
            vars_to_restore = {}
            for v in tf.trainable_variables():
                if v.name.startswith('model/G/'):
                    print(v.name[:-2])
                    vars_to_restore[v.name[:-2]] = v
            saver = tf.train.Saver(vars_to_restore)
            ckpt = tf.train.get_checkpoint_state(FLAGS.traindir)
            if ckpt and tf.gfile.Exists(ckpt.model_checkpoint_path):
                print("Reading model parameters from %s" % ckpt.model_checkpoint_path,end=" ")
                saver.restore(session, ckpt.model_checkpoint_path)
                session.run(tf.initialize_variables([v for v in tf.trainable_variables() if v.name.startswith('model/D/')]))
            else:
                print("Created model with fresh parameters.")
                session.run(tf.initialize_all_variables())
            saver = tf.train.Saver(tf.all_variables())
        else:
            saver = tf.train.Saver(tf.all_variables())
            ckpt = tf.train.get_checkpoint_state(FLAGS.traindir)
            if ckpt and tf.gfile.Exists(ckpt.model_checkpoint_path):
                print("Reading model parameters from %s" % ckpt.model_checkpoint_path)
                saver.restore(session, ckpt.model_checkpoint_path)
            else:
                print("Created model with fresh parameters.")
                session.run(tf.initialize_all_variables())

        run_metadata = None
        if FLAGS.profiling:
            run_metadata = tf.RunMetadata()
        if not FLAGS.sample:
            train_g_loss,train_d_loss = 1.0,1.0
            for i in range(global_step, FLAGS.max_epoch):
                lr_decay = FLAGS.lr_decay ** max(i - FLAGS.epochs_before_decay, 0.0)

                if global_step < FLAGS.pretraining_epochs:
                    #new_songlength = int(min(((i+10)/10)*10,songlength_ceiling))
                    new_songlength = int(min((i+1)*4,songlength_ceiling))
                else:
                    new_songlength = songlength_ceiling
                if new_songlength != FLAGS.songlength:
                    print('Changing songlength, now training on {} events from songs.'.format(new_songlength))
                    FLAGS.songlength = new_songlength
                    with tf.variable_scope("model", reuse=True) as scope:
                        scope.set_regularizer(tf.contrib.layers.l2_regularizer(scale=FLAGS.reg_scale))
                        m = RNNGAN(is_training=True, num_song_features=num_song_features, num_meta_features=num_meta_features)

                if not FLAGS.adam:
                    m.assign_lr(session, FLAGS.learning_rate * lr_decay)

                save = False
                do_exit = False

                print("Epoch: {} Learning rate: {:.3f}, pretraining: {}".format(i, session.run(m.lr), (i<FLAGS.pretraining_epochs)))
                if i<FLAGS.pretraining_epochs:
                    opt_d = tf.no_op()
                    if FLAGS.pretraining_d:
                        opt_d = m.opt_d
                    train_g_loss,train_d_loss = run_epoch(session, m, loader, 'train', m.opt_pretraining, opt_d, pretraining = True, verbose=True, run_metadata=run_metadata, pretraining_d=FLAGS.pretraining_d)
                    if FLAGS.pretraining_d:
                        try:
                            print("Epoch: {} Pretraining loss: G: {:.3f}, D: {:.3f}".format(i, train_g_loss, train_d_loss))
                        except:
                            print(train_g_loss)
                            print(train_d_loss)
                    else:
                        print("Epoch: {} Pretraining loss: G: {:.3f}".format(i, train_g_loss))
                else:
                    train_g_loss,train_d_loss = run_epoch(session, m, loader, 'train', m.opt_d, m.opt_g, verbose=True, run_metadata=run_metadata)
                    try:
                        print("Epoch: {} Train loss: G: {:.3f}, D: {:.3f}".format(i, train_g_loss, train_d_loss))
                    except:
                        print("Epoch: {} Train loss: G: {}, D: {}".format(i, train_g_loss, train_d_loss))
                valid_g_loss,valid_d_loss = run_epoch(session, m, loader, 'validation', tf.no_op(), tf.no_op())
                try:
                    print("Epoch: {} Valid loss: G: {:.3f}, D: {:.3f}".format(i, valid_g_loss, valid_d_loss))
                except:
                    print("Epoch: 
{} Valid loss: G: {}, D: {}".format(i, valid_g_loss, valid_d_loss))if train_d_loss == 0.0 and train_g_loss == 0.0:print('Both G and D train loss are zero. Exiting.')save = Truedo_exit = Trueif i % FLAGS.epochs_per_checkpoint == 0:save = Trueif FLAGS.exit_after > 0 and time.time() - train_start_time > FLAGS.exit_after*60:print("%s: Has been running for %d seconds. Will exit (exiting after %d minutes)."%(datetime.datetime.today().strftime('%Y-%m-%d %H:%M:%S'), (int)(time.time() - train_start_time), FLAGS.exit_after))save = Truedo_exit = Trueif save:saver.save(session, checkpoint_path, global_step=i)with open(os.path.join(FLAGS.traindir, 'global_step.pkl'), 'wb') as f:pkl.dump(i, f)if FLAGS.profiling:# Create the Timeline object, and write it to a jsontl = timeline.Timeline(run_metadata.step_stats)ctf = tl.generate_chrome_trace_format()with open(os.path.join(plots_dir, 'timeline.json'), 'w') as f:f.write(ctf)print('{}: Saving done!'.format(i))step_time, loss = 0.0, 0.0if train_d_loss is None: #pretrainingtrain_d_loss = 0.0valid_d_loss = 0.0valid_g_loss = 0.0if not os.path.exists(os.path.join(plots_dir, 'gnuplot-input.txt')):with open(os.path.join(plots_dir, 'gnuplot-input.txt'), 'w') as f:f.write('# global-step learning-rate train-g-loss train-d-loss valid-g-loss valid-d-loss\n')with open(os.path.join(plots_dir, 'gnuplot-input.txt'), 'a') as f:try:f.write('{} {:.4f} {:.2f} {:.2f} {:.3} {:.3f}\n'.format(i, m.lr.eval(), train_g_loss, train_d_loss, valid_g_loss, valid_d_loss))except:f.write('{} {} {} {} {} {}\n'.format(i, m.lr.eval(), train_g_loss, train_d_loss, valid_g_loss, valid_d_loss))if not os.path.exists(os.path.join(plots_dir, 'gnuplot-commands-loss.txt')):with open(os.path.join(plots_dir, 'gnuplot-commands-loss.txt'), 'a') as f:f.write('set terminal postscript eps color butt "Times" 14\nset yrange [0:400]\nset output "loss.eps"\nplot \'gnuplot-input.txt\' using ($1):($3) title \'train G\' with linespoints, \'gnuplot-input.txt\' using ($1):($4) title \'train D\' 
with linespoints, \'gnuplot-input.txt\' using ($1):($5) title \'valid G\' with linespoints, \'gnuplot-input.txt\' using ($1):($6) title \'valid D\' with linespoints, \n')if not os.path.exists(os.path.join(plots_dir, 'gnuplot-commands-midistats.txt')):with open(os.path.join(plots_dir, 'gnuplot-commands-midistats.txt'), 'a') as f:f.write('set terminal postscript eps color butt "Times" 14\nset yrange [0:127]\nset xrange [0:70]\nset output "midistats.eps"\nplot \'midi_stats.gnuplot\' using ($1):(100*$3) title \'Scale consistency, %\' with linespoints, \'midi_stats.gnuplot\' using ($1):($6) title \'Tone span, halftones\' with linespoints, \'midi_stats.gnuplot\' using ($1):($10) title \'Unique tones\' with linespoints, \'midi_stats.gnuplot\' using ($1):($23) title \'Intensity span, units\' with linespoints, \'midi_stats.gnuplot\' using ($1):(100*$24) title \'Polyphony, %\' with linespoints, \'midi_stats.gnuplot\' using ($1):($12) title \'3-tone repetitions\' with linespoints\n')try:Popen(['gnuplot','gnuplot-commands-loss.txt'], cwd=plots_dir)Popen(['gnuplot','gnuplot-commands-midistats.txt'], cwd=plots_dir)except:print('failed to run gnuplot. Please do so yourself: gnuplot gnuplot-commands.txt cwd={}'.format(plots_dir))song_data = sample(session, m, batch=True)midi_patterns = []print('formatting midi...')midi_time = time.time()for d in song_data:midi_patterns.append(loader.get_midi_pattern(d))print('done. time: {}'.format(time.time()-midi_time))filename = os.path.join(generated_data_dir, 'out-{}-{}-{}.mid'.format(experiment_label, i, datetime.datetime.today().strftime('%Y-%m-%d-%H-%M-%S')))loader.save_midi_pattern(filename, midi_patterns[0])stats = []print('getting stats...')stats_time = time.time()for p in midi_patterns:stats.append(get_all_stats(p))print('done. 
time: {}'.format(time.time()-stats_time))#print(stats)stats = [stat for stat in stats if stat is not None]if len(stats):stats_keys_string = ['scale']stats_keys = ['scale_score', 'tone_min', 'tone_max', 'tone_span', 'freq_min', 'freq_max', 'freq_span', 'tones_unique', 'repetitions_2', 'repetitions_3', 'repetitions_4', 'repetitions_5', 'repetitions_6', 'repetitions_7', 'repetitions_8', 'repetitions_9', 'estimated_beat', 'estimated_beat_avg_ticks_off', 'intensity_min', 'intensity_max', 'intensity_span', 'polyphony_score', 'top_2_interval_difference', 'top_3_interval_difference', 'num_tones']statsfilename = os.path.join(plots_dir, 'midi_stats.gnuplot')if not os.path.exists(statsfilename):with open(statsfilename, 'a') as f:f.write('# Average numers over one minibatch of size {}.\n'.format(FLAGS.batch_size))f.write('# global-step {} {}\n'.format(' '.join([s.replace(' ', '_') for s in stats_keys_string]), ' '.join(stats_keys)))with open(statsfilename, 'a') as f:f.write('{} {} {}\n'.format(i, ' '.join(['{}'.format(stats[0][key].replace(' ', '_')) for key in stats_keys_string]), ' '.join(['{:.3f}'.format(sum([s[key] for s in stats])/float(len(stats))) for key in stats_keys])))print('Saved {}.'.format(filename))if do_exit:if FLAGS.call_after is not None:print("%s: Will call \"%s\" before exiting."%(datetime.datetime.today().strftime('%Y-%m-%d %H:%M:%S'), FLAGS.call_after))res = call(FLAGS.call_after.split(" "))print ('{}: call returned {}.'.format(datetime.datetime.today().strftime('%Y-%m-%d %H:%M:%S'), res))exit()sys.stdout.flush()test_g_loss,test_d_loss = run_epoch(session, m, loader, 'test', tf.no_op(), tf.no_op())print("Test loss G: %.3f, D: %.3f" %(test_g_loss, test_d_loss))song_data = sample(session, m)filename = os.path.join(generated_data_dir, 'out-{}-{}-{}.mid'.format(experiment_label, i, datetime.datetime.today().strftime('%Y-%m-%d-%H-%M-%S')))loader.save_data(filename, song_data)print('Saved {}.'.format(filename))if __name__ == "__main__":tf.app.run()结论

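The author proposes C-RNN-GAN, a recurrent neural network for continuous data that is trained adversarially. The experiments indicate that adversarial training helps the model learn more varied patterns. The generated music still falls short of the music in the training data, but the music C-RNN-GAN produces moves closer to real music.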

Limitations and future work

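Although the model can generate music, its output still falls short of what human listeners would judge to be real music, and future work could look more closely at why this gap exists. The author suggests that the model architecture could be optimized further to improve the quality of the generated music. The model could also be studied on other kinds of continuous sequence data.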

Summary

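This week I read a paper on generating sequence data with GANs, as groundwork for reading the TimeGAN paper next. From it I learned how C-RNN-GAN uses adversarial training to generate continuous sequence data: the generator (G) consists of LSTM layers and a fully connected layer, while the discriminator (D) is a Bi-LSTM (bidirectional long short-term memory network). In other words, D is bidirectional and G is unidirectional. The author's experiments also show the strengths of C-RNN-GAN. The model is reasonably effective at generating sequence data, but limitations remain: a gap between generated and real sequences persists, the architecture could be optimized further, and applications to other settings are still unexplored. These limitations and directions give me useful reference points for my later work on data augmentation.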
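
To make the "D is bidirectional, G is unidirectional" point concrete, here is a minimal NumPy sketch of the two forward passes. It is only an illustration under my own assumptions, not the author's TensorFlow implementation: the helper names (`init_lstm`, `run_lstm`, `generator`, `discriminator`), the layer sizes, the random untrained weights, and the choice to average the per-step sigmoid outputs into one sequence-level probability are all hypothetical, and the generator here simply reads a noise vector at each step.

```python
import numpy as np

rng = np.random.default_rng(0)

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def init_lstm(input_size, hidden_size):
    """Random (untrained) weights for one LSTM layer, four gates stacked."""
    return {
        "W": rng.normal(0.0, 0.1, (input_size + hidden_size, 4 * hidden_size)),
        "b": np.zeros(4 * hidden_size),
        "n": hidden_size,
    }

def run_lstm(params, xs, reverse=False):
    """Run an LSTM over xs with shape (T, input_size).

    Returns the hidden states with shape (T, hidden_size). With
    reverse=True the sequence is read from the last step to the first.
    """
    n = params["n"]
    h, c = np.zeros(n), np.zeros(n)
    out = np.zeros((len(xs), n))
    steps = range(len(xs) - 1, -1, -1) if reverse else range(len(xs))
    for t in steps:
        z = np.concatenate([xs[t], h]) @ params["W"] + params["b"]
        i, f, o = sigmoid(z[:n]), sigmoid(z[n:2 * n]), sigmoid(z[2 * n:3 * n])
        g = np.tanh(z[3 * n:])
        c = f * c + i * g          # update the cell state
        h = o * np.tanh(c)         # new hidden state
        out[t] = h
    return out

def generator(noise, lstm, W_out, b_out):
    """Unidirectional LSTM + fully connected layer: noise (T, d) -> events (T, 4)."""
    return run_lstm(lstm, noise) @ W_out + b_out

def discriminator(seq, fwd, bwd, w_out, b_out):
    """Bi-LSTM: forward and backward states are concatenated per step."""
    hs = np.concatenate(
        [run_lstm(fwd, seq), run_lstm(bwd, seq, reverse=True)], axis=1)
    per_step = sigmoid(hs @ w_out + b_out)   # one probability per time step
    return per_step.mean()                   # assumed sequence-level decision

# Hypothetical sizes: 8 events, 5-dim noise, 16 hidden units, 4 features per event.
T, noise_dim, hidden, n_features = 8, 5, 16, 4
g_lstm = init_lstm(noise_dim, hidden)
W_g, b_g = rng.normal(0.0, 0.1, (hidden, n_features)), np.zeros(n_features)
d_fwd, d_bwd = init_lstm(n_features, hidden), init_lstm(n_features, hidden)
w_d, b_d = rng.normal(0.0, 0.1, 2 * hidden), 0.0

noise = rng.uniform(0.0, 1.0, (T, noise_dim))
fake = generator(noise, g_lstm, W_g, b_g)
p_real = discriminator(fake, d_fwd, d_bwd, w_d, b_d)
print(fake.shape, p_real)
```

Because G only reads the sequence forward, changing the noise at the last step leaves every earlier generated event unchanged, whereas D's backward pass lets the last event influence its judgement of the first one.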
