Deploying and Testing Kubernetes and DeepFlow

Deploying Kubernetes and DeepFlow on Ubuntu 22.04 LTS

Environment details:

Static hostname: k8smaster.example.net
Icon name: computer-vm
Chassis: vm
Machine ID: 22349ac6f9ba406293d0541bcba7c05d
Boot ID: 605a74a509724a88940bbbb69cde77f2
Virtualization: vmware
Operating System: Ubuntu 22.04.4 LTS
Kernel: Linux 5.15.0-106-generic
Architecture: x86-64
Hardware Vendor: VMware, Inc.
Hardware Model: VMware Virtual Platform

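To install a Kubernetes cluster on Ubuntu 22.04, follow these steps:

- Set the hostname and add entries to the hosts file:

Log in to the master node and set its hostname with the hostnamectl command:

hostnamectl set-hostname "k8smaster.example.net"

On each worker node, run the matching command to set its hostname:

hostnamectl set-hostname "k8sworker1.example.net"   # first worker node
hostnamectl set-hostname "k8sworker2.example.net"   # second worker node

Add the following entries to /etc/hosts on every node. The list covers the master and the first worker; add a matching line for k8sworker2 with its own IP address:

10.1.1.70 k8smaster.example.net k8smaster
10.1.1.71 k8sworker1.example.net k8sworker1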
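Editing /etc/hosts by hand on every node is easy to get wrong when the step is repeated. The sketch below appends an entry only if the host name is not already present. The function name and the HOSTS_FILE override are assumptions made for illustration; they let the function be tried on a scratch file first.

```shell
# Append "IP FQDN SHORTNAME" to a hosts file unless the FQDN is already there.
# HOSTS_FILE is a hypothetical override; it defaults to /etc/hosts.
add_hosts_entry() {
    ip=$1
    fqdn=$2
    short=$3
    file=${HOSTS_FILE:-/etc/hosts}
    # -w matches whole words, -F treats the name as a fixed string
    if grep -qwF -- "$fqdn" "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s %s\n' "$ip" "$fqdn" "$short" >> "$file"
}

# Usage (as root, on every node):
#   add_hosts_entry 10.1.1.70 k8smaster.example.net k8smaster
#   add_hosts_entry 10.1.1.71 k8sworker1.example.net k8sworker1
```

Because the check runs before the append, running the same call twice leaves a single line in the file.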

- Disable swap and add kernel settings:

Run the following commands on all nodes to turn swap off and comment out the swap entries in /etc/fstab:

swapoff -a
sed -i '/swap/ s/^\(.*\)$/#\1/g' /etc/fstab

Load the following kernel modules:

tee /etc/modules-load.d/containerd.conf <<EOF
overlay
br_netfilter
EOF
modprobe overlay
modprobe br_netfilter

Set the following kernel parameters for Kubernetes:

tee /etc/sysctl.d/kubernetes.conf <<EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
sysctl --system
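The sed expression used on /etc/fstab prefixes every line containing "swap" with a "#". A small sketch makes its effect visible on a scratch copy before the real file is touched. The two function names are made up for this example, and GNU sed is assumed for the -i option.

```shell
# Comment out every line that mentions "swap" in the given fstab-style file.
# This is the same sed expression as in the step above.
comment_out_swap() {
    sed -i '/swap/ s/^\(.*\)$/#\1/g' "$1"
}

# Print swap lines that are still active (not starting with '#').
# Prints nothing when all swap entries are commented out.
active_swap_lines() {
    grep -v '^[[:space:]]*#' "$1" | grep swap
}

# Usage on a copy:
#   cp /etc/fstab /tmp/fstab.test
#   comment_out_swap /tmp/fstab.test
#   active_swap_lines /tmp/fstab.test
```

The expression also matches lines that are already commented, so each repeated run adds another "#" to them. That is harmless, but it is visible in the file.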

- Install the containerd runtime:

First install the dependencies for containerd:

apt install -y curl gnupg2 software-properties-common apt-transport-https ca-certificates

Enable the Docker repository:

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | apt-key add -
add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable"

Install containerd:

apt update
apt install -y containerd.io

Configure containerd to use systemd as the cgroup driver:

containerd config default | tee /etc/containerd/config.toml > /dev/null 2>&1
sed -i 's/SystemdCgroup = false/SystemdCgroup = true/g' /etc/containerd/config.toml

Some settings were also changed by hand. The resulting /etc/containerd/config.toml follows; the hand-edited parts are marked with comments:

disabled_plugins = []
imports = []
oom_score = 0
plugin_dir = ""
required_plugins = []
root = "/var/lib/containerd"
state = "/run/containerd"
temp = ""
version = 2

[cgroup]
  path = ""

[debug]
  address = ""
  format = ""
  gid = 0
  level = ""
  uid = 0

[grpc]
  address = "/run/containerd/containerd.sock"
  gid = 0
  max_recv_message_size = 16777216
  max_send_message_size = 16777216
  tcp_address = ""
  tcp_tls_ca = ""
  tcp_tls_cert = ""
  tcp_tls_key = ""
  uid = 0

[metrics]
  address = ""
  grpc_histogram = false

[plugins]

  [plugins."io.containerd.gc.v1.scheduler"]
    deletion_threshold = 0
    mutation_threshold = 100
    pause_threshold = 0.02
    schedule_delay = "0s"
    startup_delay = "100ms"

  [plugins."io.containerd.grpc.v1.cri"]
    device_ownership_from_security_context = false
    disable_apparmor = false
    disable_cgroup = false
    disable_hugetlb_controller = true
    disable_proc_mount = false
    disable_tcp_service = true
    drain_exec_sync_io_timeout = "0s"
    enable_selinux = false
    enable_tls_streaming = false
    enable_unprivileged_icmp = false
    enable_unprivileged_ports = false
    ignore_deprecation_warnings = []
    ignore_image_defined_volumes = false
    max_concurrent_downloads = 3
    max_container_log_line_size = 16384
    netns_mounts_under_state_dir = false
    restrict_oom_score_adj = false
    # changed by hand: the following line
    sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.8"
    selinux_category_range = 1024
    stats_collect_period = 10
    stream_idle_timeout = "4h0m0s"
    stream_server_address = "127.0.0.1"
    stream_server_port = "0"
    systemd_cgroup = false
    tolerate_missing_hugetlb_controller = true
    unset_seccomp_profile = ""

    [plugins."io.containerd.grpc.v1.cri".cni]
      bin_dir = "/opt/cni/bin"
      conf_dir = "/etc/cni/net.d"
      conf_template = ""
      ip_pref = ""
      max_conf_num = 1

    [plugins."io.containerd.grpc.v1.cri".containerd]
      default_runtime_name = "runc"
      disable_snapshot_annotations = true
      discard_unpacked_layers = false
      ignore_rdt_not_enabled_errors = false
      no_pivot = false
      snapshotter = "overlayfs"

      [plugins."io.containerd.grpc.v1.cri".containerd.default_runtime]
        base_runtime_spec = ""
        cni_conf_dir = ""
        cni_max_conf_num = 0
        container_annotations = []
        pod_annotations = []
        privileged_without_host_devices = false
        runtime_engine = ""
        runtime_path = ""
        runtime_root = ""
        runtime_type = ""

        [plugins."io.containerd.grpc.v1.cri".containerd.default_runtime.options]

      [plugins."io.containerd.grpc.v1.cri".containerd.runtimes]

        [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]
          base_runtime_spec = ""
          cni_conf_dir = ""
          cni_max_conf_num = 0
          container_annotations = []
          pod_annotations = []
          privileged_without_host_devices = false
          runtime_engine = ""
          runtime_path = ""
          runtime_root = ""
          runtime_type = "io.containerd.runc.v2"

          [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]
            BinaryName = ""
            CriuImagePath = ""
            CriuPath = ""
            CriuWorkPath = ""
            IoGid = 0
            IoUid = 0
            NoNewKeyring = false
            NoPivotRoot = false
            Root = ""
            ShimCgroup = ""
            SystemdCgroup = true

      [plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime]
        base_runtime_spec = ""
        cni_conf_dir = ""
        cni_max_conf_num = 0
        container_annotations = []
        pod_annotations = []
        privileged_without_host_devices = false
        runtime_engine = ""
        runtime_path = ""
        runtime_root = ""
        runtime_type = ""

        [plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime.options]

    [plugins."io.containerd.grpc.v1.cri".image_decryption]
      key_model = "node"

    [plugins."io.containerd.grpc.v1.cri".registry]
      config_path = ""

      [plugins."io.containerd.grpc.v1.cri".registry.auths]

      [plugins."io.containerd.grpc.v1.cri".registry.configs]

      [plugins."io.containerd.grpc.v1.cri".registry.headers]

      [plugins."io.containerd.grpc.v1.cri".registry.mirrors]
        # added by hand: the following 4 lines
        [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]
          endpoint = ["https://docker.mirrors.ustc.edu.cn"]
        [plugins."io.containerd.grpc.v1.cri".registry.mirrors."k8s.gcr.io"]
          endpoint = ["https://registry.aliyuncs.com/google_containers"]

    [plugins."io.containerd.grpc.v1.cri".x509_key_pair_streaming]
      tls_cert_file = ""
      tls_key_file = ""

  [plugins."io.containerd.internal.v1.opt"]
    path = "/opt/containerd"

  [plugins."io.containerd.internal.v1.restart"]
    interval = "10s"

  [plugins."io.containerd.internal.v1.tracing"]
    sampling_ratio = 1.0
    service_name = "containerd"

  [plugins."io.containerd.metadata.v1.bolt"]
    content_sharing_policy = "shared"

  [plugins."io.containerd.monitor.v1.cgroups"]
    no_prometheus = false

  [plugins."io.containerd.runtime.v1.linux"]
    no_shim = false
    runtime = "runc"
    runtime_root = ""
    shim = "containerd-shim"
    shim_debug = false

  [plugins."io.containerd.runtime.v2.task"]
    platforms = ["linux/amd64"]
    sched_core = false

  [plugins."io.containerd.service.v1.diff-service"]
    default = ["walking"]

  [plugins."io.containerd.service.v1.tasks-service"]
    rdt_config_file = ""

  [plugins."io.containerd.snapshotter.v1.aufs"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.btrfs"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.devmapper"]
    async_remove = false
    base_image_size = ""
    discard_blocks = false
    fs_options = ""
    fs_type = ""
    pool_name = ""
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.native"]
    root_path = ""

  [plugins."io.containerd.snapshotter.v1.overlayfs"]
    mount_options = []
    root_path = ""
    sync_remove = false
    upperdir_label = false

  [plugins."io.containerd.snapshotter.v1.zfs"]
    root_path = ""

  [plugins."io.containerd.tracing.processor.v1.otlp"]
    endpoint = ""
    insecure = false
    protocol = ""

[proxy_plugins]

[stream_processors]

  [stream_processors."io.containerd.ocicrypt.decoder.v1.tar"]
    accepts = ["application/vnd.oci.image.layer.v1.tar+encrypted"]
    args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]
    env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]
    path = "ctd-decoder"
    returns = "application/vnd.oci.image.layer.v1.tar"

  [stream_processors."io.containerd.ocicrypt.decoder.v1.tar.gzip"]
    accepts = ["application/vnd.oci.image.layer.v1.tar+gzip+encrypted"]
    args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]
    env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]
    path = "ctd-decoder"
    returns = "application/vnd.oci.image.layer.v1.tar+gzip"

[timeouts]
  "io.containerd.timeout.bolt.open" = "0s"
  "io.containerd.timeout.shim.cleanup" = "5s"
  "io.containerd.timeout.shim.load" = "5s"
  "io.containerd.timeout.shim.shutdown" = "3s"
  "io.containerd.timeout.task.state" = "2s"

[ttrpc]
  address = ""
  gid = 0
  uid = 0

Restart containerd and enable it at boot:

systemctl restart containerd
systemctl enable containerd

Configure crictl:

cat > /etc/crictl.yaml <<EOF
runtime-endpoint: unix:///var/run/containerd/containerd.sock
image-endpoint: unix:///var/run/containerd/containerd.sock
timeout: 10
debug: false
pull-image-on-create: false
EOF
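Before moving on, it can help to confirm that the two containerd settings changed in this step are actually in the file. The following is a minimal sketch; the function name is hypothetical, and the file is only read, never modified.

```shell
# Report whether a containerd config enables the systemd cgroup driver for
# runc and which sandbox (pause) image it names. The path is optional.
check_containerd_config() {
    cfg=${1:-/etc/containerd/config.toml}
    # Case-sensitive on purpose: the lower-case systemd_cgroup key is a
    # different setting and must not be counted.
    if grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$cfg"; then
        echo "SystemdCgroup: true"
    else
        echo "SystemdCgroup: NOT enabled"
    fi
    # Print the value between the quotes of the sandbox_image line.
    sed -n 's/^[[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*"\(.*\)".*$/sandbox_image: \1/p' "$cfg"
}

# Usage:
#   check_containerd_config
#   check_containerd_config /path/to/another/config.toml
```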

- Add the Alibaba Cloud Kubernetes repository:

First, import the Alibaba Cloud GPG key:

curl -fsSL https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -

Then add the Alibaba Cloud Kubernetes repository:

tee /etc/apt/sources.list.d/kubernetes.list <<EOF
deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main
EOF

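- Install the Kubernetes components:

Update the package index and install kubelet, kubeadm and kubectl:

apt-get update
apt-get install -y kubelet kubeadm kubectl

Check the kubelet cgroup driver (this step can be skipped):

The kubelet should use systemd as its cgroup driver, matching the SystemdCgroup = true setting in the containerd configuration. The commented-out commands below would switch the kubelet from systemd to cgroupfs instead, so they are kept for reference only and should stay disabled:

# sed -i 's/cgroup-driver=systemd/cgroup-driver=cgroupfs/g' /var/lib/kubelet/kubeadm-flags.env
# systemctl daemon-reload
# systemctl restart kubelet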

- Initialize the Kubernetes cluster:

Initialize the cluster with kubeadm and point it at the Alibaba Cloud image registry:

kubeadm init --image-repository registry.aliyuncs.com/google_containers

The command prints the output below. Note the W0513 warning: the sandbox image configured in containerd (pause:3.8) differs from the pause:3.9 image that this kubeadm version recommends. Setting sandbox_image to registry.aliyuncs.com/google_containers/pause:3.9 in /etc/containerd/config.toml and restarting containerd removes the mismatch.

I0513 14:16:59.740096 17563 version.go:256] remote version is much newer: v1.30.0; falling back to: stable-1.28
[init] Using Kubernetes version: v1.28.9
[preflight] Running pre-flight checks
[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
W0513 14:17:01.440936 17563 checks.go:835] detected that the sandbox image "registry.aliyuncs.com/google_containers/pause:3.8" of the container runtime is inconsistent with that used by kubeadm. It is recommended that using "registry.aliyuncs.com/google_containers/pause:3.9" as the CRI sandbox image.
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
[certs] apiserver serving cert is signed for DNS names [k8smaster.example.net kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 10.1.1.70]
[certs] Generating "apiserver-kubelet-client" certificate and key
[certs] Generating "front-proxy-ca" certificate and key
[certs] Generating "front-proxy-client" certificate and key
[certs] Generating "etcd/ca" certificate and key
[certs] Generating "etcd/server" certificate and key
[certs] etcd/server serving cert is signed for DNS names [k8smaster.example.net localhost] and IPs [10.1.1.70 127.0.0.1 ::1]
[certs] Generating "etcd/peer" certificate and key
[certs] etcd/peer serving cert is signed for DNS names [k8smaster.example.net localhost] and IPs [10.1.1.70 127.0.0.1 ::1]
[certs] Generating "etcd/healthcheck-client" certificate and key
[certs] Generating "apiserver-etcd-client" certificate and key
[certs] Generating "sa" key and public key
[kubeconfig] Using kubeconfig folder "/etc/kubernetes"
[kubeconfig] Writing "admin.conf" kubeconfig file
[kubeconfig] Writing "kubelet.conf" kubeconfig file
[kubeconfig] Writing "controller-manager.conf" kubeconfig file
[kubeconfig] Writing "scheduler.conf" kubeconfig file
[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
[control-plane] Using manifest folder "/etc/kubernetes/manifests"
[control-plane] Creating static Pod manifest for "kube-apiserver"
[control-plane] Creating static Pod manifest for "kube-controller-manager"
[control-plane] Creating static Pod manifest for "kube-scheduler"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Starting the kubelet
[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
[apiclient] All control plane components are healthy after 4.002079 seconds
[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster
[upload-certs] Skipping phase. Please see --upload-certs
[mark-control-plane] Marking the node k8smaster.example.net as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]
[mark-control-plane] Marking the node k8smaster.example.net as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]
[bootstrap-token] Using token: m9z4yq.dok89ro6yt23wykr
[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes
[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.1.1.70:6443 --token m9z4yq.dok89ro6yt23wykr \
--discovery-token-ca-cert-hash sha256:17c3f29bd276592e668e9e6a7a187140a887254b4555cf7d293c3313d7c8a178
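The join command at the end of the kubeadm output is what each worker node has to run. If the output was saved to a file, the command can be pulled out as a single line. This is a sketch: the function name and the kubeadm-init.log file name are assumptions, and the parsing relies on kubeadm printing the command across lines joined by a trailing backslash.

```shell
# Read `kubeadm init` output on stdin and print the join command on one line.
extract_join_command() {
    awk '
        /^[ \t]*kubeadm join / { collecting = 1; cmd = "" }
        collecting {
            line = $0
            sub(/^[ \t]+/, "", line)            # drop leading indentation
            cont = sub(/[ \t]*\\$/, "", line)   # strip a trailing backslash
            cmd = (cmd == "" ? line : cmd " " line)
            if (!cont) { print cmd; collecting = 0 }
        }
    '
}

# Usage:
#   kubeadm init --image-repository registry.aliyuncs.com/google_containers | tee kubeadm-init.log
#   extract_join_command < kubeadm-init.log
```

If the output is no longer available, running `kubeadm token create --print-join-command` on the master prints a fresh join command.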

- Configure kubectl:

Set up kubectl access for the current user:

mkdir -p $HOME/.kube
cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
chown $(id -u):$(id -g) $HOME/.kube/config

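- Install a network plugin:

Install a Pod network plugin such as Calico or Flannel. For example, with Calico:

kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml
# Once the network plugin has finished initializing, the coredns containers become healthy
kubectl logs -n kube-system -l k8s-app=kube-dns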
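Nodes stay NotReady until the network plugin is up, so it is worth checking node status after this step. The sketch below filters the output of `kubectl get nodes --no-headers`; the function name is made up for this example.

```shell
# Read `kubectl get nodes --no-headers` output on stdin and print the names
# of nodes whose STATUS column (field 2) is not exactly "Ready".
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# Usage:
#   kubectl get nodes --no-headers | not_ready_nodes
```

An empty result means every node reports Ready. A cordoned node shows "Ready,SchedulingDisabled" and is therefore listed as well.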

- Verify the cluster:

Start an nginx pod. The manifest places the Pod and the Service in the test namespace, which must exist before the manifest is applied:

kubectl create namespace test

# vim nginx_pod.yml
apiVersion: v1
kind: Pod
metadata:
  name: test-nginx-pod
  namespace: test
  labels:
    app: nginx
spec:
  containers:
  - name: test-nginx-container
    image: nginx:latest
    ports:
    - containerPort: 80
  tolerations:
  - key: "node-role.kubernetes.io/control-plane"
    operator: "Exists"
    effect: "NoSchedule"
---
apiVersion: v1
kind: Service
# the Service and the Pod must be in the same namespace
metadata:
  name: nginx-service
  namespace: test
spec:
  type: NodePort
  # the selector must match the Pod's labels
  selector:
    app: nginx
  ports:
  - protocol: TCP
    port: 80
    nodePort: 30007
    targetPort: 80

Apply it:

kubectl apply -f nginx_pod.yml
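The Service above exposes nginx on nodePort 30007 of every node. The sketch below checks that a port number lies in the default NodePort range and builds the URL to test. Both function names are hypothetical; 30000-32767 is the kube-apiserver default for --service-node-port-range and may differ on a customized cluster.

```shell
# Succeed when the argument is an integer inside the default NodePort range.
valid_nodeport() {
    case $1 in
        ''|*[!0-9]*) return 1 ;;   # empty or non-numeric
    esac
    [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Build the URL under which a NodePort service is reachable on a node.
nodeport_url() {
    printf 'http://%s:%s/\n' "$1" "$2"
}

# Usage:
#   valid_nodeport 30007 && curl -I "$(nodeport_url 10.1.1.70 30007)"
```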


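Deploying opentelemetry-collector for testing

Applications have to integrate the OpenTelemetry API to emit telemetry. They send it to the otel-agent, which runs as a DaemonSet on every node. The otel-agent forwards the data to the otel-collector, which aggregates it and exports it to a backend that can process OTLP trace data, such as Zipkin or Jaeger.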

Custom test YAML file

Save the following as otel-collector.yaml. The Deployment mounts the ConfigMap at /conf but passes no --config argument, so the collector most likely starts with the configuration built into the image; add a --config argument pointing at /conf/config.yaml (as the official example further down does) if the ConfigMap should take effect.

apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-collector-conf
  namespace: default
data:
  # your configuration data
  config.yaml: |
    receivers:
      otlp:
        protocols:
          grpc:
          http:
    processors:
      batch:
    exporters:
      logging:
        loglevel: debug
    service:
      pipelines:
        traces:
          receivers: [otlp]
          processors: [batch]
          exporters: [logging]
---
apiVersion: v1
kind: Service
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
spec:
  type: NodePort
  ports:
  - port: 4317
    targetPort: 4317
    nodePort: 30080
    name: otlp-grpc
  - port: 8888
    targetPort: 8888
    name: metrics
  selector:
    component: otel-collector
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
spec:
  replicas: 1
  selector:
    matchLabels:
      component: otel-collector
  template:
    metadata:
      labels:
        component: otel-collector
    spec:
      tolerations:
      - key: node-role.kubernetes.io/control-plane
        operator: Exists
        effect: NoSchedule
      containers:
      - name: otel-collector
        image: otel/opentelemetry-collector:latest
        ports:
        - containerPort: 4317
        - containerPort: 8888
        env:
        - name: MY_POD_IP
          valueFrom:
            fieldRef:
              fieldPath: status.podIP
        volumeMounts:
        - name: otel-collector-config-vol
          mountPath: /conf
      volumes:
      - configMap:
          name: otel-collector-conf
        name: otel-collector-config-vol

Start

mkdir /conf
kubectl apply -f otel-collector.yaml
kubectl get -f otel-collector.yaml

Delete

kubectl delete -f otel-collector.yaml

Using the official example

kubectl apply -f https://raw.githubusercontent.com/open-telemetry/opentelemetry-collector/main/examples/k8s/otel-config.yaml

Modify the file as needed:

otel-config.yaml

---
apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-agent-conf
  labels:
    app: opentelemetry
    component: otel-agent-conf
data:
  otel-agent-config: |
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: ${env:MY_POD_IP}:4317
          http:
            endpoint: ${env:MY_POD_IP}:4318
    exporters:
      otlp:
        endpoint: "otel-collector.default:4317"
        tls:
          insecure: true
        sending_queue:
          num_consumers: 4
          queue_size: 100
        retry_on_failure:
          enabled: true
    processors:
      batch:
      memory_limiter:
        # 80% of maximum memory up to 2G
        limit_mib: 400
        # 25% of limit up to 2G
        spike_limit_mib: 100
        check_interval: 5s
    extensions:
      zpages: {}
    service:
      extensions: [zpages]
      pipelines:
        traces:
          receivers: [otlp]
          processors: [memory_limiter, batch]
          exporters: [otlp]
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: otel-agent
  labels:
    app: opentelemetry
    component: otel-agent
spec:
  selector:
    matchLabels:
      app: opentelemetry
      component: otel-agent
  template:
    metadata:
      labels:
        app: opentelemetry
        component: otel-agent
    spec:
      tolerations:
      - key: node-role.kubernetes.io/control-plane
        operator: Exists
        effect: NoSchedule
      containers:
      - command:
          - "/otelcol"
          - "--config=/conf/otel-agent-config.yaml"
        image: otel/opentelemetry-collector:0.94.0
        name: otel-agent
        resources:
          limits:
            cpu: 500m
            memory: 500Mi
          requests:
            cpu: 100m
            memory: 100Mi
        ports:
        - containerPort: 55679 # ZPages endpoint.
        - containerPort: 4317 # Default OpenTelemetry receiver port.
        - containerPort: 8888 # Metrics.
        env:
          - name: MY_POD_IP
            valueFrom:
              fieldRef:
                apiVersion: v1
                fieldPath: status.podIP
          - name: GOMEMLIMIT
            value: 400MiB
        volumeMounts:
        - name: otel-agent-config-vol
          mountPath: /conf
      volumes:
        - configMap:
            name: otel-agent-conf
            items:
              - key: otel-agent-config
                path: otel-agent-config.yaml
          name: otel-agent-config-vol
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-collector-conf
  labels:
    app: opentelemetry
    component: otel-collector-conf
data:
  otel-collector-config: |
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: ${env:MY_POD_IP}:4317
          http:
            endpoint: ${env:MY_POD_IP}:4318
    processors:
      batch:
      memory_limiter:
        # 80% of maximum memory up to 2G
        limit_mib: 1500
        # 25% of limit up to 2G
        spike_limit_mib: 512
        check_interval: 5s
    extensions:
      zpages: {}
    exporters:
      otlp:
        endpoint: "http://someotlp.target.com:4317" # Replace with a real endpoint.
        tls:
          insecure: true
      zipkin:
        endpoint: "http://10.1.1.10:9411/api/v2/spans"
        format: "proto"
    service:
      extensions: [zpages]
      pipelines:
        traces/1:
          receivers: [otlp]
          processors: [memory_limiter, batch]
          exporters: [zipkin]
---
apiVersion: v1
kind: Service
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
    component: otel-collector
spec:
  ports:
  - name: otlp-grpc # Default endpoint for OpenTelemetry gRPC receiver.
    port: 4317
    protocol: TCP
    targetPort: 4317
  - name: otlp-http # Default endpoint for OpenTelemetry HTTP receiver.
    port: 4318
    protocol: TCP
    targetPort: 4318
  - name: metrics # Default endpoint for querying metrics.
    port: 8888
  selector:
    component: otel-collector
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
    component: otel-collector
spec:
  selector:
    matchLabels:
      app: opentelemetry
      component: otel-collector
  minReadySeconds: 5
  progressDeadlineSeconds: 120
  replicas: 1 #TODO - adjust this to your own requirements
  template:
    metadata:
      labels:
        app: opentelemetry
        component: otel-collector
    spec:
      tolerations:
      - key: node-role.kubernetes.io/control-plane
        operator: Exists
        effect: NoSchedule
      containers:
      - command:
          - "/otelcol"
          - "--config=/conf/otel-collector-config.yaml"
        image: otel/opentelemetry-collector:0.94.0
        name: otel-collector
        resources:
          limits:
            cpu: 1
            memory: 2Gi
          requests:
            cpu: 200m
            memory: 400Mi
        ports:
        - containerPort: 55679 # Default endpoint for ZPages.
        - containerPort: 4317 # Default endpoint for OpenTelemetry receiver.
        - containerPort: 14250 # Default endpoint for Jaeger gRPC receiver.
        - containerPort: 14268 # Default endpoint for Jaeger HTTP receiver.
        - containerPort: 9411 # Default endpoint for Zipkin receiver.
        - containerPort: 8888 # Default endpoint for querying metrics.
        env:
          - name: MY_POD_IP
            valueFrom:
              fieldRef:
                apiVersion: v1
                fieldPath: status.podIP
          - name: GOMEMLIMIT
            value: 1600MiB
        volumeMounts:
        - name: otel-collector-config-vol
          mountPath: /conf
#        - name: otel-collector-secrets
#          mountPath: /secrets
      volumes:
        - configMap:
            name: otel-collector-conf
            items:
              - key: otel-collector-config
                path: otel-collector-config.yaml
          name: otel-collector-config-vol
#        - secret:
#            name: otel-collector-secrets
#            items:
#              - key: cert.pem
#                path: cert.pem
#              - key: key.pem
#                path: key.pem
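To check that traces actually travel through this pipeline without instrumenting an application, a hand-made span can be posted to an OTLP/HTTP receiver. The sketch below only builds the JSON body. The function name, the service and span names, and the fixed trace and span IDs are made up for illustration; the URL in the usage comment assumes the request is sent from inside the cluster to the otel-collector Service on port 4318.

```shell
# Print a minimal OTLP/HTTP JSON body containing one span.
# Arguments: service name, span name. The IDs are fixed demo values (32 and
# 16 hex characters); timestamps come from the current clock in seconds,
# padded to nanoseconds.
otlp_test_payload() {
    service=$1
    span=$2
    start=$(date +%s)
    end=$((start + 1))
    cat <<EOF
{"resourceSpans":[{"resource":{"attributes":[{"key":"service.name","value":{"stringValue":"$service"}}]},"scopeSpans":[{"scope":{"name":"manual-test"},"spans":[{"traceId":"5b8efff798038103d269b633813fc60c","spanId":"eee19b7ec3c1b174","name":"$span","kind":1,"startTimeUnixNano":"${start}000000000","endTimeUnixNano":"${end}000000000"}]}]}]}
EOF
}

# Usage (from a pod inside the cluster):
#   otlp_test_payload demo-app test-span |
#     curl -sS -X POST -H 'Content-Type: application/json' \
#          --data-binary @- http://otel-collector.default:4318/v1/traces
```

If the collector accepts the request, the span should show up in the Zipkin backend configured in the collector's exporters section.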

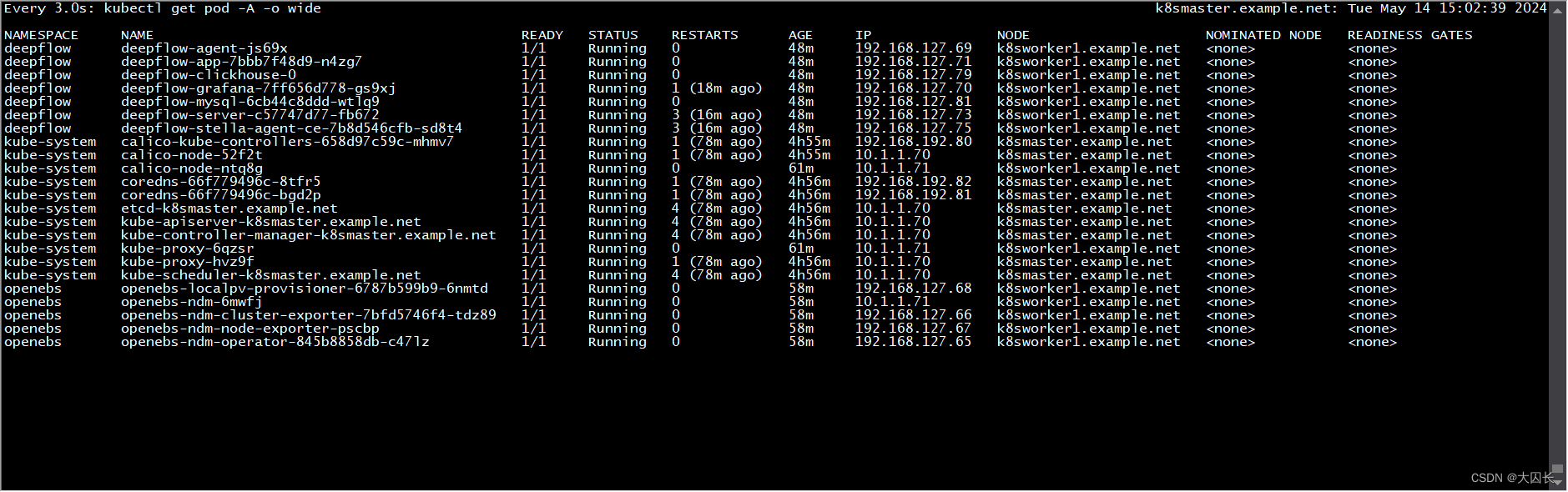

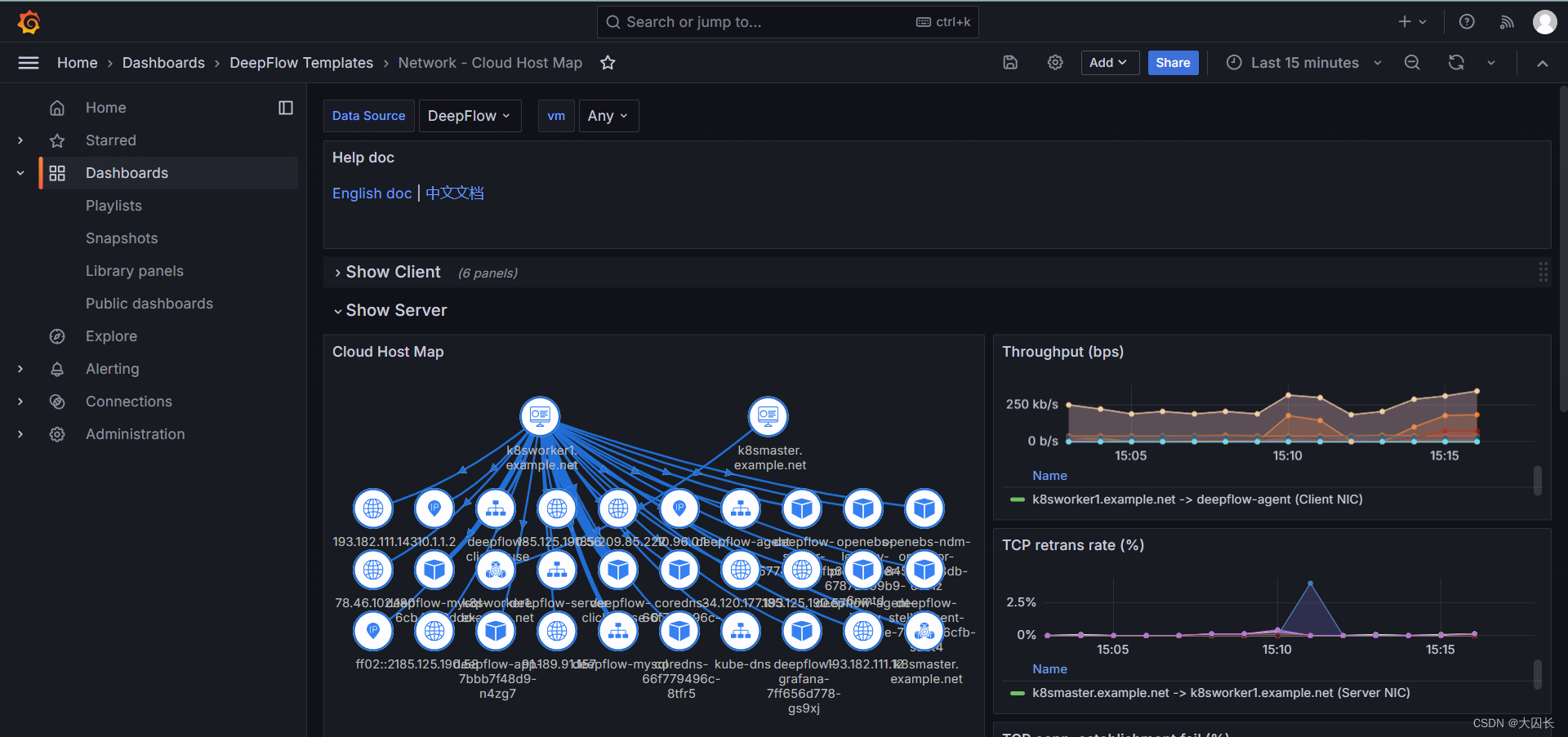

Deploying DeepFlow to monitor a single Kubernetes cluster

Official documentation

Official demo

Install Helm

snap install helm --classic

Set up persistent volumes

kubectl apply -f https://openebs.github.io/charts/openebs-operator.yaml
## config default storage class
kubectl patch storageclass openebs-hostpath -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'
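The patch marks openebs-hostpath as the default storage class. kubectl shows this as "(default)" after the class name, so the result can be confirmed with a small filter. This is a sketch with a hypothetical function name.

```shell
# Read `kubectl get storageclass --no-headers` output on stdin and print the
# names of the classes that kubectl marks with "(default)".
default_storage_classes() {
    awk '$2 == "(default)" { print $1 }'
}

# Usage:
#   kubectl get storageclass --no-headers | default_storage_classes
```

Exactly one name should be printed. More than one default class makes the choice of class for new claims ambiguous.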

Deploy DeepFlow

helm repo add deepflow https://deepflowio.github.io/deepflow
helm repo update deepflow # use `helm repo update` when helm < 3.7.0
helm install deepflow -n deepflow deepflow/deepflow --create-namespace

The install prints the following:

NAME: deepflow
LAST DEPLOYED: Tue May 14 14:13:50 2024
NAMESPACE: deepflow
STATUS: deployed
REVISION: 1
NOTES:
██████╗ ███████╗███████╗██████╗ ███████╗██╗ ██████╗ ██╗ ██╗
██╔══██╗██╔════╝██╔════╝██╔══██╗██╔════╝██║ ██╔═══██╗██║ ██║
██║ ██║█████╗ █████╗ ██████╔╝█████╗ ██║ ██║ ██║██║ █╗ ██║
██║ ██║██╔══╝ ██╔══╝ ██╔═══╝ ██╔══╝ ██║ ██║ ██║██║███╗██║
██████╔╝███████╗███████╗██║ ██║ ███████╗╚██████╔╝╚███╔███╔╝
╚═════╝ ╚══════╝╚══════╝╚═╝ ╚═╝ ╚══════╝ ╚═════╝ ╚══╝╚══╝

An automated observability platform for cloud-native developers.

# deepflow-agent Port for receiving trace, metrics, and log

deepflow-agent service: deepflow-agent.deepflow
deepflow-agent Host listening port: 38086

# Get the Grafana URL to visit by running these commands in the same shell

NODE_PORT=$(kubectl get --namespace deepflow -o jsonpath="{.spec.ports[0].nodePort}" services deepflow-grafana)
NODE_IP=$(kubectl get nodes -o jsonpath="{.items[0].status.addresses[0].address}")
echo -e "Grafana URL: http://$NODE_IP:$NODE_PORT \nGrafana auth: admin:deepflow"
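A STATUS of deployed only means that Helm submitted the manifests; the DeepFlow pods may still be starting. A minimal sketch for spotting pods that are not fully ready yet, based on the READY column of `kubectl get pods`, follows. The function name is an assumption.

```shell
# Read `kubectl get pods --no-headers` output on stdin and print name, READY
# and STATUS for every pod whose ready count differs from its container
# count. Completed pods (finished jobs) are skipped.
pods_not_ready() {
    awk '{
        split($2, r, "/")     # READY column looks like "1/1"
        if ($3 != "Completed" && r[1] != r[2]) print $1, $2, $3
    }'
}

# Usage:
#   kubectl get pods -n deepflow --no-headers | pods_not_ready
```

Once the command prints nothing, every DeepFlow pod reports all of its containers ready.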

Install deepflow-ctl on the node

curl -o /usr/bin/deepflow-ctl https://deepflow-ce.oss-cn-beijing.aliyuncs.com/bin/ctl/stable/linux/$(arch | sed 's|x86_64|amd64|' | sed 's|aarch64|arm64|')/deepflow-ctl
chmod a+x /usr/bin/deepflow-ctl
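The download URL embeds the CPU architecture, translated from the kernel's naming (x86_64, aarch64) to the naming used in the download path (amd64, arm64). The sketch below isolates that mapping so the URL can be inspected before anything is downloaded. Both function names are hypothetical; the sed expressions and the URL are the ones from the command above.

```shell
# Translate a machine name as printed by `arch` or `uname -m` into the
# directory name used in the download URL. Other names pass through unchanged.
map_arch() {
    printf '%s\n' "$1" | sed 's|x86_64|amd64|' | sed 's|aarch64|arm64|'
}

# Build the deepflow-ctl download URL for a machine name (default: this host).
deepflow_ctl_url() {
    printf 'https://deepflow-ce.oss-cn-beijing.aliyuncs.com/bin/ctl/stable/linux/%s/deepflow-ctl\n' \
        "$(map_arch "${1:-$(uname -m)}")"
}

# Usage:
#   deepflow_ctl_url            # URL for the current machine
#   deepflow_ctl_url aarch64    # URL for an ARM64 node
```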

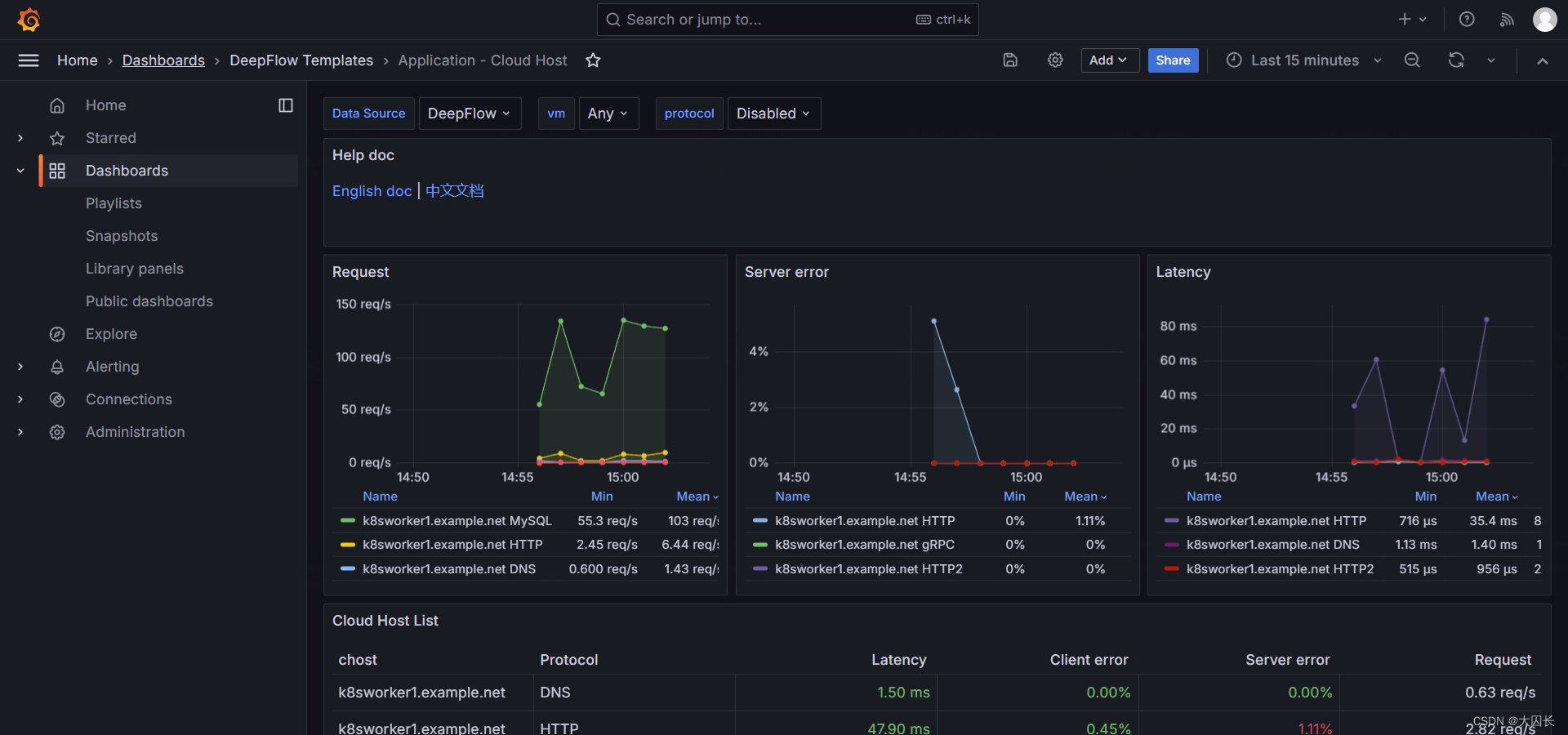

Access the Grafana page

NODE_PORT=$(kubectl get --namespace deepflow -o jsonpath="{.spec.ports[0].nodePort}" services deepflow-grafana)
NODE_IP=$(kubectl get nodes -o jsonpath="{.items[0].status.addresses[0].address}")
echo -e "Grafana URL: http://$NODE_IP:$NODE_PORT \nGrafana auth: admin:deepflow"

Ubuntu-22-LTS部署k8s和deepflow

环境详情:

Static hostname: k8smaster.example.net

Icon name: computer-vm

Chassis: vm

Machine ID: 22349ac6f9ba406293d0541bcba7c05d

Boot ID: 605a74a509724a88940bbbb69cde77f2

Virtualization: vmware

Operating System: Ubuntu 22.04.4 LTS

Kernel: Linux 5.15.0-106-generic

Architecture: x86-64

Hardware Vendor: VMware, Inc.

Hardware Model: VMware Virtual Platform

当您在 Ubuntu 22.04 上安装 Kubernetes 集群时,您可以遵循以下步骤:

-

设置主机名并在 hosts 文件中添加条目:

-

登录到主节点并使用

hostnamectl命令设置主机名:hostnamectl set-hostname "k8smaster.example.net" -

在工作节点上,运行以下命令设置主机名(分别对应第一个和第二个工作节点):

hostnamectl set-hostname "k8sworker1.example.net" # 第一个工作节点 hostnamectl set-hostname "k8sworker2.example.net" # 第二个工作节点 -

在每个节点的

/etc/hosts文件中添加以下条目:10.1.1.70 k8smaster.example.net k8smaster 10.1.1.71 k8sworker1.example.net k8sworker1

-

-

禁用 swap 并添加内核设置:

-

在所有节点上执行以下命令以禁用交换功能:

swapoff -a sed -i '/swap/ s/^\(.*\)$/#\1/g' /etc/fstab -

加载以下内核模块:

tee /etc/modules-load.d/containerd.conf <<EOF overlay br_netfilter EOF modprobe overlay modprobe br_netfilter -

为 Kubernetes 设置以下内核参数:

tee /etc/sysctl.d/kubernetes.conf <<EOF net.bridge.bridge-nf-call-ip6tables = 1 net.bridge.bridge-nf-call-iptables = 1 net.ipv4.ip_forward = 1 EOF sysctl --system

-

-

安装 containerd 运行时:

-

首先安装 containerd 的依赖项:

apt install -y curl gnupg2 software-properties-common apt-transport-https ca-certificates -

启用 Docker 存储库:

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | apt-key add - add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" -

安装 containerd:

apt update apt install -y containerd.io -

配置 containerd 使用 systemd 作为 cgroup:

containerd config default | tee /etc/containerd/config.toml > /dev/null 2>&1 sed -i 's/SystemdCgroup\\=false/SystemdCgroup\\=true/g' /etc/containerd/config.toml部分配置手动修改

disabled_plugins = [] imports = [] oom_score = 0 plugin_dir = "" required_plugins = [] root = "/var/lib/containerd" state = "/run/containerd" temp = "" version = 2[cgroup] path = ""[debug] address = "" format = "" gid = 0 level = "" uid = 0[grpc] address = "/run/containerd/containerd.sock" gid = 0 max_recv_message_size = 16777216 max_send_message_size = 16777216 tcp_address = "" tcp_tls_ca = "" tcp_tls_cert = "" tcp_tls_key = "" uid = 0[metrics] address = "" grpc_histogram = false[plugins][plugins."io.containerd.gc.v1.scheduler"]deletion_threshold = 0mutation_threshold = 100pause_threshold = 0.02schedule_delay = "0s"startup_delay = "100ms"[plugins."io.containerd.grpc.v1.cri"]device_ownership_from_security_context = falsedisable_apparmor = falsedisable_cgroup = falsedisable_hugetlb_controller = truedisable_proc_mount = falsedisable_tcp_service = truedrain_exec_sync_io_timeout = "0s"enable_selinux = falseenable_tls_streaming = falseenable_unprivileged_icmp = falseenable_unprivileged_ports = falseignore_deprecation_warnings = []ignore_image_defined_volumes = falsemax_concurrent_downloads = 3max_container_log_line_size = 16384netns_mounts_under_state_dir = falserestrict_oom_score_adj = false# 修改以下这行sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.8"selinux_category_range = 1024stats_collect_period = 10stream_idle_timeout = "4h0m0s"stream_server_address = "127.0.0.1"stream_server_port = "0"systemd_cgroup = falsetolerate_missing_hugetlb_controller = trueunset_seccomp_profile = ""[plugins."io.containerd.grpc.v1.cri".cni]bin_dir = "/opt/cni/bin"conf_dir = "/etc/cni/net.d"conf_template = ""ip_pref = ""max_conf_num = 1[plugins."io.containerd.grpc.v1.cri".containerd]default_runtime_name = "runc"disable_snapshot_annotations = truediscard_unpacked_layers = falseignore_rdt_not_enabled_errors = falseno_pivot = falsesnapshotter = "overlayfs"[plugins."io.containerd.grpc.v1.cri".containerd.default_runtime]base_runtime_spec = ""cni_conf_dir = ""cni_max_conf_num = 
0container_annotations = []pod_annotations = []privileged_without_host_devices = falseruntime_engine = ""runtime_path = ""runtime_root = ""runtime_type = ""[plugins."io.containerd.grpc.v1.cri".containerd.default_runtime.options][plugins."io.containerd.grpc.v1.cri".containerd.runtimes][plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]base_runtime_spec = ""cni_conf_dir = ""cni_max_conf_num = 0container_annotations = []pod_annotations = []privileged_without_host_devices = falseruntime_engine = ""runtime_path = ""runtime_root = ""runtime_type = "io.containerd.runc.v2"[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]BinaryName = ""CriuImagePath = ""CriuPath = ""CriuWorkPath = ""IoGid = 0IoUid = 0NoNewKeyring = falseNoPivotRoot = falseRoot = ""ShimCgroup = ""SystemdCgroup = true[plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime]base_runtime_spec = ""cni_conf_dir = ""cni_max_conf_num = 0container_annotations = []pod_annotations = []privileged_without_host_devices = falseruntime_engine = ""runtime_path = ""runtime_root = ""runtime_type = ""[plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime.options][plugins."io.containerd.grpc.v1.cri".image_decryption]key_model = "node"[plugins."io.containerd.grpc.v1.cri".registry]config_path = ""[plugins."io.containerd.grpc.v1.cri".registry.auths][plugins."io.containerd.grpc.v1.cri".registry.configs][plugins."io.containerd.grpc.v1.cri".registry.headers][plugins."io.containerd.grpc.v1.cri".registry.mirrors]# 添加如下4行[plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]endpoint = ["https://docker.mirrors.ustc.edu.cn"][plugins."io.containerd.grpc.v1.cri".registry.mirrors."k8s.gcr.io"]endpoint = ["https://registry.aliyuncs.com/google_containers"][plugins."io.containerd.grpc.v1.cri".x509_key_pair_streaming]tls_cert_file = ""tls_key_file = ""[plugins."io.containerd.internal.v1.opt"]path = 
"/opt/containerd"[plugins."io.containerd.internal.v1.restart"]interval = "10s"[plugins."io.containerd.internal.v1.tracing"]sampling_ratio = 1.0service_name = "containerd"[plugins."io.containerd.metadata.v1.bolt"]content_sharing_policy = "shared"[plugins."io.containerd.monitor.v1.cgroups"]no_prometheus = false[plugins."io.containerd.runtime.v1.linux"]no_shim = falseruntime = "runc"runtime_root = ""shim = "containerd-shim"shim_debug = false[plugins."io.containerd.runtime.v2.task"]platforms = ["linux/amd64"]sched_core = false[plugins."io.containerd.service.v1.diff-service"]default = ["walking"][plugins."io.containerd.service.v1.tasks-service"]rdt_config_file = ""[plugins."io.containerd.snapshotter.v1.aufs"]root_path = ""[plugins."io.containerd.snapshotter.v1.btrfs"]root_path = ""[plugins."io.containerd.snapshotter.v1.devmapper"]async_remove = falsebase_image_size = ""discard_blocks = falsefs_options = ""fs_type = ""pool_name = ""root_path = ""[plugins."io.containerd.snapshotter.v1.native"]root_path = ""[plugins."io.containerd.snapshotter.v1.overlayfs"]mount_options = []root_path = ""sync_remove = falseupperdir_label = false[plugins."io.containerd.snapshotter.v1.zfs"]root_path = ""[plugins."io.containerd.tracing.processor.v1.otlp"]endpoint = ""insecure = falseprotocol = ""[proxy_plugins][stream_processors][stream_processors."io.containerd.ocicrypt.decoder.v1.tar"]accepts = ["application/vnd.oci.image.layer.v1.tar+encrypted"]args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]path = "ctd-decoder"returns = "application/vnd.oci.image.layer.v1.tar"[stream_processors."io.containerd.ocicrypt.decoder.v1.tar.gzip"]accepts = ["application/vnd.oci.image.layer.v1.tar+gzip+encrypted"]args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]path = "ctd-decoder"returns = 
"application/vnd.oci.image.layer.v1.tar+gzip"[timeouts] "io.containerd.timeout.bolt.open" = "0s" "io.containerd.timeout.shim.cleanup" = "5s" "io.containerd.timeout.shim.load" = "5s" "io.containerd.timeout.shim.shutdown" = "3s" "io.containerd.timeout.task.state" = "2s"[ttrpc] address = "" gid = 0 uid = 0 -

重启并启用容器服务:

systemctl restart containerd systemctl enable containerd -

设置crictl

cat > /etc/crictl.yaml <<EOF runtime-endpoint: unix:///var/run/containerd/containerd.sock image-endpoint: unix:///var/run/containerd/containerd.sock timeout: 10 debug: false pull-image-on-create: false EOF

-

-

Add the Aliyun Kubernetes apt repository:

First, import the Aliyun GPG key (apt-key is deprecated on Ubuntu 22.04 and prints a warning, but still works):

curl -fsSL https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -

Then add the Aliyun Kubernetes repository:

tee /etc/apt/sources.list.d/kubernetes.list <<EOF
deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main
EOF

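Install the Kubernetes components:

Update the package index and install kubelet, kubeadm, and kubectl:

apt-get update
apt-get install -y kubelet kubeadm kubectl

Make sure the kubelet uses systemd as its cgroup driver. kubeadm configures this by default (since v1.22), so the commands below, which would switch the driver to cgroupfs, can be skipped:

# Optional, can be ignored
# sed -i 's/cgroup-driver=systemd/cgroup-driver=cgroupfs/g' /var/lib/kubelet/kubeadm-flags.env
# systemctl daemon-reload
# systemctl restart kubelet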

Initialize the Kubernetes cluster:

Run kubeadm init on the master node, pointing it at the Aliyun image registry:

# kubeadm init --image-repository registry.aliyuncs.com/google_containers
I0513 14:16:59.740096 17563 version.go:256] remote version is much newer: v1.30.0; falling back to: stable-1.28
[init] Using Kubernetes version: v1.28.9
[preflight] Running pre-flight checks
[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
W0513 14:17:01.440936 17563 checks.go:835] detected that the sandbox image "registry.aliyuncs.com/google_containers/pause:3.8" of the container runtime is inconsistent with that used by kubeadm. It is recommended that using "registry.aliyuncs.com/google_containers/pause:3.9" as the CRI sandbox image.
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
[certs] apiserver serving cert is signed for DNS names [k8smaster.example.net kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 10.1.1.70]
[certs] Generating "apiserver-kubelet-client" certificate and key
[certs] Generating "front-proxy-ca" certificate and key
[certs] Generating "front-proxy-client" certificate and key
[certs] Generating "etcd/ca" certificate and key
[certs] Generating "etcd/server" certificate and key
[certs] etcd/server serving cert is signed for DNS names [k8smaster.example.net localhost] and IPs [10.1.1.70 127.0.0.1 ::1]
[certs] Generating "etcd/peer" certificate and key
[certs] etcd/peer serving cert is signed for DNS names [k8smaster.example.net localhost] and IPs [10.1.1.70 127.0.0.1 ::1]
[certs] Generating "etcd/healthcheck-client" certificate and key
[certs] Generating "apiserver-etcd-client" certificate and key
[certs] Generating "sa" key and public key
[kubeconfig] Using kubeconfig folder "/etc/kubernetes"
[kubeconfig] Writing "admin.conf" kubeconfig file
[kubeconfig] Writing "kubelet.conf" kubeconfig file
[kubeconfig] Writing "controller-manager.conf" kubeconfig file
[kubeconfig] Writing "scheduler.conf" kubeconfig file
[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
[control-plane] Using manifest folder "/etc/kubernetes/manifests"
[control-plane] Creating static Pod manifest for "kube-apiserver"
[control-plane] Creating static Pod manifest for "kube-controller-manager"
[control-plane] Creating static Pod manifest for "kube-scheduler"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Starting the kubelet
[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
[apiclient] All control plane components are healthy after 4.002079 seconds
[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster
[upload-certs] Skipping phase. Please see --upload-certs
[mark-control-plane] Marking the node k8smaster.example.net as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]
[mark-control-plane] Marking the node k8smaster.example.net as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule]
[bootstrap-token] Using token: m9z4yq.dok89ro6yt23wykr
[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes
[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.1.1.70:6443 --token m9z4yq.dok89ro6yt23wykr \
    --discovery-token-ca-cert-hash sha256:17c3f29bd276592e668e9e6a7a187140a887254b4555cf7d293c3313d7c8a178
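The bootstrap token printed by kubeadm init expires (24 hours by default), so the join command above may no longer work when a worker is added later. A minimal sketch for regenerating it on the control-plane node follows; the wrapper name print_join_command is hypothetical, while kubeadm token create --print-join-command is the underlying kubeadm command.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: print a fresh "kubeadm join ..." command for workers.
# Must be run as root on the control-plane node.
print_join_command() {
  if ! command -v kubeadm >/dev/null 2>&1; then
    echo "kubeadm not found; run this on the control-plane node" >&2
    return 1
  fi
  # Creates a new bootstrap token and prints the matching join command.
  kubeadm token create --print-join-command
}
```

The printed line can then be pasted on each worker node as root.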

Configure kubectl:

Set up kubectl access for the current user:

mkdir -p $HOME/.kube
cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
chown $(id -u):$(id -g) $HOME/.kube/config

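Install a network plugin:

Install a Pod network plugin such as Calico or Flannel. For example, with Calico:

kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml
# Once the network plugin has finished initializing, the coredns containers become healthy
kubectl logs -n kube-system -l k8s-app=kube-dns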
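Rather than polling the logs by hand, the readiness of the nodes and of CoreDNS can be waited on directly. This is a minimal sketch using kubectl wait; the function name wait_for_cluster_ready and the 300s default timeout are assumptions, and the k8s-app=kube-dns label is the one used in the logs command above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: block until all nodes and the CoreDNS pods are Ready.
wait_for_cluster_ready() {
  local timeout="${1:-300s}"   # assumed default; adjust to your environment
  kubectl wait --for=condition=Ready nodes --all --timeout="$timeout" &&
    kubectl -n kube-system wait --for=condition=Ready pods \
      -l k8s-app=kube-dns --timeout="$timeout"
}
```

For example, wait_for_cluster_ready 600s returns non-zero if either wait times out.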

Verify the cluster:

Start an nginx pod:

# vim nginx_pod.yml
apiVersion: v1
kind: Pod
metadata:
  name: test-nginx-pod
  namespace: test
  labels:
    app: nginx
spec:
  containers:
  - name: test-nginx-container
    image: nginx:latest
    ports:
    - containerPort: 80
  tolerations:
  - key: "node-role.kubernetes.io/control-plane"
    operator: "Exists"
    effect: "NoSchedule"
---
apiVersion: v1
kind: Service
# The Service and the Pod must be in the same namespace
metadata:
  name: nginx-service
  namespace: test
spec:
  type: NodePort
  # The selector should match the Pod's labels
  selector:
    app: nginx
  ports:
  - protocol: TCP
    port: 80
    nodePort: 30007
    targetPort: 80

Apply it:

# The manifest uses the "test" namespace, which must exist first
kubectl create namespace test
kubectl apply -f nginx_pod.yml

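Deploying opentelemetry-collector for testing

Applications must be instrumented with the OpenTelemetry API. They send their telemetry to the otel-agent, which runs on every node as a DaemonSet. The otel-agent forwards the data to the otel-collector for aggregation, and the collector then exports it to a backend that can process OTLP trace data, such as Zipkin or Jaeger.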
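To exercise this pipeline without instrumenting a real application, a single hand-built span can be posted to an OTLP/HTTP receiver. The following is a rough sketch, assuming the receiver is reachable over HTTP on port 4318 with the standard /v1/traces path. The function names, service name, span name, and trace/span IDs are arbitrary test values, and the endpoint is a placeholder to fill in.

```shell
#!/usr/bin/env bash
# Build a minimal OTLP/JSON trace payload containing one span.
# All names and IDs below are arbitrary test values.
otlp_test_payload() {
  local service="${1:-demo-service}"
  local now_ns
  now_ns="$(date +%s)000000000"   # seconds since epoch, padded to nanoseconds
  cat <<EOF
{"resourceSpans":[{"resource":{"attributes":[{"key":"service.name","value":{"stringValue":"${service}"}}]},"scopeSpans":[{"scope":{"name":"manual-test"},"spans":[{"traceId":"5b8efff798038103d269b633813fc60c","spanId":"eee19b7ec3c1b174","name":"test-span","kind":1,"startTimeUnixNano":"${now_ns}","endTimeUnixNano":"${now_ns}"}]}]}]}
EOF
}

# POST the payload to an OTLP/HTTP receiver.
# $1 is the base URL (placeholder), e.g. http://<agent-pod-ip>:4318
send_test_span() {
  local endpoint="$1"
  otlp_test_payload "${2:-demo-service}" |
    curl -sS -X POST -H 'Content-Type: application/json' \
      --data-binary @- "${endpoint}/v1/traces"
}
```

If the span is accepted, it should show up in whatever exporter the receiving collector is configured with.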

Custom test YAML file

apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-collector-conf
  namespace: default
data:
  # Your collector configuration
  config.yaml: |
    receivers:
      otlp:
        protocols:
          grpc:
          http:
    processors:
      batch:
    exporters:
      logging:
        loglevel: debug
    service:
      pipelines:
        traces:
          receivers: [otlp]
          processors: [batch]
          exporters: [logging]
---
apiVersion: v1
kind: Service
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
spec:
  type: NodePort
  ports:
  - port: 4317
    targetPort: 4317
    nodePort: 30080
    name: otlp-grpc
  - port: 8888
    targetPort: 8888
    name: metrics
  selector:
    component: otel-collector
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
spec:
  replicas: 1
  selector:
    matchLabels:
      component: otel-collector
  template:
    metadata:
      labels:
        component: otel-collector
    spec:
      tolerations:
      - key: node-role.kubernetes.io/control-plane
        operator: Exists
        effect: NoSchedule
      containers:
      - name: otel-collector
        image: otel/opentelemetry-collector:latest
        ports:
        - containerPort: 4317
        - containerPort: 8888
        env:
        - name: MY_POD_IP
          valueFrom:
            fieldRef:
              fieldPath: status.podIP
        volumeMounts:
        - name: otel-collector-config-vol
          mountPath: /conf
      volumes:
      - configMap:
          name: otel-collector-conf
        name: otel-collector-config-vol

Apply

mkdir /conf
kubectl apply -f otel-collector.yaml
kubectl get -f otel-collector.yaml

Delete

kubectl delete -f otel-collector.yaml

Using the official example

kubectl apply -f https://raw.githubusercontent.com/open-telemetry/opentelemetry-collector/main/examples/k8s/otel-config.yaml

Modify the file as needed:

otel-config.yaml

---
apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-agent-conf
  labels:
    app: opentelemetry
    component: otel-agent-conf
data:
  otel-agent-config: |
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: ${env:MY_POD_IP}:4317
          http:
            endpoint: ${env:MY_POD_IP}:4318
    exporters:
      otlp:
        endpoint: "otel-collector.default:4317"
        tls:
          insecure: true
        sending_queue:
          num_consumers: 4
          queue_size: 100
        retry_on_failure:
          enabled: true
    processors:
      batch:
      memory_limiter:
        # 80% of maximum memory up to 2G
        limit_mib: 400
        # 25% of limit up to 2G
        spike_limit_mib: 100
        check_interval: 5s
    extensions:
      zpages: {}
    service:
      extensions: [zpages]
      pipelines:
        traces:
          receivers: [otlp]
          processors: [memory_limiter, batch]
          exporters: [otlp]

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: otel-agent
  labels:
    app: opentelemetry
    component: otel-agent
spec:
  selector:
    matchLabels:
      app: opentelemetry
      component: otel-agent
  template:
    metadata:
      labels:
        app: opentelemetry
        component: otel-agent
    spec:
      tolerations:
      - key: node-role.kubernetes.io/control-plane
        operator: Exists
        effect: NoSchedule
      containers:
      - command:
          - "/otelcol"
          - "--config=/conf/otel-agent-config.yaml"
        image: otel/opentelemetry-collector:0.94.0
        name: otel-agent
        resources:
          limits:
            cpu: 500m
            memory: 500Mi
          requests:
            cpu: 100m
            memory: 100Mi
        ports:
        - containerPort: 55679 # ZPages endpoint.
        - containerPort: 4317 # Default OpenTelemetry receiver port.
        - containerPort: 8888 # Metrics.
        env:
          - name: MY_POD_IP
            valueFrom:
              fieldRef:
                apiVersion: v1
                fieldPath: status.podIP
          - name: GOMEMLIMIT
            value: 400MiB
        volumeMounts:
        - name: otel-agent-config-vol
          mountPath: /conf
      volumes:
        - configMap:
            name: otel-agent-conf
            items:
              - key: otel-agent-config
                path: otel-agent-config.yaml
          name: otel-agent-config-vol

---
apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-collector-conf
  labels:
    app: opentelemetry
    component: otel-collector-conf
data:
  otel-collector-config: |
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: ${env:MY_POD_IP}:4317
          http:
            endpoint: ${env:MY_POD_IP}:4318
    processors:
      batch:
      memory_limiter:
        # 80% of maximum memory up to 2G
        limit_mib: 1500
        # 25% of limit up to 2G
        spike_limit_mib: 512
        check_interval: 5s
    extensions:
      zpages: {}
    exporters:
      otlp:
        endpoint: "http://someotlp.target.com:4317" # Replace with a real endpoint.
        tls:
          insecure: true
      zipkin:
        endpoint: "http://10.1.1.10:9411/api/v2/spans"
        format: "proto"
    service:
      extensions: [zpages]
      pipelines:
        traces/1:
          receivers: [otlp]
          processors: [memory_limiter, batch]
          exporters: [zipkin]

---
apiVersion: v1
kind: Service
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
    component: otel-collector
spec:
  ports:
  - name: otlp-grpc # Default endpoint for OpenTelemetry gRPC receiver.
    port: 4317
    protocol: TCP
    targetPort: 4317
  - name: otlp-http # Default endpoint for OpenTelemetry HTTP receiver.
    port: 4318
    protocol: TCP
    targetPort: 4318
  - name: metrics # Default endpoint for querying metrics.
    port: 8888
  selector:
    component: otel-collector

---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
    component: otel-collector
spec:
  selector:
    matchLabels:
      app: opentelemetry
      component: otel-collector
  minReadySeconds: 5
  progressDeadlineSeconds: 120
  replicas: 1 #TODO - adjust this to your own requirements
  template:
    metadata:
      labels:
        app: opentelemetry
        component: otel-collector
    spec:
      tolerations:
      - key: node-role.kubernetes.io/control-plane
        operator: Exists
        effect: NoSchedule
      containers:
      - command:
          - "/otelcol"
          - "--config=/conf/otel-collector-config.yaml"
        image: otel/opentelemetry-collector:0.94.0
        name: otel-collector
        resources:
          limits:
            cpu: 1
            memory: 2Gi
          requests:
            cpu: 200m
            memory: 400Mi
        ports:
        - containerPort: 55679 # Default endpoint for ZPages.
        - containerPort: 4317 # Default endpoint for OpenTelemetry receiver.
        - containerPort: 14250 # Default endpoint for Jaeger gRPC receiver.
        - containerPort: 14268 # Default endpoint for Jaeger HTTP receiver.
        - containerPort: 9411 # Default endpoint for Zipkin receiver.
        - containerPort: 8888 # Default endpoint for querying metrics.
        env:
          - name: MY_POD_IP
            valueFrom:
              fieldRef:
                apiVersion: v1
                fieldPath: status.podIP
          - name: GOMEMLIMIT
            value: 1600MiB
        volumeMounts:
        - name: otel-collector-config-vol
          mountPath: /conf
#        - name: otel-collector-secrets
#          mountPath: /secrets
      volumes:
        - configMap:
            name: otel-collector-conf
            items:
              - key: otel-collector-config
                path: otel-collector-config.yaml
          name: otel-collector-config-vol
#        - secret:
#            name: otel-collector-secrets
#            items:
#              - key: cert.pem
#                path: cert.pem
#              - key: key.pem
#                path: key.pem
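Once the manifest has been applied, the rollout of both workloads can be checked with kubectl rollout status. This is a minimal sketch, assuming the resources were created in the default namespace (the manifest sets no namespace and the agent exports to otel-collector.default:4317). The function name check_otel_rollout and the 120s timeout are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: wait for the otel-agent DaemonSet and the
# otel-collector Deployment from the manifest above to finish rolling out.
check_otel_rollout() {
  local ns="${1:-default}"   # assumed namespace
  kubectl -n "$ns" rollout status daemonset/otel-agent --timeout=120s &&
    kubectl -n "$ns" rollout status deployment/otel-collector --timeout=120s
}
```

A non-zero exit status means one of the rollouts did not complete in time; kubectl describe on the affected pods is the usual next step.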

Deploying DeepFlow to monitor a single k8s cluster

Official documentation

Official demo

Install Helm

snap install helm --classic

Set up persistent volumes

kubectl apply -f https://openebs.github.io/charts/openebs-operator.yaml
## config default storage class
kubectl patch storageclass openebs-hostpath -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'
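It is worth confirming that the patch took effect before installing DeepFlow, since its persistent volume claims rely on a default storage class. This is a minimal sketch that relies on kubectl get storageclass marking the default class with "(default)" after its name; the function name default_storage_class is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the name of every storage class that kubectl
# marks as "(default)". Expected to print openebs-hostpath after the patch.
default_storage_class() {
  kubectl get storageclass --no-headers | awk '$2 == "(default)" { print $1 }'
}
```

An empty result means no default class is set and the patch should be re-applied.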

Deploy DeepFlow

helm repo add deepflow https://deepflowio.github.io/deepflow
helm repo update deepflow # use `helm repo update` when helm < 3.7.0
helm install deepflow -n deepflow deepflow/deepflow --create-namespace

# Output:
NAME: deepflow
LAST DEPLOYED: Tue May 14 14:13:50 2024
NAMESPACE: deepflow
STATUS: deployed
REVISION: 1
NOTES:
██████╗ ███████╗███████╗██████╗ ███████╗██╗      ██████╗ ██╗    ██╗
██╔══██╗██╔════╝██╔════╝██╔══██╗██╔════╝██║     ██╔═══██╗██║    ██║
██║  ██║█████╗  █████╗  ██████╔╝█████╗  ██║     ██║   ██║██║ █╗ ██║
██║  ██║██╔══╝  ██╔══╝  ██╔═══╝ ██╔══╝  ██║     ██║   ██║██║███╗██║
██████╔╝███████╗███████╗██║     ██║     ███████╗╚██████╔╝╚███╔███╔╝
╚═════╝ ╚══════╝╚══════╝╚═╝     ╚═╝     ╚══════╝ ╚═════╝  ╚══╝╚══╝

An automated observability platform for cloud-native developers.

# deepflow-agent Port for receiving trace, metrics, and log

deepflow-agent service: deepflow-agent.deepflow
deepflow-agent Host listening port: 38086

# Get the Grafana URL to visit by running these commands in the same shell

NODE_PORT=$(kubectl get --namespace deepflow -o jsonpath="{.spec.ports[0].nodePort}" services deepflow-grafana)
NODE_IP=$(kubectl get nodes -o jsonpath="{.items[0].status.addresses[0].address}")
echo -e "Grafana URL: http://$NODE_IP:$NODE_PORT \nGrafana auth: admin:deepflow"

Install deepflow-ctl on the node

curl -o /usr/bin/deepflow-ctl https://deepflow-ce.oss-cn-beijing.aliyuncs.com/bin/ctl/stable/linux/$(arch | sed 's|x86_64|amd64|' | sed 's|aarch64|arm64|')/deepflow-ctl
chmod a+x /usr/bin/deepflow-ctl

Access the Grafana page

NODE_PORT=$(kubectl get --namespace deepflow -o jsonpath="{.spec.ports[0].nodePort}" services deepflow-grafana)
NODE_IP=$(kubectl get nodes -o jsonpath="{.items[0].status.addresses[0].address}")
echo -e "Grafana URL: http://$NODE_IP:$NODE_PORT \nGrafana auth: admin:deepflow"
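The commands above take the first address listed for the first node, which is not guaranteed to be the internal IP. A slightly more defensive sketch is shown below. It selects the InternalIP address explicitly and then probes Grafana's /api/health endpoint; the function names grafana_url and check_grafana are hypothetical, and the service name deepflow-grafana is taken from the Helm notes above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the Grafana URL from the NodePort of the
# deepflow-grafana service and the InternalIP of the first node.
grafana_url() {
  local port ip
  port=$(kubectl get --namespace deepflow \
    -o jsonpath='{.spec.ports[0].nodePort}' services deepflow-grafana) || return 1
  ip=$(kubectl get nodes \
    -o jsonpath='{.items[0].status.addresses[?(@.type=="InternalIP")].address}') || return 1
  echo "http://${ip}:${port}"
}

# Hypothetical helper: query Grafana's health endpoint; fails on HTTP errors.
check_grafana() {
  curl -fsS "$(grafana_url)/api/health"
}
```

Calling check_grafana from a machine that can reach the node should return a small JSON document once Grafana is up.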

Ubuntu-22-LTS部署k8s和deepflow

环境详情:

Static hostname: k8smaster.example.net

Icon name: computer-vm

Chassis: vm

Machine ID: 22349ac6f9ba406293d0541bcba7c05d

Boot ID: 605a74a509724a88940bbbb69cde77f2

Virtualization: vmware

Operating System: Ubuntu 22.04.4 LTS

Kernel: Linux 5.15.0-106-generic

Architecture: x86-64

Hardware Vendor: VMware, Inc.

Hardware Model: VMware Virtual Platform

当您在 Ubuntu 22.04 上安装 Kubernetes 集群时,您可以遵循以下步骤:

-

设置主机名并在 hosts 文件中添加条目:

-

登录到主节点并使用

hostnamectl命令设置主机名:hostnamectl set-hostname "k8smaster.example.net" -

在工作节点上,运行以下命令设置主机名(分别对应第一个和第二个工作节点):

hostnamectl set-hostname "k8sworker1.example.net" # 第一个工作节点 hostnamectl set-hostname "k8sworker2.example.net" # 第二个工作节点 -

在每个节点的

/etc/hosts文件中添加以下条目:10.1.1.70 k8smaster.example.net k8smaster 10.1.1.71 k8sworker1.example.net k8sworker1

-

-

禁用 swap 并添加内核设置:

-

在所有节点上执行以下命令以禁用交换功能:

swapoff -a sed -i '/swap/ s/^\(.*\)$/#\1/g' /etc/fstab -

加载以下内核模块:

tee /etc/modules-load.d/containerd.conf <<EOF overlay br_netfilter EOF modprobe overlay modprobe br_netfilter -

为 Kubernetes 设置以下内核参数:

tee /etc/sysctl.d/kubernetes.conf <<EOF net.bridge.bridge-nf-call-ip6tables = 1 net.bridge.bridge-nf-call-iptables = 1 net.ipv4.ip_forward = 1 EOF sysctl --system

-

-

安装 containerd 运行时:

-

首先安装 containerd 的依赖项:

apt install -y curl gnupg2 software-properties-common apt-transport-https ca-certificates -

启用 Docker 存储库:

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | apt-key add - add-apt-repository "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" -

安装 containerd:

apt update apt install -y containerd.io -

配置 containerd 使用 systemd 作为 cgroup:

containerd config default | tee /etc/containerd/config.toml > /dev/null 2>&1 sed -i 's/SystemdCgroup\\=false/SystemdCgroup\\=true/g' /etc/containerd/config.toml部分配置手动修改

disabled_plugins = [] imports = [] oom_score = 0 plugin_dir = "" required_plugins = [] root = "/var/lib/containerd" state = "/run/containerd" temp = "" version = 2[cgroup] path = ""[debug] address = "" format = "" gid = 0 level = "" uid = 0[grpc] address = "/run/containerd/containerd.sock" gid = 0 max_recv_message_size = 16777216 max_send_message_size = 16777216 tcp_address = "" tcp_tls_ca = "" tcp_tls_cert = "" tcp_tls_key = "" uid = 0[metrics] address = "" grpc_histogram = false[plugins][plugins."io.containerd.gc.v1.scheduler"]deletion_threshold = 0mutation_threshold = 100pause_threshold = 0.02schedule_delay = "0s"startup_delay = "100ms"[plugins."io.containerd.grpc.v1.cri"]device_ownership_from_security_context = falsedisable_apparmor = falsedisable_cgroup = falsedisable_hugetlb_controller = truedisable_proc_mount = falsedisable_tcp_service = truedrain_exec_sync_io_timeout = "0s"enable_selinux = falseenable_tls_streaming = falseenable_unprivileged_icmp = falseenable_unprivileged_ports = falseignore_deprecation_warnings = []ignore_image_defined_volumes = falsemax_concurrent_downloads = 3max_container_log_line_size = 16384netns_mounts_under_state_dir = falserestrict_oom_score_adj = false# 修改以下这行sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.8"selinux_category_range = 1024stats_collect_period = 10stream_idle_timeout = "4h0m0s"stream_server_address = "127.0.0.1"stream_server_port = "0"systemd_cgroup = falsetolerate_missing_hugetlb_controller = trueunset_seccomp_profile = ""[plugins."io.containerd.grpc.v1.cri".cni]bin_dir = "/opt/cni/bin"conf_dir = "/etc/cni/net.d"conf_template = ""ip_pref = ""max_conf_num = 1[plugins."io.containerd.grpc.v1.cri".containerd]default_runtime_name = "runc"disable_snapshot_annotations = truediscard_unpacked_layers = falseignore_rdt_not_enabled_errors = falseno_pivot = falsesnapshotter = "overlayfs"[plugins."io.containerd.grpc.v1.cri".containerd.default_runtime]base_runtime_spec = ""cni_conf_dir = ""cni_max_conf_num = 
0container_annotations = []pod_annotations = []privileged_without_host_devices = falseruntime_engine = ""runtime_path = ""runtime_root = ""runtime_type = ""[plugins."io.containerd.grpc.v1.cri".containerd.default_runtime.options][plugins."io.containerd.grpc.v1.cri".containerd.runtimes][plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc]base_runtime_spec = ""cni_conf_dir = ""cni_max_conf_num = 0container_annotations = []pod_annotations = []privileged_without_host_devices = falseruntime_engine = ""runtime_path = ""runtime_root = ""runtime_type = "io.containerd.runc.v2"[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]BinaryName = ""CriuImagePath = ""CriuPath = ""CriuWorkPath = ""IoGid = 0IoUid = 0NoNewKeyring = falseNoPivotRoot = falseRoot = ""ShimCgroup = ""SystemdCgroup = true[plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime]base_runtime_spec = ""cni_conf_dir = ""cni_max_conf_num = 0container_annotations = []pod_annotations = []privileged_without_host_devices = falseruntime_engine = ""runtime_path = ""runtime_root = ""runtime_type = ""[plugins."io.containerd.grpc.v1.cri".containerd.untrusted_workload_runtime.options][plugins."io.containerd.grpc.v1.cri".image_decryption]key_model = "node"[plugins."io.containerd.grpc.v1.cri".registry]config_path = ""[plugins."io.containerd.grpc.v1.cri".registry.auths][plugins."io.containerd.grpc.v1.cri".registry.configs][plugins."io.containerd.grpc.v1.cri".registry.headers][plugins."io.containerd.grpc.v1.cri".registry.mirrors]# 添加如下4行[plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]endpoint = ["https://docker.mirrors.ustc.edu.cn"][plugins."io.containerd.grpc.v1.cri".registry.mirrors."k8s.gcr.io"]endpoint = ["https://registry.aliyuncs.com/google_containers"][plugins."io.containerd.grpc.v1.cri".x509_key_pair_streaming]tls_cert_file = ""tls_key_file = ""[plugins."io.containerd.internal.v1.opt"]path = 
"/opt/containerd"[plugins."io.containerd.internal.v1.restart"]interval = "10s"[plugins."io.containerd.internal.v1.tracing"]sampling_ratio = 1.0service_name = "containerd"[plugins."io.containerd.metadata.v1.bolt"]content_sharing_policy = "shared"[plugins."io.containerd.monitor.v1.cgroups"]no_prometheus = false[plugins."io.containerd.runtime.v1.linux"]no_shim = falseruntime = "runc"runtime_root = ""shim = "containerd-shim"shim_debug = false[plugins."io.containerd.runtime.v2.task"]platforms = ["linux/amd64"]sched_core = false[plugins."io.containerd.service.v1.diff-service"]default = ["walking"][plugins."io.containerd.service.v1.tasks-service"]rdt_config_file = ""[plugins."io.containerd.snapshotter.v1.aufs"]root_path = ""[plugins."io.containerd.snapshotter.v1.btrfs"]root_path = ""[plugins."io.containerd.snapshotter.v1.devmapper"]async_remove = falsebase_image_size = ""discard_blocks = falsefs_options = ""fs_type = ""pool_name = ""root_path = ""[plugins."io.containerd.snapshotter.v1.native"]root_path = ""[plugins."io.containerd.snapshotter.v1.overlayfs"]mount_options = []root_path = ""sync_remove = falseupperdir_label = false[plugins."io.containerd.snapshotter.v1.zfs"]root_path = ""[plugins."io.containerd.tracing.processor.v1.otlp"]endpoint = ""insecure = falseprotocol = ""[proxy_plugins][stream_processors][stream_processors."io.containerd.ocicrypt.decoder.v1.tar"]accepts = ["application/vnd.oci.image.layer.v1.tar+encrypted"]args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]path = "ctd-decoder"returns = "application/vnd.oci.image.layer.v1.tar"[stream_processors."io.containerd.ocicrypt.decoder.v1.tar.gzip"]accepts = ["application/vnd.oci.image.layer.v1.tar+gzip+encrypted"]args = ["--decryption-keys-path", "/etc/containerd/ocicrypt/keys"]env = ["OCICRYPT_KEYPROVIDER_CONFIG=/etc/containerd/ocicrypt/ocicrypt_keyprovider.conf"]path = "ctd-decoder"returns = 
"application/vnd.oci.image.layer.v1.tar+gzip"[timeouts] "io.containerd.timeout.bolt.open" = "0s" "io.containerd.timeout.shim.cleanup" = "5s" "io.containerd.timeout.shim.load" = "5s" "io.containerd.timeout.shim.shutdown" = "3s" "io.containerd.timeout.task.state" = "2s"[ttrpc] address = "" gid = 0 uid = 0 -

重启并启用容器服务:

systemctl restart containerd systemctl enable containerd -

设置crictl

cat > /etc/crictl.yaml <<EOF runtime-endpoint: unix:///var/run/containerd/containerd.sock image-endpoint: unix:///var/run/containerd/containerd.sock timeout: 10 debug: false pull-image-on-create: false EOF

-

-

添加阿里云的 Kubernetes 源:

-

首先,导入阿里云的 GPG 密钥:

curl -fsSL https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add - -

然后,添加阿里云的 Kubernetes 源:

tee /etc/apt/sources.list.d/kubernetes.list <<EOF deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main EOF

-

-

安装 Kubernetes 组件:

-

更新软件包索引并安装 kubelet、kubeadm 和 kubectl:

apt-get update apt-get install -y kubelet kubeadm kubectl -

设置 kubelet 使用 systemd 作为 cgroup 驱动:

# 可忽略 # sed -i 's/cgroup-driver=systemd/cgroup-driver=cgroupfs/g' /var/lib/kubelet/kubeadm-flags.env # systemctl daemon-reload # systemctl restart kubelet

-

-

初始化 Kubernetes 集群:

-

使用 kubeadm 初始化集群,并指定阿里云的镜像仓库:

# kubeadm init --image-repository registry.aliyuncs.com/google_containers I0513 14:16:59.740096 17563 version.go:256] remote version is much newer: v1.30.0; falling back to: stable-1.28 [init] Using Kubernetes version: v1.28.9 [preflight] Running pre-flight checks [preflight] Pulling images required for setting up a Kubernetes cluster [preflight] This might take a minute or two, depending on the speed of your internet connection [preflight] You can also perform this action in beforehand using 'kubeadm config images pull' W0513 14:17:01.440936 17563 checks.go:835] detected that the sandbox image "registry.aliyuncs.com/google_containers/pause:3.8" of the container runtime is inconsistent with that used by kubeadm. It is recommended that using "registry.aliyuncs.com/google_containers/pause:3.9" as the CRI sandbox image. [certs] Using certificateDir folder "/etc/kubernetes/pki" [certs] Generating "ca" certificate and key [certs] Generating "apiserver" certificate and key [certs] apiserver serving cert is signed for DNS names [k8smaster.example.net kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc. 
cluster.local] and IPs [10.96.0.1 10.1.1.70] [certs] Generating "apiserver-kubelet-client" certificate and key [certs] Generating "front-proxy-ca" certificate and key [certs] Generating "front-proxy-client" certificate and key [certs] Generating "etcd/ca" certificate and key [certs] Generating "etcd/server" certificate and key [certs] etcd/server serving cert is signed for DNS names [k8smaster.example.net localhost] and IPs [10.1.1.70 127.0.0.1 ::1] [certs] Generating "etcd/peer" certificate and key [certs] etcd/peer serving cert is signed for DNS names [k8smaster.example.net localhost] and IPs [10.1.1.70 127.0.0.1 ::1] [certs] Generating "etcd/healthcheck-client" certificate and key [certs] Generating "apiserver-etcd-client" certificate and key [certs] Generating "sa" key and public key [kubeconfig] Using kubeconfig folder "/etc/kubernetes" [kubeconfig] Writing "admin.conf" kubeconfig file [kubeconfig] Writing "kubelet.conf" kubeconfig file [kubeconfig] Writing "controller-manager.conf" kubeconfig file [kubeconfig] Writing "scheduler.conf" kubeconfig file [etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests" [control-plane] Using manifest folder "/etc/kubernetes/manifests" [control-plane] Creating static Pod manifest for "kube-apiserver" [control-plane] Creating static Pod manifest for "kube-controller-manager" [control-plane] Creating static Pod manifest for "kube-scheduler" [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env" [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml" [kubelet-start] Starting the kubelet [wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". 
This can take up to 4m0s [apiclient] All control plane components are healthy after 4.002079 seconds [upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace [kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster [upload-certs] Skipping phase. Please see --upload-certs [mark-control-plane] Marking the node k8smaster.example.net as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes. io/exclude-from-external-load-balancers] [mark-control-plane] Marking the node k8smaster.example.net as control-plane by adding the taints [node-role.kubernetes.io/control-plane:NoSchedule] [bootstrap-token] Using token: m9z4yq.dok89ro6yt23wykr [bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles [bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes [bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials [bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token [bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster [bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace [kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key [addons] Applied essential addon: CoreDNS [addons] Applied essential addon: kube-proxyYour Kubernetes control-plane has initialized successfully!To start using your cluster, you need to run the following as a regular user:mkdir -p $HOME/.kubesudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/configsudo chown $(id -u):$(id -g) $HOME/.kube/configAlternatively, if you are the root user, you can run:export 
KUBECONFIG=/etc/kubernetes/admin.confYou should now deploy a pod network to the cluster. Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:https://kubernetes.io/docs/concepts/cluster-administration/addons/Then you can join any number of worker nodes by running the following on each as root:kubeadm join 10.1.1.70:6443 --token m9z4yq.dok89ro6yt23wykr \--discovery-token-ca-cert-hash sha256:17c3f29bd276592e668e9e6a7a187140a887254b4555cf7d293c3313d7c8a178

-

-

配置 kubectl:

-

为当前用户设置 kubectl 访问:

mkdir -p $HOME/.kube cp -i /etc/kubernetes/admin.conf $HOME/.kube/config chown $(id -u):$(id -g) $HOME/.kube/config

-

-

安装网络插件:

-

安装一个 Pod 网络插件,例如 Calico 或 Flannel。例如,使用 Calico:

kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml # 网络插件初始化完毕之后,coredns容器就正常了 kubectl logs -n kube-system -l k8s-app=kube-dns

-

-

验证集群:

-

启动一个nginx pod:

# vim nginx_pod.yml apiVersion: v1 kind: Pod metadata:name: test-nginx-podnamespace: testlabels:app: nginx spec:containers:- name: test-nginx-containerimage: nginx:latestports:- containerPort: 80tolerations:- key: "node-role.kubernetes.io/control-plane"operator: "Exists"effect: "NoSchedule" ---apiVersion: v1 kind: Service # service和pod必须位于同一个namespace metadata:name: nginx-servicenamespace: test spec:type: NodePort# selector应该匹配pod的labelsselector:app: nginxports:- protocol: TCPport: 80nodePort: 30007targetPort: 80启动

kubectl apply -f nginx_pod.yml

-

部署opentelemetry-collector测试

otel-collector和otel-agent需要程序集成API,发送到以DaemonSet运行在每个节点的otel-agent,otel-agent再将数据发送给otel-collector汇总,然后发往可以处理otlp trace数据的后端,如zipkin、jaeger等。

自定义测试yaml文件

apiVersion: v1

kind: ConfigMap

metadata:name: otel-collector-confnamespace: default

data:# 你的配置数据config.yaml: |receivers:otlp:protocols:grpc:http:processors:batch:exporters:logging:loglevel: debugservice:pipelines:traces:receivers: [otlp]processors: [batch]exporters: [logging]---

apiVersion: v1

kind: Service

metadata:name: otel-collectorlabels:app: opentelemetry

spec:type: NodePortports:- port: 4317targetPort: 4317nodePort: 30080name: otlp-grpc- port: 8888targetPort: 8888name: metricsselector:component: otel-collector---

apiVersion: apps/v1

kind: Deployment

metadata:name: otel-collectorlabels:app: opentelemetry

spec:replicas: 1selector:matchLabels:component: otel-collectortemplate:metadata:labels:component: otel-collectorspec:tolerations:- key: node-role.kubernetes.io/control-planeoperator: Existseffect: NoSchedulecontainers:- name: otel-collectorimage: otel/opentelemetry-collector:latestports:- containerPort: 4317- containerPort: 8888env:- name: MY_POD_IPvalueFrom:fieldRef:fieldPath: status.podIPvolumeMounts:- name: otel-collector-config-volmountPath: /confvolumes:- configMap:name: otel-collector-confname: otel-collector-config-vol

启动

mkdir /conf

kubectl apply -f otel-collector.yaml

kubectl get -f otel-collector.yaml

删除

kubectl delete -f otel-collector.yaml

使用官方提供示例

kubectl apply -f https://raw.githubusercontent.com/open-telemetry/opentelemetry-collector/main/examples/k8s/otel-config.yaml

根据需要修改文件

otel-config.yaml

---
apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-agent-conf
  labels:
    app: opentelemetry
    component: otel-agent-conf
data:
  otel-agent-config: |
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: ${env:MY_POD_IP}:4317
          http:
            endpoint: ${env:MY_POD_IP}:4318
    exporters:
      otlp:
        endpoint: "otel-collector.default:4317"
        tls:
          insecure: true
        sending_queue:
          num_consumers: 4
          queue_size: 100
        retry_on_failure:
          enabled: true
    processors:
      batch:
      memory_limiter:
        # 80% of maximum memory up to 2G
        limit_mib: 400
        # 25% of limit up to 2G
        spike_limit_mib: 100
        check_interval: 5s
    extensions:
      zpages: {}
    service:
      extensions: [zpages]
      pipelines:
        traces:
          receivers: [otlp]
          processors: [memory_limiter, batch]
          exporters: [otlp]
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: otel-agent
  labels:
    app: opentelemetry
    component: otel-agent
spec:
  selector:
    matchLabels:
      app: opentelemetry
      component: otel-agent
  template:
    metadata:
      labels:
        app: opentelemetry
        component: otel-agent
    spec:
      tolerations:
        - key: node-role.kubernetes.io/control-plane
          operator: Exists
          effect: NoSchedule
      containers:
        - command:
            - "/otelcol"
            - "--config=/conf/otel-agent-config.yaml"
          image: otel/opentelemetry-collector:0.94.0
          name: otel-agent
          resources:
            limits:
              cpu: 500m
              memory: 500Mi
            requests:
              cpu: 100m
              memory: 100Mi
          ports:
            - containerPort: 55679 # ZPages endpoint.
            - containerPort: 4317 # Default OpenTelemetry receiver port.
            - containerPort: 8888 # Metrics.
          env:
            - name: MY_POD_IP
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: status.podIP
            - name: GOMEMLIMIT
              value: 400MiB
          volumeMounts:
            - name: otel-agent-config-vol
              mountPath: /conf
      volumes:
        - configMap:
            name: otel-agent-conf
            items:
              - key: otel-agent-config
                path: otel-agent-config.yaml
          name: otel-agent-config-vol
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-collector-conf
  labels:
    app: opentelemetry
    component: otel-collector-conf
data:
  otel-collector-config: |
    receivers:
      otlp:
        protocols:
          grpc:
            endpoint: ${env:MY_POD_IP}:4317
          http:
            endpoint: ${env:MY_POD_IP}:4318
    processors:
      batch:
      memory_limiter:
        # 80% of maximum memory up to 2G
        limit_mib: 1500
        # 25% of limit up to 2G
        spike_limit_mib: 512
        check_interval: 5s
    extensions:
      zpages: {}
    exporters:
      otlp:
        endpoint: "http://someotlp.target.com:4317" # Replace with a real endpoint.
        tls:
          insecure: true
      zipkin:
        endpoint: "http://10.1.1.10:9411/api/v2/spans"
        format: "proto"
    service:
      extensions: [zpages]
      pipelines:
        traces/1:
          receivers: [otlp]
          processors: [memory_limiter, batch]
          exporters: [zipkin]
---
apiVersion: v1
kind: Service
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
    component: otel-collector
spec:
  ports:
    - name: otlp-grpc # Default endpoint for OpenTelemetry gRPC receiver.
      port: 4317
      protocol: TCP
      targetPort: 4317
    - name: otlp-http # Default endpoint for OpenTelemetry HTTP receiver.
      port: 4318
      protocol: TCP
      targetPort: 4318
    - name: metrics # Default endpoint for querying metrics.
      port: 8888
  selector:
    component: otel-collector
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: otel-collector
  labels:
    app: opentelemetry
    component: otel-collector
spec:
  selector:
    matchLabels:
      app: opentelemetry
      component: otel-collector
  minReadySeconds: 5
  progressDeadlineSeconds: 120
  replicas: 1 #TODO - adjust this to your own requirements
  template:
    metadata:
      labels:
        app: opentelemetry
        component: otel-collector
    spec:
      tolerations:
        - key: node-role.kubernetes.io/control-plane
          operator: Exists
          effect: NoSchedule
      containers:
        - command:
            - "/otelcol"
            - "--config=/conf/otel-collector-config.yaml"
          image: otel/opentelemetry-collector:0.94.0
          name: otel-collector
          resources:
            limits:
              cpu: 1
              memory: 2Gi
            requests:
              cpu: 200m
              memory: 400Mi
          ports:
            - containerPort: 55679 # Default endpoint for ZPages.
            - containerPort: 4317 # Default endpoint for OpenTelemetry receiver.
            - containerPort: 14250 # Default endpoint for Jaeger gRPC receiver.
            - containerPort: 14268 # Default endpoint for Jaeger HTTP receiver.
            - containerPort: 9411 # Default endpoint for Zipkin receiver.
            - containerPort: 8888 # Default endpoint for querying metrics.
          env:
            - name: MY_POD_IP
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: status.podIP
            - name: GOMEMLIMIT
              value: 1600MiB
          volumeMounts:
            - name: otel-collector-config-vol
              mountPath: /conf
            # - name: otel-collector-secrets
            #   mountPath: /secrets
      volumes:
        - configMap:
            name: otel-collector-conf
            items:
              - key: otel-collector-config
                path: otel-collector-config.yaml
          name: otel-collector-config-vol
        # - secret:
        #     name: otel-collector-secrets
        #     items:
        #       - key: cert.pem
        #         path: cert.pem
        #       - key: key.pem
        #         path: key.pem
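
To check the pipeline end to end without instrumenting an application, one option is to post a single hand-built span to an OTLP/HTTP receiver. The sketch below assumes `curl` is available and that the receiver is reachable from where the script runs. The function names, the service name, and the fixed trace and span IDs are arbitrary values chosen for illustration; `/v1/traces` is the default OTLP/HTTP path for traces.

```shell
# Hypothetical helpers for a manual smoke test; not part of the official example.

# Print a minimal OTLP/JSON trace payload containing one span.
# Arguments: service name, start time and end time in Unix nanoseconds.
otlp_test_payload() {
    cat <<EOF
{"resourceSpans":[{"resource":{"attributes":[{"key":"service.name","value":{"stringValue":"$1"}}]},"scopeSpans":[{"scope":{"name":"manual-test"},"spans":[{"traceId":"5b8efff798038103d269b633813fc60c","spanId":"eee19b7ec3c1b174","name":"test-span","kind":1,"startTimeUnixNano":"$2","endTimeUnixNano":"$3"}]}]}]}
EOF
}

# Send one span that lasts one second, starting now.
# Argument: base URL of an OTLP/HTTP receiver, e.g. http://<address>:4318
send_test_span() {
    endpoint=$1
    now=$(date +%s)
    otlp_test_payload "curl-test" "${now}000000000" "$((now + 1))000000000" |
        curl -sS -X POST "$endpoint/v1/traces" \
            -H 'Content-Type: application/json' \
            --data-binary @-
}
```

Run from a cluster node, `send_test_span "http://$(kubectl get service otel-collector -o jsonpath='{.spec.clusterIP}'):4318"` targets the collector Service defined above. With the `traces/1` pipeline shown, the span should then be exported to the configured Zipkin endpoint, provided that endpoint is reachable.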

Deploy DeepFlow to monitor a single k8s cluster

Official documentation

Official demo

Install helm

snap install helm --classic

Set up persistent volumes (PV)

kubectl apply -f https://openebs.github.io/charts/openebs-operator.yaml

## config default storage class

kubectl patch storageclass openebs-hostpath -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

Deploy DeepFlow

helm repo add deepflow https://deepflowio.github.io/deepflow

helm repo update deepflow # use `helm repo update` when helm < 3.7.0

helm install deepflow -n deepflow deepflow/deepflow --create-namespace

# Output:

NAME: deepflow

LAST DEPLOYED: Tue May 14 14:13:50 2024

NAMESPACE: deepflow

STATUS: deployed

REVISION: 1

NOTES:

██████╗ ███████╗███████╗██████╗ ███████╗██╗ ██████╗ ██╗ ██╗

██╔══██╗██╔════╝██╔════╝██╔══██╗██╔════╝██║ ██╔═══██╗██║ ██║

██║ ██║█████╗ █████╗ ██████╔╝█████╗ ██║ ██║ ██║██║ █╗ ██║

██║ ██║██╔══╝ ██╔══╝ ██╔═══╝ ██╔══╝ ██║ ██║ ██║██║███╗██║

██████╔╝███████╗███████╗██║ ██║ ███████╗╚██████╔╝╚███╔███╔╝

╚═════╝ ╚══════╝╚══════╝╚═╝ ╚═╝ ╚══════╝ ╚═════╝ ╚══╝╚══╝

An automated observability platform for cloud-native developers.

# deepflow-agent Port for receiving trace, metrics, and log

deepflow-agent service: deepflow-agent.deepflow

deepflow-agent Host listening port: 38086

# Get the Grafana URL to visit by running these commands in the same shell

NODE_PORT=$(kubectl get --namespace deepflow -o jsonpath="{.spec.ports[0].nodePort}" services deepflow-grafana)

NODE_IP=$(kubectl get nodes -o jsonpath="{.items[0].status.addresses[0].address}")

echo -e "Grafana URL: http://$NODE_IP:$NODE_PORT \nGrafana auth: admin:deepflow"

Install deepflow-ctl on the node

curl -o /usr/bin/deepflow-ctl https://deepflow-ce.oss-cn-beijing.aliyuncs.com/bin/ctl/stable/linux/$(arch | sed 's|x86_64|amd64|' | sed 's|aarch64|arm64|')/deepflow-ctl

chmod a+x /usr/bin/deepflow-ctl
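
The download URL above is derived from the machine architecture by rewriting `x86_64` to `amd64` and `aarch64` to `arm64`. The sketch below makes that mapping explicit. The function names `ctl_arch` and `ctl_url` are invented for illustration, and unlike the `sed` pipeline, which passes unknown values through unchanged, this version rejects any architecture it does not recognize.

```shell
# Hypothetical helpers that spell out the URL construction used above.

# Map a machine name (as printed by `arch` or `uname -m`) to the directory
# name used in the download URL. Unknown values are rejected.
ctl_arch() {
    case "$1" in
        x86_64)  echo amd64 ;;
        aarch64) echo arm64 ;;
        *) echo "unsupported architecture: $1" >&2; return 1 ;;
    esac
}

# Print the deepflow-ctl download URL for the given machine name.
ctl_url() {
    dir=$(ctl_arch "$1") || return 1
    echo "https://deepflow-ce.oss-cn-beijing.aliyuncs.com/bin/ctl/stable/linux/$dir/deepflow-ctl"
}
```

It can be used as `curl -fL -o /usr/bin/deepflow-ctl "$(ctl_url "$(uname -m)")"`. After installation, `deepflow-ctl agent list` should show the agents that have registered with the server.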

Access the Grafana page

NODE_PORT=$(kubectl get --namespace deepflow -o jsonpath="{.spec.ports[0].nodePort}" services deepflow-grafana)

NODE_IP=$(kubectl get nodes -o jsonpath="{.items[0].status.addresses[0].address}")

echo -e "Grafana URL: http://$NODE_IP:$NODE_PORT \nGrafana auth: admin:deepflow"

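FAQ

How to expose a Pod's port

In Kubernetes, to make a Pod's port reachable by users or services outside the cluster, you normally create a Service. Service comes in several types that cover different use cases and network requirements, and Ingress and port forwarding are further options. Common approaches:

- NodePort: This Service type opens a specified port (by default in the range 30000-32767) on every node in the cluster, and any traffic sent to that port is forwarded to the matching Pod. It is the simplest approach, but it occupies a port on each node.

Example YAML configuration:

apiVersion: v1
kind: Service
metadata:
  name: my-service
spec:
  type: NodePort
  selector:
    app: MyApp
  ports:
    - protocol: TCP
      port: 80
      targetPort: 9376
      nodePort: 30007

- LoadBalancer: This Service type is usually backed by a cloud provider, which assigns the Service an external IP address; all traffic sent to that address is forwarded to the Pod. It suits services that must be reachable directly from the internet.

Example YAML configuration:

apiVersion: v1
kind: Service
metadata:
  name: my-service
spec:
  selector:
    app: MyApp
  ports:
    - protocol: TCP
      port: 80
      targetPort: 9376
  type: LoadBalancer

- Ingress: Ingress is an API object that defines rules for external access to services in the cluster. It can provide load balancing, SSL termination, and name-based virtual hosting. It is a more advanced way to expose services and lets you manage access to multiple services through a single entry point.

Example YAML configuration:

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: example-ingress
spec:
  rules:
    - host: www.example.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: my-service
                port:
                  number: 80

- Port Forwarding: If you only need temporary access to a Pod from your local machine, use the kubectl port-forward command to forward a local port to a port in the Pod. Example command:

kubectl port-forward pods/my-pod 8080:80

Choose among these methods according to your specific needs and environment.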
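
As a sketch of the NodePort option, the helper below renders a Service manifest from a few parameters and refuses a nodePort outside the default 30000-32767 range. The function name `render_nodeport_service` and its argument order are invented for illustration, and the range check assumes the cluster has not changed the API server's `--service-node-port-range` setting.

```shell
# Hypothetical helper: print a NodePort Service manifest on stdout.
# Arguments: service name, value of the Pod's "app" label, service port,
# target port on the Pod, and the node port to open.
render_nodeport_service() {
    name=$1 app=$2 port=$3 target_port=$4 node_port=$5
    # Reject ports outside the default NodePort range.
    if [ "$node_port" -lt 30000 ] || [ "$node_port" -gt 32767 ]; then
        echo "nodePort $node_port is outside 30000-32767" >&2
        return 1
    fi
    cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: $name
spec:
  type: NodePort
  selector:
    app: $app
  ports:
    - protocol: TCP
      port: $port
      targetPort: $target_port
      nodePort: $node_port
EOF
}
```

For example, `render_nodeport_service my-service MyApp 80 9376 30007 | kubectl apply -f -` would create the same Service as the NodePort example in the list above.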

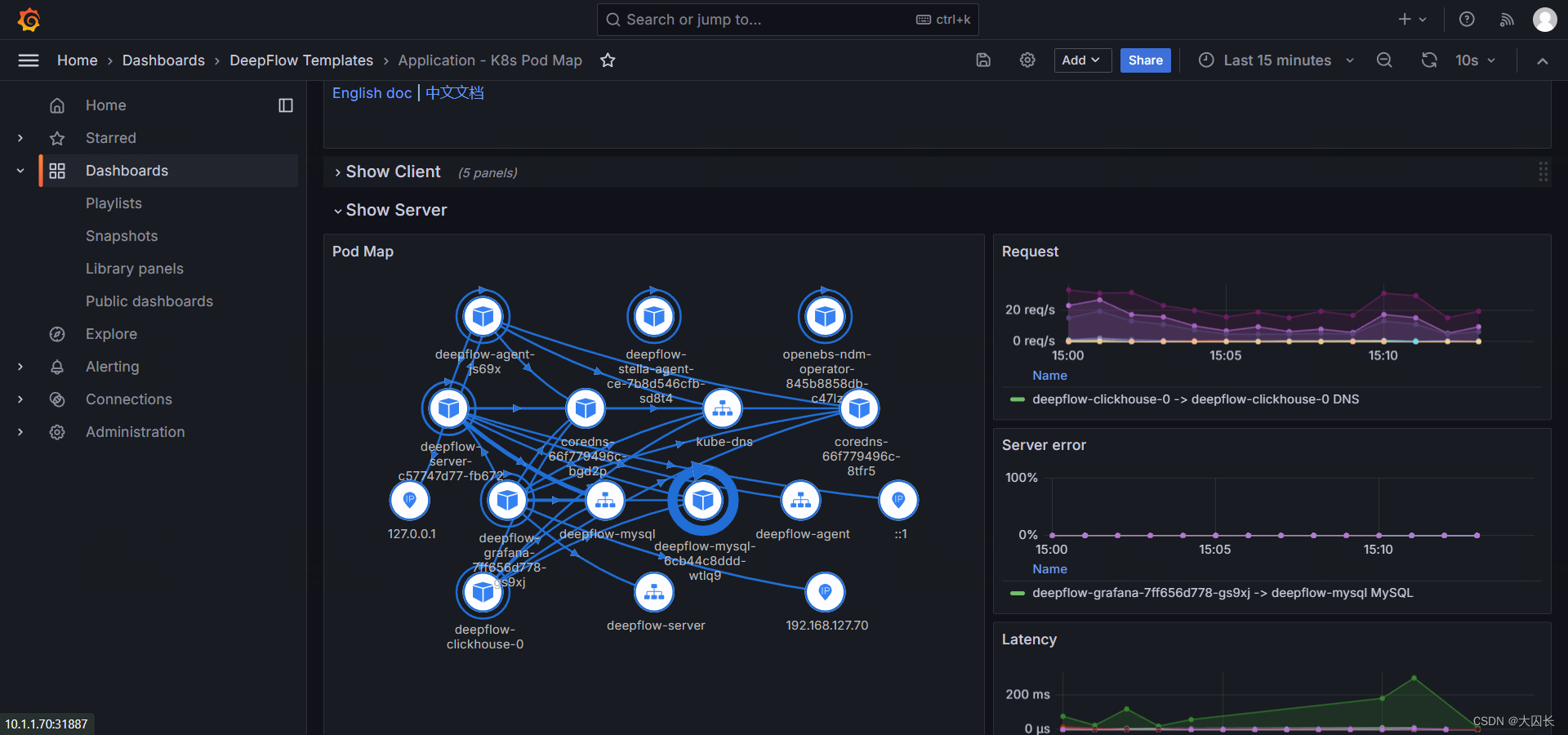

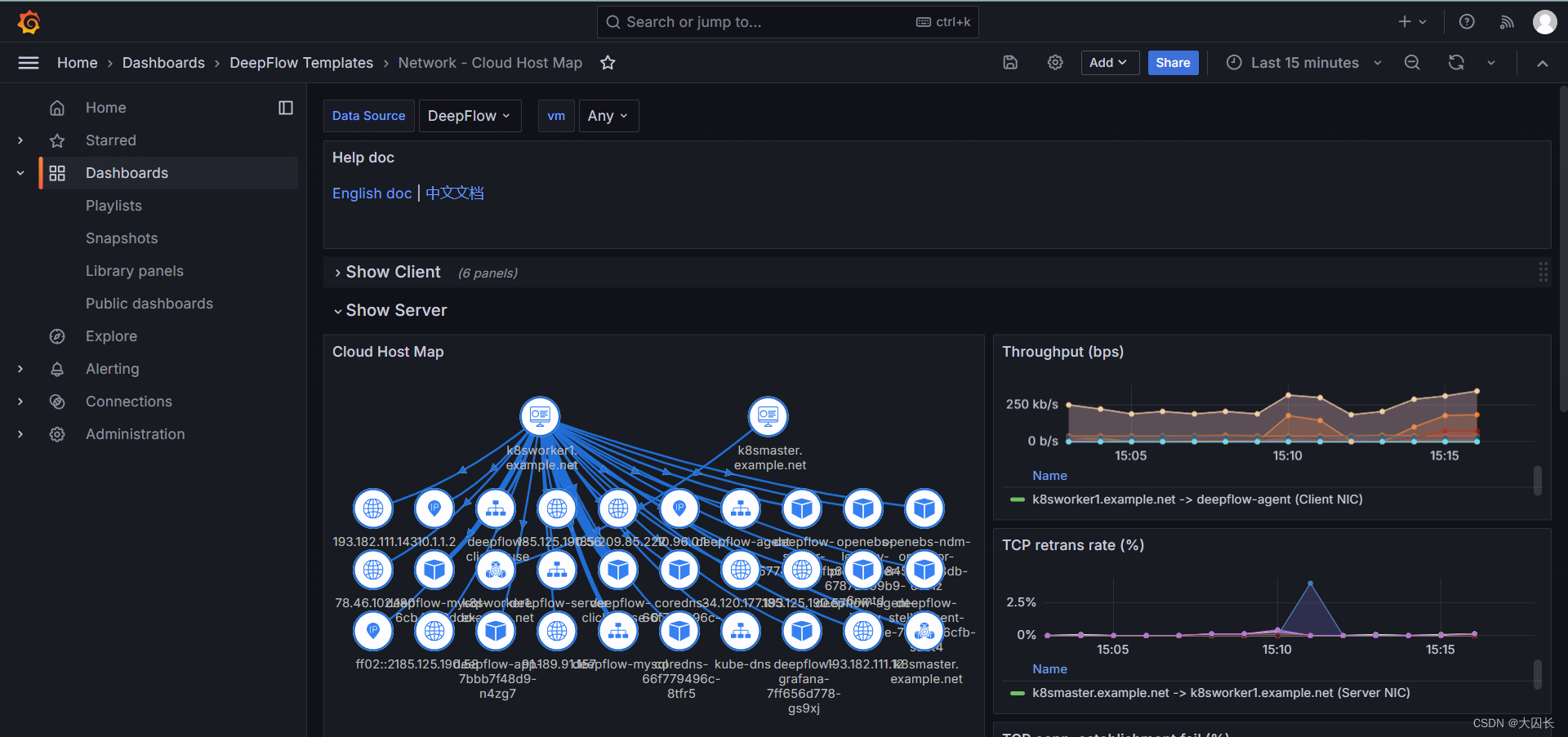

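Is DeepFlow an observability platform?

Yes. DeepFlow is an observability platform developed by Yunshan Networks that aims to provide deep observability for complex cloud infrastructure and cloud-native applications. Built on eBPF, it collects observability signals such as application performance metrics, distributed traces, and continuous profiling data with zero intrusion, and it uses smart tagging to correlate all signals across the full stack and to store and query them efficiently.

DeepFlow provides the following core features:

- Universal map: uses eBPF to draw a service map of the production environment with zero intrusion.

- Distributed tracing: implements distributed tracing with zero intrusion, based on eBPF and Wasm.

- Continuous profiling: uses eBPF to collect profiling data from production processes with low overhead and zero intrusion.

- Storage optimization: greatly reduces storage cost through the SmartEncoding mechanism.

- Seamless integration: can serve as a storage backend for Prometheus, OpenTelemetry, SkyWalking, and Pyroscope, and also exposes SQL, PromQL, and OTLP data interfaces.

DeepFlow aims to address the intrusiveness and observability blind spots of traditional APM solutions by offering a more efficient, automated approach to observability.

Does DeepFlow automatically monitor Pod applications in k8s?

Yes, DeepFlow can automatically monitor Pod applications in Kubernetes, using the same eBPF-based, zero-intrusion collection and smart tagging described above.

The features that matter most for automatic Pod monitoring in Kubernetes are:

- Zero-intrusion collection: DeepFlow uses eBPF to collect application performance metrics, distributed tracing data, and continuous profiling information automatically, without any change to application code.

- Full-stack correlation: Through smart tagging, DeepFlow associates all observability signals (metrics, logs, traces) with the components of the stack they belong to, which helps you understand the overall performance of an application.

- Automatic tagging of K8s resources: DeepFlow automatically tags Kubernetes resources (Pod, Service, Deployment, and so on), so you can monitor by resource dimension.

- Continuous profiling: DeepFlow collects profiling data from production processes with low overhead, which helps you analyze application performance bottlenecks in depth.

In short, DeepFlow is an observability tool that can automatically monitor applications running in Kubernetes and help you understand and optimize their performance.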
