Changing the IP of a k8s master node

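Background

The colleague who built the cluster did not plan the network, so one master ended up with the IP 192.168.7.173, far from the other cluster nodes at 192.168.0.x or 192.168.1.x. The network now needs to be reorganized so that management tasks such as binding and rate limiting are easier to configure.

The cluster has 3 master nodes. This post was written after the fact.

Note: it was not as simple as expected.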

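Plan

- Remove the master1 member from etcd

- Remove the master1 node with kubectl

- Change the IP address of master1

  The masters currently have no reverse proxy such as haproxy or nginx in front of them, so some parts of the cluster are configured with master1's IP, or master1's IP:6443, as the entry point to the cluster. Almost all of the pitfalls are here; they are dealt with as they come up.

- Run kubeadm reset on master1 and clean up the CNI plugin data

- Update the hosts file on all nodes

- Run kubeadm join to add the node back to the cluster

- Verify each component and troubleshoot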
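The hosts-file step in the plan can be sketched as follows. This is only a minimal sketch, assuming each hosts entry starts with the IP at the beginning of the line; the function name `replace_host_ip` is hypothetical. The old and new addresses in the usage comment (192.168.7.173 and 192.168.1.15) are the ones that appear in this post's logs.

```shell
#!/usr/bin/env bash
# Sketch only: swap an old IP for a new one in a hosts-style file.
# A backup copy is kept next to the file as <file>.bak.
replace_host_ip() {
  local old_ip="$1" new_ip="$2" hosts_file="$3"
  # Escape the dots so that "." matches a literal dot in the regex
  local old_re="${old_ip//./\\.}"
  cp "$hosts_file" "${hosts_file}.bak"
  # Only rewrite entries where the IP starts the line and is followed by whitespace
  sed -E "s/^${old_re}([[:space:]])/${new_ip}\1/" "${hosts_file}.bak" > "$hosts_file"
}

# Hypothetical usage, to be run on every node (not executed here):
#   replace_host_ip 192.168.7.173 192.168.1.15 /etc/hosts
```

Anchoring the match at the start of the line keeps the rewrite from touching a longer address that merely contains the old IP as a substring.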

Implementation

OS version

root@dev-k8s-master01:~# cat /etc/os-release

NAME="Ubuntu"

VERSION="18.04.6 LTS (Bionic Beaver)"

ID=ubuntu

ID_LIKE=debian

PRETTY_NAME="Ubuntu 18.04.6 LTS"

VERSION_ID="18.04"

HOME_URL="https://www.ubuntu.com/"

SUPPORT_URL="https://help.ubuntu.com/"

BUG_REPORT_URL="https://bugs.launchpad.net/ubuntu/"

PRIVACY_POLICY_URL="https://www.ubuntu.com/legal/terms-and-policies/privacy-policy"

VERSION_CODENAME=bionic

UBUNTU_CODENAME=bionic

Remove master1 from etcd

Use etcdctl to remove the master node whose IP is being changed from the etcd cluster.

export ETCDCTL_API=3

# List the cluster members

etcdctl --endpoints=https://192.168.7.173:2379,https://192.168.1.17:2379,https://192.168.1.38:2379 --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/server.crt --key=/etc/kubernetes/pki/etcd/server.key member list --write-out=table

# Note: member list does not show which member is the current etcd leader

# Show the etcd leader

etcdctl --endpoints=https://192.168.7.173:2379,https://192.168.1.17:2379,https://192.168.1.38:2379 --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/server.crt --key=/etc/kubernetes/pki/etcd/server.key endpoint status --write-out=table

The output is as follows:

root@dev-k8s-master03:~# etcdctl --endpoints=https://192.168.1.15:2379,https://192.168.1.17:2379,https://192.168.1.38:2379 --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/server.crt --key=/etc/kubernetes/pki/etcd/server.key member list --write-out=table

+------------------+---------+------------------+---------------------------+---------------------------+------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |

+------------------+---------+------------------+---------------------------+---------------------------+------------+

| 802c824d9f96584b | started | dev-k8s-master03 | https://192.168.1.38:2380 | https://192.168.1.38:2379 | false |

| c3e9ff62e7bd6a70 | started | dev-k8s-master01 | https://192.168.1.15:2380 | https://192.168.1.15:2379 | false |

| ef1d4aa461844a8a | started | dev-k8s-master02 | https://192.168.1.17:2380 | https://192.168.1.17:2379 | false |

+------------------+---------+------------------+---------------------------+---------------------------+------------+
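The ID column of the member list is what a removal needs, but this post does not show the removal command itself. A minimal sketch, assuming the default (simple) output format of `etcdctl member list`, with comma-separated fields; the helper name `member_id_by_name` is hypothetical, and the certificate paths in the usage comment are the ones used in the commands above.

```shell
#!/usr/bin/env bash
# Sketch only: look up an etcd member ID by node name.
# Assumes the simple output of `etcdctl member list`, one member per line:
#   <id>, <status>, <name>, <peer addrs>, <client addrs>, <is learner>

# Print the ID of the member whose NAME field equals $1 (member list on stdin).
member_id_by_name() {
  awk -F', ' -v name="$1" '$3 == name { print $1 }'
}

# Hypothetical usage on a healthy master (not executed here):
#   export ETCDCTL_API=3
#   flags="--endpoints=https://192.168.1.38:2379 \
#     --cacert=/etc/kubernetes/pki/etcd/ca.crt \
#     --cert=/etc/kubernetes/pki/etcd/server.crt \
#     --key=/etc/kubernetes/pki/etcd/server.key"
#   id=$(etcdctl $flags member list | member_id_by_name dev-k8s-master01)
#   etcdctl $flags member remove "$id"
```

Pointing etcdctl at an endpoint other than the member being removed avoids relying on the node that is about to leave the cluster.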

root@dev-k8s-master03:~# etcdctl --endpoints=https://192.168.1.15:2379,https://192.168.1.17:2379,https://192.168.1.38:2379 --cacert=/etc/kubernetes/pki/etcd/ca.crt --cert=/etc/kubernetes/pki/etcd/server.crt --key=/etc/kubernetes/pki/etcd/server.key endpoint status --write-out=table

+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| https://192.168.1.15:2379 | c3e9ff62e7bd6a70 | 3.5.0 | 718 MB | false | false | 235 | 548780443 | 548780443 | |

| https://192.168.1.17:2379 | ef1d4aa461844a8a | 3.5.0 | 718 MB | false | false | 235 | 548780444 | 548780444 | |

| https://192.168.1.38:2379 | 802c824d9f96584b | 3.5.0 | 718 MB | true | false | 235 | 548780444 | 548780444 | |

+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

Remove master1 from the cluster with kubectl

kubectl get node -o wide

kubectl cordon dev-k8s-master01

kubectl delete node dev-k8s-master01

kubeadm reset

root@dev-k8s-master01:~# kubeadm reset

[reset] Reading configuration from the cluster...

[reset] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

W0524 17:45:02.703143 6814 reset.go:101] [reset] Unable to fetch the kubeadm-config ConfigMap from cluster: failed to get config map: Get "https://192.168.7.173:6443/api/v1/namespaces/kube-system/configmaps/kubeadm-config?timeout=10s": dial tcp 192.168.7.173:6443: connect: no route to host

[reset] WARNING: Changes made to this host by 'kubeadm init' or 'kubeadm join' will be reverted.

[reset] Are you sure you want to proceed? [y/N]: y

[preflight] Running pre-flight checks

W0524 17:45:22.610779 6814 removeetcdmember.go:80] [reset] No kubeadm config, using etcd pod spec to get data directory

[reset] Stopping the kubelet service

[reset] Unmounting mounted directories in "/var/lib/kubelet"

[reset] Deleting contents of config directories: [/etc/kubernetes/manifests /etc/kubernetes/pki]

[reset] Deleting files: [/etc/kubernetes/admin.conf /etc/kubernetes/kubelet.conf /etc/kubernetes/bootstrap-kubelet.conf /etc/kubernetes/controller-manager.conf /etc/kubernetes/scheduler.conf]

[reset] Deleting contents of stateful directories: [/var/lib/etcd /var/lib/kubelet /var/lib/dockershim /var/run/kubernetes /var/lib/cni]

The reset process does not clean CNI configuration. To do so, you must remove /etc/cni/net.d

The reset process does not reset or clean up iptables rules or IPVS tables.

If you wish to reset iptables, you must do so manually by using the "iptables" command.

If your cluster was setup to utilize IPVS, run ipvsadm --clear (or similar)

to reset your system's IPVS tables.

The reset process does not clean your kubeconfig files and you must remove them manually.

Please, check the contents of the $HOME/.kube/config file.

Clean up the CNI plugin configuration & iptables rules

root@dev-k8s-master01:~# mv /etc/cni/net.d /tmp/

root@dev-k8s-master01:~# iptables-save > /tmp/iptables.bak

root@dev-k8s-master01:~# iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -t raw -F && iptables -t security -F && iptables -X && su

# Verify

iptables -vnL

Generate the key & rejoin the cluster
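This post does not show how the token and certificate key for the join command were produced. A minimal sketch of one common way, assuming it is run on a master that is still healthy: `kubeadm token create --print-join-command` prints a worker join command, and `kubeadm init phase upload-certs --upload-certs` re-uploads the control-plane certificates and prints a certificate key (assumed here to be the last line of its output). The helper name `control_plane_join_cmd` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch only: assemble a control-plane join command from its two parts.
#   $1: worker join command, e.g. the output of
#         kubeadm token create --print-join-command
#   $2: certificate key, e.g. the last line printed by
#         kubeadm init phase upload-certs --upload-certs
control_plane_join_cmd() {
  local join_cmd="$1" cert_key="$2"
  printf '%s --control-plane --certificate-key %s\n' "$join_cmd" "$cert_key"
}

# Hypothetical usage on a healthy master (not executed here):
#   join_cmd=$(kubeadm token create --print-join-command)
#   cert_key=$(kubeadm init phase upload-certs --upload-certs | tail -n 1)
#   control_plane_join_cmd "$join_cmd" "$cert_key"
```

The printed command is then run on the node being re-added, as in the session that follows.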

root@dev-k8s-master01:~# kubeadm join 192.168.1.38:6443 --token ngq4b9.vylcwrghfiayv8au --discovery-token-ca-cert-hash sha256:b4c2xxxxx2ab6cf397255ff13c179e --control-plane --certificate-key e2d52bxxxab84edd --v=5

I0524 17:57:55.749888 10646 join.go:405] [preflight] found NodeName empty; using OS hostname as NodeName

I0524 17:57:55.749934 10646 join.go:409] [preflight] found advertiseAddress empty; using default interface's IP address as advertiseAddress

I0524 17:57:55.749974 10646 initconfiguration.go:116] detected and using CRI socket: /var/run/dockershim.sock

I0524 17:57:55.750479 10646 interface.go:431] Looking for default routes with IPv4 addresses

I0524 17:57:55.750548 10646 interface.go:436] Default route transits interface "ens18"

I0524 17:57:55.750709 10646 interface.go:208] Interface ens18 is up

I0524 17:57:55.750784 10646 interface.go:256] Interface "ens18" has 2 addresses :[192.168.1.15/21 fe80::68bf:2bff:feee:6c6e/64].

I0524 17:57:55.750809 10646 interface.go:223] Checking addr 192.168.1.15/21.

I0524 17:57:55.750822 10646 interface.go:230] IP found 192.168.1.15

I0524 17:57:55.750851 10646 interface.go:262] Found valid IPv4 address 192.168.1.15 for interface "ens18".

I0524 17:57:55.750871 10646 interface.go:442] Found active IP 192.168.1.15

[preflight] Running pre-flight checks

I0524 17:57:55.750986 10646 preflight.go:92] [preflight] Running general checks

I0524 17:57:55.751036 10646 checks.go:245] validating the existence and emptiness of directory /etc/kubernetes/manifests

I0524 17:57:55.751096 10646 checks.go:282] validating the existence of file /etc/kubernetes/kubelet.conf

I0524 17:57:55.751107 10646 checks.go:282] validating the existence of file /etc/kubernetes/bootstrap-kubelet.conf

I0524 17:57:55.751119 10646 checks.go:106] validating the container runtime

I0524 17:57:55.812507 10646 checks.go:132] validating if the "docker" service is enabled and active

I0524 17:57:55.831211 10646 checks.go:331] validating the contents of file /proc/sys/net/bridge/bridge-nf-call-iptables

I0524 17:57:55.831268 10646 checks.go:331] validating the contents of file /proc/sys/net/ipv4/ip_forward

I0524 17:57:55.831297 10646 checks.go:649] validating whether swap is enabled or not

I0524 17:57:55.831322 10646 checks.go:372] validating the presence of executable conntrack

I0524 17:57:55.831337 10646 checks.go:372] validating the presence of executable ip

I0524 17:57:55.831351 10646 checks.go:372] validating the presence of executable iptables

I0524 17:57:55.831368 10646 checks.go:372] validating the presence of executable mount

I0524 17:57:55.831382 10646 checks.go:372] validating the presence of executable nsenter

I0524 17:57:55.831392 10646 checks.go:372] validating the presence of executable ebtables

I0524 17:57:55.831403 10646 checks.go:372] validating the presence of executable ethtool

I0524 17:57:55.831419 10646 checks.go:372] validating the presence of executable socat

I0524 17:57:55.831430 10646 checks.go:372] validating the presence of executable tc

I0524 17:57:55.831442 10646 checks.go:372] validating the presence of executable touch

I0524 17:57:55.831455 10646 checks.go:520] running all checks

I0524 17:57:55.900002 10646 checks.go:403] checking whether the given node name is valid and reachable using net.LookupHost

I0524 17:57:55.900158 10646 checks.go:618] validating kubelet version

I0524 17:57:55.953966 10646 checks.go:132] validating if the "kubelet" service is enabled and active

I0524 17:57:55.970602 10646 checks.go:205] validating availability of port 10250

I0524 17:57:55.970805 10646 checks.go:432] validating if the connectivity type is via proxy or direct

I0524 17:57:55.970857 10646 join.go:475] [preflight] Discovering cluster-info

I0524 17:57:55.970891 10646 token.go:80] [discovery] Created cluster-info discovery client, requesting info from "192.168.1.38:6443"

I0524 17:57:55.978324 10646 token.go:118] [discovery] Requesting info from "192.168.1.38:6443" again to validate TLS against the pinned public key

I0524 17:57:55.983254 10646 token.go:135] [discovery] Cluster info signature and contents are valid and TLS certificate validates against pinned roots, will use API Server "192.168.1.38:6443"

I0524 17:57:55.983268 10646 discovery.go:52] [discovery] Using provided TLSBootstrapToken as authentication credentials for the join process

I0524 17:57:55.983276 10646 join.go:489] [preflight] Fetching init configuration

I0524 17:57:55.983281 10646 join.go:534] [preflight] Retrieving KubeConfig objects

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

I0524 17:57:55.991118 10646 interface.go:431] Looking for default routes with IPv4 addresses

I0524 17:57:55.991128 10646 interface.go:436] Default route transits interface "ens18"

I0524 17:57:55.991236 10646 interface.go:208] Interface ens18 is up

I0524 17:57:55.991269 10646 interface.go:256] Interface "ens18" has 2 addresses :[192.168.1.15/21 fe80::68bf:2bff:feee:6c6e/64].

I0524 17:57:55.991284 10646 interface.go:223] Checking addr 192.168.1.15/21.

I0524 17:57:55.991288 10646 interface.go:230] IP found 192.168.1.15

I0524 17:57:55.991292 10646 interface.go:262] Found valid IPv4 address 192.168.1.15 for interface "ens18".

I0524 17:57:55.991295 10646 interface.go:442] Found active IP 192.168.1.15

I0524 17:57:55.994214 10646 preflight.go:103] [preflight] Running configuration dependant checks

[preflight] Running pre-flight checks before initializing the new control plane instance

I0524 17:57:55.994260 10646 checks.go:577] validating Kubernetes and kubeadm version

I0524 17:57:55.994304 10646 checks.go:170] validating if the firewall is enabled and active

I0524 17:57:56.001072 10646 checks.go:205] validating availability of port 6443

I0524 17:57:56.001119 10646 checks.go:205] validating availability of port 10259

I0524 17:57:56.001134 10646 checks.go:205] validating availability of port 10257

I0524 17:57:56.001149 10646 checks.go:282] validating the existence of file /etc/kubernetes/manifests/kube-apiserver.yaml

I0524 17:57:56.001162 10646 checks.go:282] validating the existence of file /etc/kubernetes/manifests/kube-controller-manager.yaml

I0524 17:57:56.001170 10646 checks.go:282] validating the existence of file /etc/kubernetes/manifests/kube-scheduler.yaml

I0524 17:57:56.001174 10646 checks.go:282] validating the existence of file /etc/kubernetes/manifests/etcd.yaml

I0524 17:57:56.001178 10646 checks.go:432] validating if the connectivity type is via proxy or direct

I0524 17:57:56.001195 10646 checks.go:471] validating http connectivity to first IP address in the CIDR

I0524 17:57:56.001208 10646 checks.go:471] validating http connectivity to first IP address in the CIDR

I0524 17:57:56.001217 10646 checks.go:205] validating availability of port 2379

I0524 17:57:56.001235 10646 checks.go:205] validating availability of port 2380

I0524 17:57:56.001247 10646 checks.go:245] validating the existence and emptiness of directory /var/lib/etcd

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

I0524 17:57:56.001349 10646 checks.go:838] using image pull policy: IfNotPresent

I0524 17:57:56.018435 10646 checks.go:847] image exists: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.22.4

I0524 17:57:56.033614 10646 checks.go:847] image exists: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.22.4

I0524 17:57:56.049090 10646 checks.go:847] image exists: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.22.4

I0524 17:57:56.064531 10646 checks.go:847] image exists: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.22.4

I0524 17:57:56.082128 10646 checks.go:847] image exists: registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.5

I0524 17:57:56.097267 10646 checks.go:847] image exists: registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.0-0

I0524 17:57:56.113455 10646 checks.go:847] image exists: registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.4

[download-certs] Downloading the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[certs] Using certificateDir folder "/etc/kubernetes/pki"

I0524 17:57:56.118314 10646 certs.go:46] creating PKI assets

I0524 17:57:56.118376 10646 certs.go:487] validating certificate period for etcd/ca certificate

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [dev-k8s-master01 localhost] and IPs [192.168.1.15 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [dev-k8s-master01 localhost] and IPs [192.168.1.15 127.0.0.1 ::1]

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "etcd/healthcheck-client" certificate and key

I0524 17:57:57.010895 10646 certs.go:487] validating certificate period for ca certificate

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [dev-k8s-master01 dev-k8s-master02 dev-k8s-master03 k8s-dev-master.ex-ai.com kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.1.15 192.168.7.173 192.168.1.38 192.168.0.163 192.168.0.90]

I0524 17:57:57.339536 10646 certs.go:487] validating certificate period for front-proxy-ca certificate

[certs] Generating "front-proxy-client" certificate and key

[certs] Valid certificates and keys now exist in "/etc/kubernetes/pki"

I0524 17:57:57.385838 10646 certs.go:77] creating new public/private key files for signing service account users

[certs] Using the existing "sa" key

[kubeconfig] Generating kubeconfig files

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

I0524 17:57:58.009494 10646 manifests.go:99] [control-plane] getting StaticPodSpecs

I0524 17:57:58.009751 10646 certs.go:487] validating certificate period for CA certificate

I0524 17:57:58.009827 10646 manifests.go:125] [control-plane] adding volume "ca-certs" for component "kube-apiserver"

I0524 17:57:58.009841 10646 manifests.go:125] [control-plane] adding volume "etc-ca-certificates" for component "kube-apiserver"

I0524 17:57:58.009847 10646 manifests.go:125] [control-plane] adding volume "etc-pki" for component "kube-apiserver"

I0524 17:57:58.009854 10646 manifests.go:125] [control-plane] adding volume "k8s-certs" for component "kube-apiserver"

I0524 17:57:58.009862 10646 manifests.go:125] [control-plane] adding volume "usr-local-share-ca-certificates" for component "kube-apiserver"

I0524 17:57:58.009869 10646 manifests.go:125] [control-plane] adding volume "usr-share-ca-certificates" for component "kube-apiserver"

I0524 17:57:58.016124 10646 manifests.go:154] [control-plane] wrote static Pod manifest for component "kube-apiserver" to "/etc/kubernetes/manifests/kube-apiserver.yaml"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

I0524 17:57:58.016145 10646 manifests.go:99] [control-plane] getting StaticPodSpecs

I0524 17:57:58.016322 10646 manifests.go:125] [control-plane] adding volume "ca-certs" for component "kube-controller-manager"

I0524 17:57:58.016335 10646 manifests.go:125] [control-plane] adding volume "etc-ca-certificates" for component "kube-controller-manager"

I0524 17:57:58.016341 10646 manifests.go:125] [control-plane] adding volume "etc-pki" for component "kube-controller-manager"

I0524 17:57:58.016348 10646 manifests.go:125] [control-plane] adding volume "flexvolume-dir" for component "kube-controller-manager"

I0524 17:57:58.016356 10646 manifests.go:125] [control-plane] adding volume "k8s-certs" for component "kube-controller-manager"

I0524 17:57:58.016363 10646 manifests.go:125] [control-plane] adding volume "kubeconfig" for component "kube-controller-manager"

I0524 17:57:58.016370 10646 manifests.go:125] [control-plane] adding volume "usr-local-share-ca-certificates" for component "kube-controller-manager"

I0524 17:57:58.016377 10646 manifests.go:125] [control-plane] adding volume "usr-share-ca-certificates" for component "kube-controller-manager"

I0524 17:57:58.016980 10646 manifests.go:154] [control-plane] wrote static Pod manifest for component "kube-controller-manager" to "/etc/kubernetes/manifests/kube-controller-manager.yaml"

[control-plane] Creating static Pod manifest for "kube-scheduler"

I0524 17:57:58.016998 10646 manifests.go:99] [control-plane] getting StaticPodSpecs

I0524 17:57:58.017171 10646 manifests.go:125] [control-plane] adding volume "kubeconfig" for component "kube-scheduler"

I0524 17:57:58.017507 10646 manifests.go:154] [control-plane] wrote static Pod manifest for component "kube-scheduler" to "/etc/kubernetes/manifests/kube-scheduler.yaml"

[check-etcd] Checking that the etcd cluster is healthy

I0524 17:57:58.018268 10646 local.go:71] [etcd] Checking etcd cluster health

I0524 17:57:58.018282 10646 local.go:74] creating etcd client that connects to etcd pods

I0524 17:57:58.018291 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:02.138320 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:05.187304 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:08.258177 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:11.356253 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:14.366123 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:17.447385 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:20.513218 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:23.606232 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:26.699159 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:29.800693 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:32.813094 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:35.901599 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:38.967241 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:42.054078 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:45.107540 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:48.180650 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:51.291295 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:54.317130 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:58:57.388214 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:00.476226 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:03.569171 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:06.669514 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:09.685159 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:12.757184 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:15.875321 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:18.892172 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:22.030165 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:25.085195 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

I0524 17:59:28.139599 10646 etcd.go:166] retrieving etcd endpoints from "kubeadm.kubernetes.io/etcd.advertise-client-urls" annotation in etcd Pods

Get "https://192.168.7.173:6443/api/v1/namespaces/kube-system/pods?labelSelector=component%3Detcd%2Ctier%3Dcontrol-plane": dial tcp 192.168.7.173:6443: connect: no route to host

could not retrieve the list of etcd endpoints

k8s.io/kubernetes/cmd/kubeadm/app/util/etcd.getRawEtcdEndpointsFromPodAnnotation/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/util/etcd/etcd.go:155

k8s.io/kubernetes/cmd/kubeadm/app/util/etcd.getEtcdEndpointsWithBackoff/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/util/etcd/etcd.go:131

k8s.io/kubernetes/cmd/kubeadm/app/util/etcd.getEtcdEndpoints/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/util/etcd/etcd.go:127

k8s.io/kubernetes/cmd/kubeadm/app/util/etcd.NewFromCluster/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/util/etcd/etcd.go:98

k8s.io/kubernetes/cmd/kubeadm/app/phases/etcd.CheckLocalEtcdClusterStatus/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/phases/etcd/local.go:75

k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/join.runCheckEtcdPhase/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/join/checketcd.go:69

k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow.(*Runner).Run.func1/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow/runner.go:234

k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow.(*Runner).visitAll/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow/runner.go:421

k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow.(*Runner).Run/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow/runner.go:207

k8s.io/kubernetes/cmd/kubeadm/app/cmd.newCmdJoin.func1/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/cmd/join.go:174

k8s.io/kubernetes/vendor/github.com/spf13/cobra.(*Command).execute/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/vendor/github.com/spf13/cobra/command.go:852

k8s.io/kubernetes/vendor/github.com/spf13/cobra.(*Command).ExecuteC/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/vendor/github.com/spf13/cobra/command.go:960

k8s.io/kubernetes/vendor/github.com/spf13/cobra.(*Command).Execute/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/vendor/github.com/spf13/cobra/command.go:897

k8s.io/kubernetes/cmd/kubeadm/app.Run/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/kubeadm.go:50

main.main_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/kubeadm.go:25

runtime.main/usr/local/go/src/runtime/proc.go:225

runtime.goexit/usr/local/go/src/runtime/asm_amd64.s:1371

error execution phase check-etcd

k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow.(*Runner).Run.func1/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow/runner.go:235

k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow.(*Runner).visitAll/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow/runner.go:421

k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow.(*Runner).Run/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/cmd/phases/workflow/runner.go:207

k8s.io/kubernetes/cmd/kubeadm/app/cmd.newCmdJoin.func1/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/cmd/join.go:174

k8s.io/kubernetes/vendor/github.com/spf13/cobra.(*Command).execute/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/vendor/github.com/spf13/cobra/command.go:852

k8s.io/kubernetes/vendor/github.com/spf13/cobra.(*Command).ExecuteC/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/vendor/github.com/spf13/cobra/command.go:960

k8s.io/kubernetes/vendor/github.com/spf13/cobra.(*Command).Execute/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/vendor/github.com/spf13/cobra/command.go:897

k8s.io/kubernetes/cmd/kubeadm/app.Run/go/src/k8s.io/kubernetes/_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/app/kubeadm.go:50

main.main_output/local/go/src/k8s.io/kubernetes/cmd/kubeadm/kubeadm.go:25

runtime.main/usr/local/go/src/runtime/proc.go:225

runtime.goexit/usr/local/go/src/runtime/asm_amd64.s:1371

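Error 1

Get "https://192.168.7.173:6443/api/v1/namespaces/kube-system/pods?labelSelector=component%3Detcd%2Ctier%3Dcontrol-plane": dial tcp 192.168.7.173:6443: connect: no route to host

Fix: kubeadm join is still trying to reach the API server at master1's old address. On a master that is still alive, edit the kubeadm-config ConfigMap in the kube-system namespace and point controlPlaneEndpoint at a reachable master:

root@dev-k8s-master03:~# kubectl -n kube-system edit cm kubeadm-config

controlPlaneEndpoint: 192.168.1.38:6443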
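To confirm which endpoint the cluster currently advertises, before or after the edit, the value can be read back from the ConfigMap. A minimal sketch, assuming the ConfigMap keeps its settings under the `ClusterConfiguration` data key as kubeadm normally writes it; the helper name `control_plane_endpoint` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch only: print the controlPlaneEndpoint value from a kubeadm
# ClusterConfiguration document read on stdin.
control_plane_endpoint() {
  # Strip optional double quotes around the value before printing it
  awk '$1 == "controlPlaneEndpoint:" { gsub(/"/, "", $2); print $2 }'
}

# Hypothetical usage on a healthy master (not executed here):
#   kubectl -n kube-system get cm kubeadm-config \
#     -o jsonpath='{.data.ClusterConfiguration}' | control_plane_endpoint
```

After the fix described above, this would be expected to print 192.168.1.38:6443 rather than the old 192.168.7.173:6443.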

To be continued when I have time.

Reference

https://cloud.tencent.com/developer/article/2008321

相关文章:

k8s更改master节点IP

背景 搭建集群的同事未规划网络,导致其中有一台master ip是192.168.7.173,和其他集群节点的IP192.168.0.x或192.168.1.x相隔太远,现在需要对网络做整改,方便管理配置诸如绑定限速等操作。 master节点是3节点的。此博客属于事后记…...

c++【入门】已知一个圆的半径,求解该圆的面积和周长?

限制 时间限制 : 1 秒 内存限制 : 128 MB 已知一个圆的半径,求解该圆的面积和周长 输入 输入只有一行,只有1个整数。 输出 输出只有两行,一行面积,一行周长。(保留两位小数)。 令pi3.1415926 样例…...

c#通过sqlsugar查询信息并日期排序

c#通过sqlsugar查询信息并日期字段排序 public static List<Sugar_Get_Info_Class> Get_xml_lot_xx(string lot_number){DBContext<Sugar_Get_Info_Class> db_data DBContext<Sugar_Get_Info_Class>.OpDB();Expression<Func<Sugar_Get_Info_Class, b…...

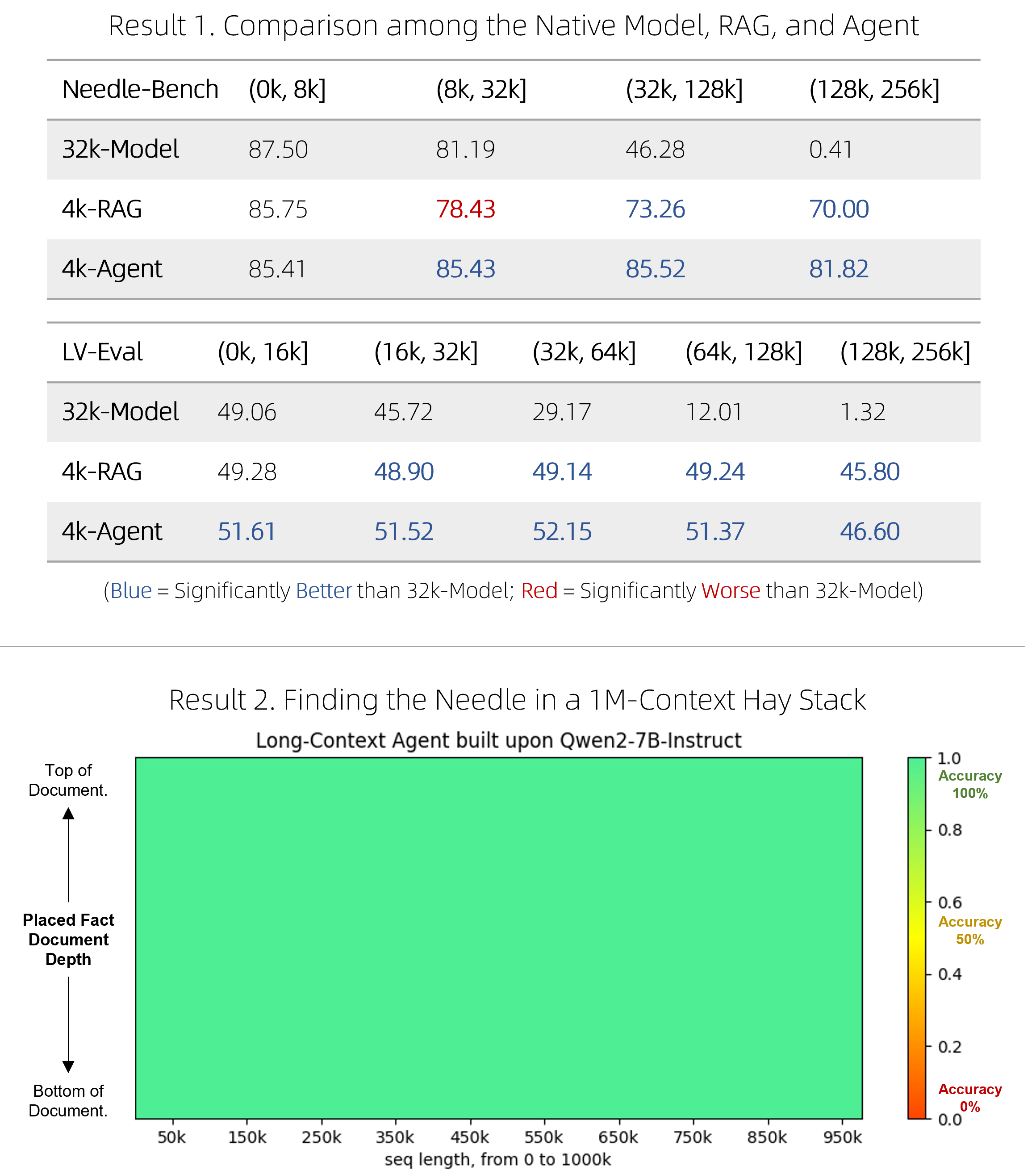

使用 Qwen-Agent 将 8k 上下文记忆扩展到百万量级

节前,我们组织了一场算法岗技术&面试讨论会,邀请了一些互联网大厂朋友、今年参加社招和校招面试的同学。 针对大模型技术趋势、大模型落地项目经验分享、新手如何入门算法岗、该如何准备面试攻略、面试常考点等热门话题进行了深入的讨论。 汇总合集…...

Vyper重入漏洞解析

什么是重入攻击 Reentrancy攻击是以太坊智能合约中最具破坏性的攻击之一。当一个函数对另一个不可信合约进行外部调用时,就会发生重入攻击。然后,不可信合约会递归调用原始函数,试图耗尽资金。 当合约在发送资金之前未能更新其状态时&#…...

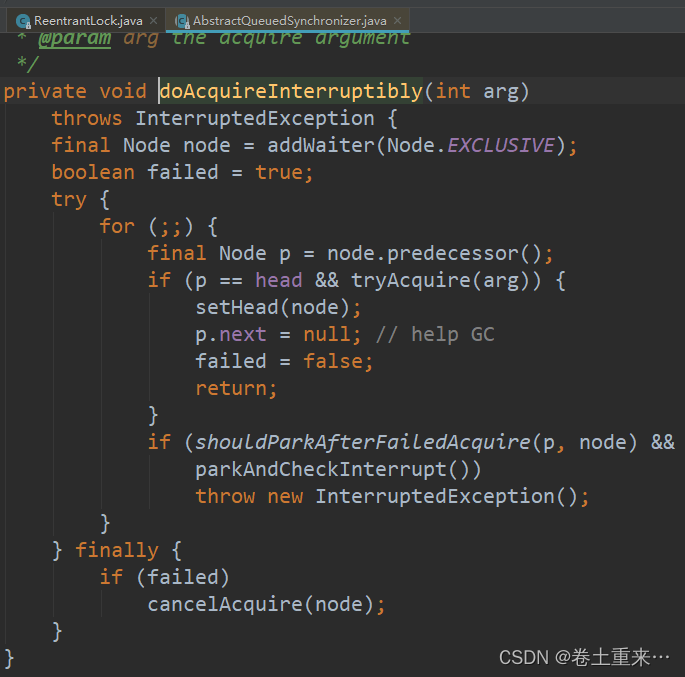

53.ReentrantLock原理

ReentrantLock使用 ReentrantLock 实现了Lock接口, 内置了Sync同步器继承了AbstractQueuedSynchronizer。 Sync是抽象类,有两个实现NonfairSync非公平,FairSync公平。 所以ReentrantLock有公平锁和非公平锁。默认是非公平锁。 public sta…...

“论边缘计算及应用”必过范文,突击2024软考高项论文

论文真题 边缘计算是在靠近物或数据源头的网络边缘侧,融合网络、计算、存储、应用核心能力的分布式开放平台(架构),就近提供边缘智能服务。边缘计算与云计算各有所长,云计算擅长全局性、非实时、长周期的大数据处理与分析,能够在…...

浅谈安全用电管理系统对重要用户的安全管理

1用电安全管理的重要性 随着社会经济的不断发展,电网建设力度的不断加大,供电的可靠性和供电质量日益提高,电网结构也在不断完善。但在电网具备供电的条件下,部分高危和重要电力用户未按规定实现双回路电源线路供电࿱…...

Docker的资源限制

文章目录 一、什么是资源限制1、Docker的资源限制2、内核支持Linux功能3、OOM异常4、调整/设置进程OOM评分和优先级4.1、/proc/PID/oom_score_adj4.2、/proc/PID/oom_adj4.3、/proc/PID/oom_score 二、容器的内存限制1、实现原理2、命令格式及指令参数2.1、命令格式2.2、指令参…...

MongoDB $rename 给字段一次重新命名的机会

学习mongodb,体会mongodb的每一个使用细节,欢迎阅读威赞的文章。这是威赞发布的第58篇mongodb技术文章,欢迎浏览本专栏威赞发布的其他文章。 在日常编写程序过程中,命名错误是经常出现的错误。拼写错误的单词,大小写字…...

OnlyOwner在Solidity中是一个修饰符,TypeError:

目录 OnlyOwner在Solidity中是一个修饰符 TypeError: Data location must be "memory" or "calldata" for parameter in function, but none was given. function AddDOm (address dataOwnermAddress, string dataProduct, string dataNotes) OnlyOwner …...

数据Ant-Design-Vue动态表头并填充

Ant-Design-Vue是一款基于Vue.js的UI组件库,广泛应用于前端开发中。在Ant-Design-Vue中,提供了许多常用的组件,包括表格组件。表格组件可以方便地展示和处理大量的数据。 在实际的开发中,我们经常会遇到需要根据后台返回的数据动…...

验证码案例

目录 前言 一、Hutool工具介绍 1.1 Maven 1.2 介绍 1.3 实现类 二、验证码案例 2.1 需求 2.2 约定前后端交互接口 2.2.1 需求分析 2.2.2 接口定义 2.3 后端生成验证码 2.4 前端接收验证码图片 2.5 后端校验验证码 2.6 前端校验验证码 2.7 后端完整代码 前言…...

python身份证ocr接口功能免费体验、身份证实名认证接口

翔云人工智能API开放平台提供身份证实名认证接口、身份证识别接口,两者的相结合可以实现身份证的快速、精准核验,当用户在进行身份证实名认证操作时,仅需上传身份证照片,证件识别接口即可快速、精准的对证件上的文字信息进行快速提…...

屏幕空间反射技术在AI绘画中的作用

在数字艺术和游戏开发的世界中,真实感渲染一直是追求的圣杯。屏幕空间反射(Screen Space Reflection,SSR)技术作为一种先进的图形处理手段,它通过在屏幕空间内模拟光线的反射来增强场景的真实感和视觉冲击力。随着人工…...

JDK下载安装Java SDK

Android中国开发者官网 Android官网 (VPN翻墙) 通过brew命令 下载OracleJDK(推荐) 手动下载OracleJDK(不推荐) oracle OracleJDK下载页 查找硬件设备是否已存在JDK环境 oracle官网 备注: JetPack JavaDevelopmentKit Java开发的系统SDK OpenJDK 开源免费SDK …...

【ARM Cache 系列文章 1.2 -- Data Cache 和 Unified Cache 的详细介绍】

请阅读【ARM Cache 及 MMU/MPU 系列文章专栏导读】 及【嵌入式开发学习必备专栏】 文章目录 Data Cache and Unified Cache数据缓存 (Data Cache)统一缓存 (Unified Cache)数据缓存与统一缓存的比较小结 Data Cache and Unified Cache 在 ARM架构中,缓存(…...

Debian13将正式切换到基于内存的临时文件系统

以前的内存很小,旅行者一号上的计算机内存只有68KB,现在的内存可以几十G,上百G足够把系统全部装载在内存里运行,获得优异的性能和极速响应体验。 很多小型系统能做到这一点,Linux没有那么激进,不过Debian …...

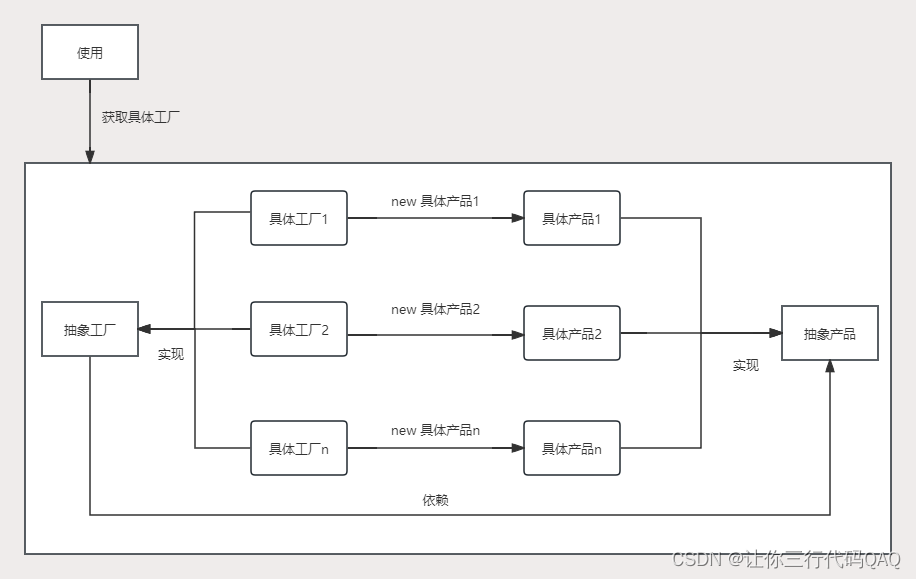

设计模式-工厂方法(创建型)

创建型-工厂方法 简单工厂 将被创建的对象称为“产品”,将生产“产品”对象称为“工厂”;如果创建的产品不多,且不需要生产新的产品,那么只需要一个工厂就可以,这种模式叫做“简单工厂”,它不属于23中设计…...

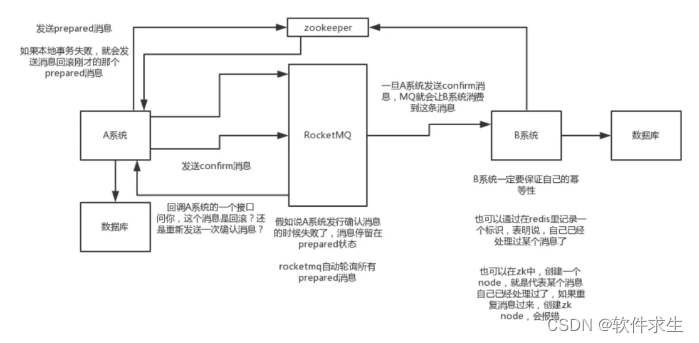

分布式事务大揭秘:使用MQ实现最终一致性

本文作者:小米,一个热爱技术分享的29岁程序员。如果你喜欢我的文章,欢迎关注我的微信公众号“软件求生”,获取更多技术干货! 大家好,我是小米,一个热爱分享技术的29岁程序员,今天我们来聊聊分布式事务中的一种经典实现方式——MQ最终一致性。这是一个在互联网公司中广…...

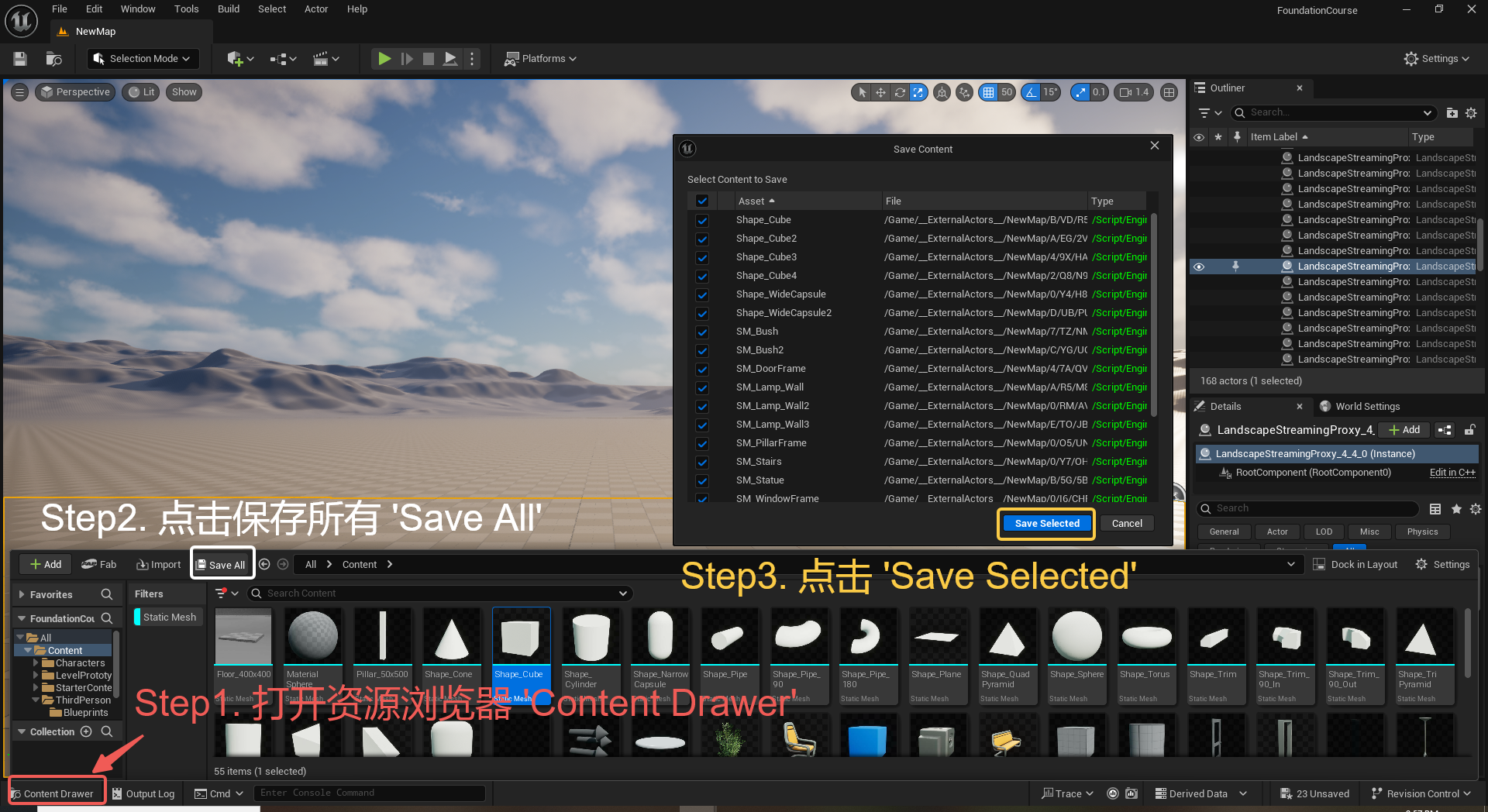

UE5 学习系列(三)创建和移动物体

这篇博客是该系列的第三篇,是在之前两篇博客的基础上展开,主要介绍如何在操作界面中创建和拖动物体,这篇博客跟随的视频链接如下: B 站视频:s03-创建和移动物体 如果你不打算开之前的博客并且对UE5 比较熟的话按照以…...

江苏艾立泰跨国资源接力:废料变黄金的绿色供应链革命

在华东塑料包装行业面临限塑令深度调整的背景下,江苏艾立泰以一场跨国资源接力的创新实践,重新定义了绿色供应链的边界。 跨国回收网络:废料变黄金的全球棋局 艾立泰在欧洲、东南亚建立再生塑料回收点,将海外废弃包装箱通过标准…...

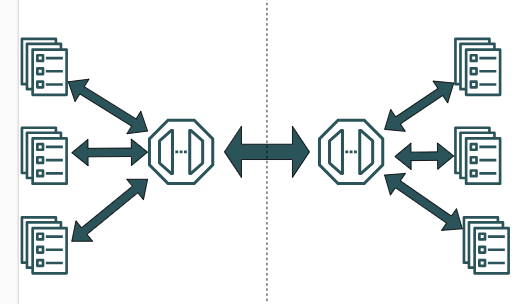

大模型多显卡多服务器并行计算方法与实践指南

一、分布式训练概述 大规模语言模型的训练通常需要分布式计算技术,以解决单机资源不足的问题。分布式训练主要分为两种模式: 数据并行:将数据分片到不同设备,每个设备拥有完整的模型副本 模型并行:将模型分割到不同设备,每个设备处理部分模型计算 现代大模型训练通常结合…...

SpringCloudGateway 自定义局部过滤器

场景: 将所有请求转化为同一路径请求(方便穿网配置)在请求头内标识原来路径,然后在将请求分发给不同服务 AllToOneGatewayFilterFactory import lombok.Getter; import lombok.Setter; import lombok.extern.slf4j.Slf4j; impor…...

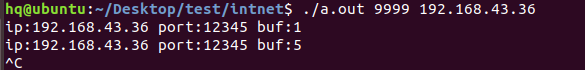

网络编程(UDP编程)

思维导图 UDP基础编程(单播) 1.流程图 服务器:短信的接收方 创建套接字 (socket)-----------------------------------------》有手机指定网络信息-----------------------------------------------》有号码绑定套接字 (bind)--------------…...

JAVA后端开发——多租户

数据隔离是多租户系统中的核心概念,确保一个租户(在这个系统中可能是一个公司或一个独立的客户)的数据对其他租户是不可见的。在 RuoYi 框架(您当前项目所使用的基础框架)中,这通常是通过在数据表中增加一个…...

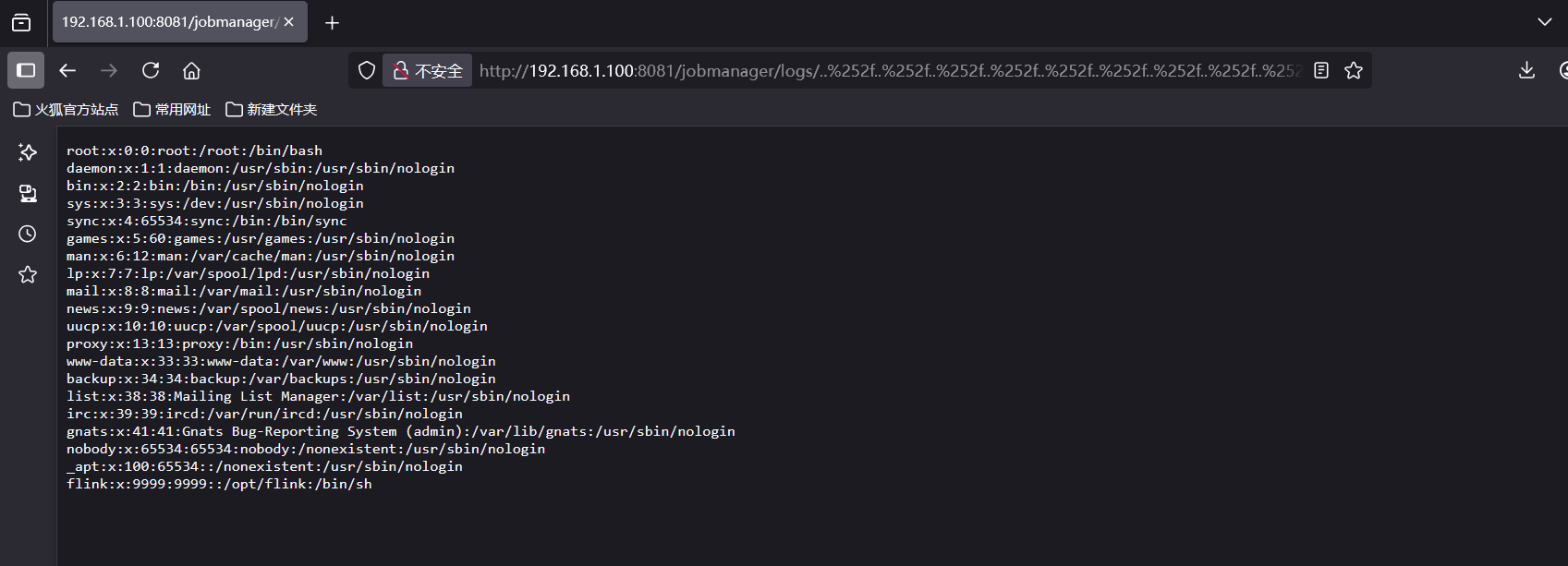

CVE-2020-17519源码分析与漏洞复现(Flink 任意文件读取)

漏洞概览 漏洞名称:Apache Flink REST API 任意文件读取漏洞CVE编号:CVE-2020-17519CVSS评分:7.5影响版本:Apache Flink 1.11.0、1.11.1、1.11.2修复版本:≥ 1.11.3 或 ≥ 1.12.0漏洞类型:路径遍历&#x…...

Java求职者面试指南:计算机基础与源码原理深度解析

Java求职者面试指南:计算机基础与源码原理深度解析 第一轮提问:基础概念问题 1. 请解释什么是进程和线程的区别? 面试官:进程是程序的一次执行过程,是系统进行资源分配和调度的基本单位;而线程是进程中的…...

如何更改默认 Crontab 编辑器 ?

在 Linux 领域中,crontab 是您可能经常遇到的一个术语。这个实用程序在类 unix 操作系统上可用,用于调度在预定义时间和间隔自动执行的任务。这对管理员和高级用户非常有益,允许他们自动执行各种系统任务。 编辑 Crontab 文件通常使用文本编…...

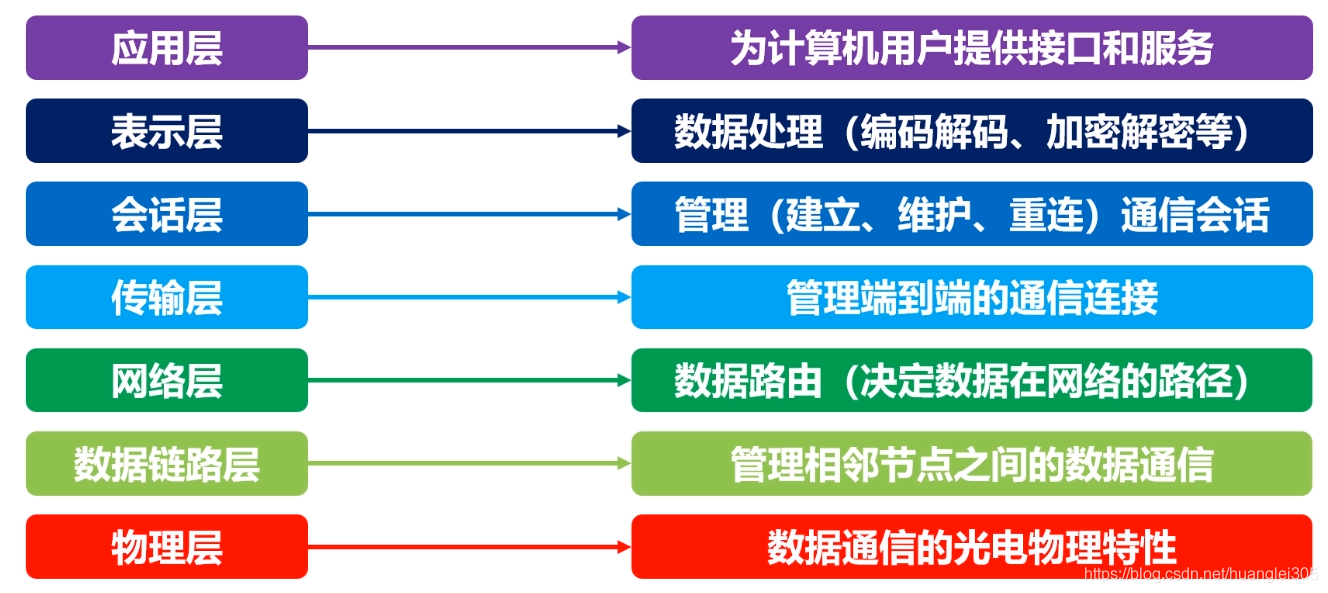

计算机基础知识解析:从应用到架构的全面拆解

目录 前言 1、 计算机的应用领域:无处不在的数字助手 2、 计算机的进化史:从算盘到量子计算 3、计算机的分类:不止 “台式机和笔记本” 4、计算机的组件:硬件与软件的协同 4.1 硬件:五大核心部件 4.2 软件&#…...